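Automated Deployment of a Binary k8s Cluster with Ansible

Ansible is an IT automation tool. It can configure systems, deploy software, and orchestrate more advanced IT tasks such as continuous deployment and rolling updates, which makes it well suited to managing enterprise IT infrastructure. Here I use Ansible to automate the deployment of a highly available Kubernetes v1.16 cluster (offline edition). Network access is still required, though, because the flannel, coredns, ingress, and dashboard add-ons are deployed as well and their images have to be pulled.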

Automated Deployment of a k8s 1.16.0 Cluster with Ansible

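Introduction

Deploy a k8s cluster automatically with Ansible (single-master and multi-master are both supported), offline edition.

Software architecture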

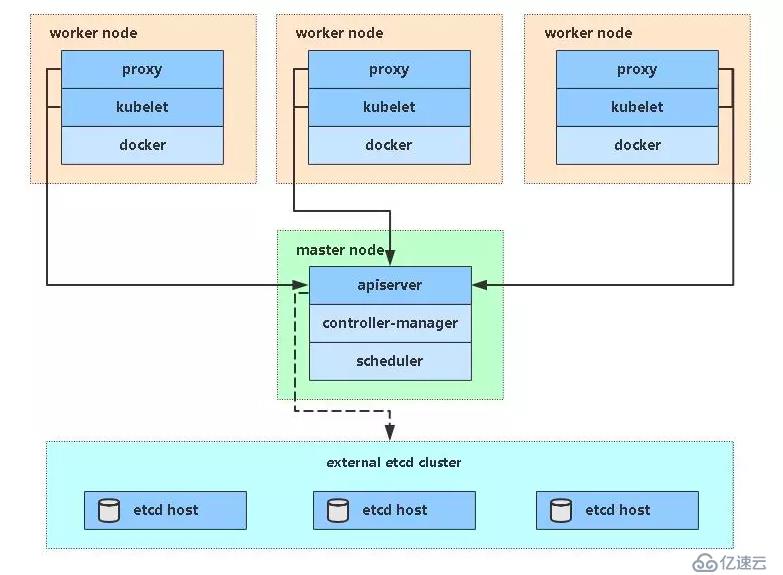

Architecture notes

Single-master architecture

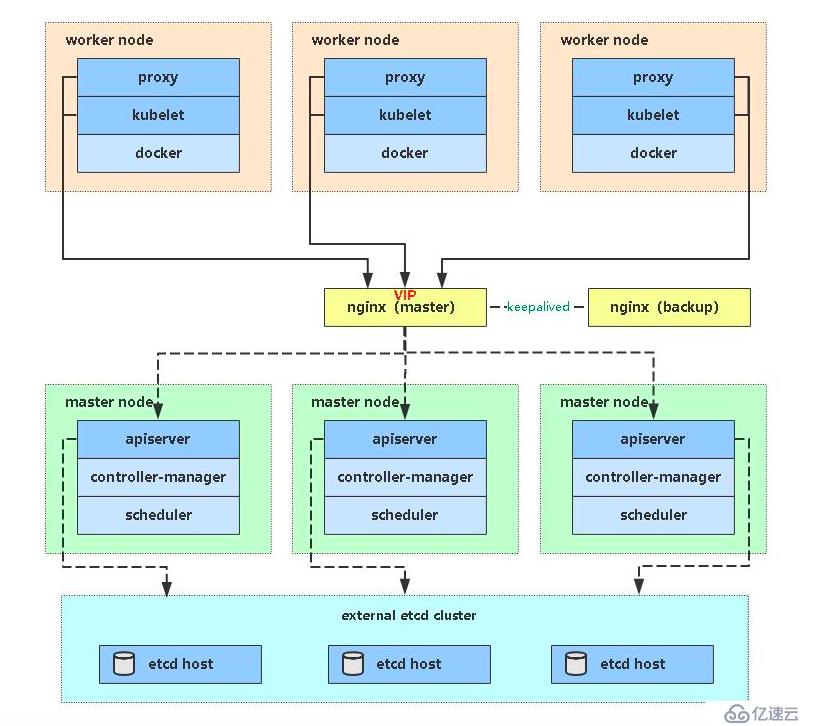

Multi-master architecture

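1. Installation

First deploy one Ansible host to act as the control node; the installation steps are omitted here.

Extract both files on the Ansible server. My working directory is /opt/, so place the extracted directories under /opt.

Edit the hosts file to specify whether the deployment is single-master or multi-master, then edit the variables in group_vars/all.yml and change the IPs to match your environment.

2. Usage notes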

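Single master, 4 CPU cores / 8 GB RAM (1 master, 2 nodes, 1 Ansible host)

Multi-master, 4 CPU cores / 8 GB RAM (2 masters, 2 nodes, 1 Ansible host, 2 nginx hosts)

For a multi-master deployment, keepalived must also run on the nginx hosts; on cloud hosts, an SLB can take its place.

1. System initialization

3. How the roles organize the deployment of each K8s component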

Writing recommendations:

Download the required files

Make sure the system time is consistent across all nodes
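One way to check the time requirement before running the playbooks is to read each node's clock and compare the values. The following is a minimal sketch and not part of the ansible-k8s repository: the function name max_skew and the host names in the usage comment are assumptions, and the usage presumes SSH access as root to every node.

```shell
#!/usr/bin/env bash
# Sketch: print the largest clock difference (in seconds) between nodes.
# Input on stdin: one "host epoch_seconds" pair per line.
max_skew() {
  awk '
    NR == 1  { min = $2; max = $2 }
    $2 < min { min = $2 }
    $2 > max { max = $2 }
    END      { if (NR > 0) print max - min; else print 0 }
  '
}

# Hypothetical usage (placeholder host names):
#   for h in k8s-master1 k8s-node1 k8s-node2; do
#     echo "$h $(ssh "root@$h" date +%s)"
#   done | max_skew
```

Because the nodes are queried one after another, a result of a second or two may simply reflect SSH latency. A larger value suggests that time synchronization should be fixed before deploying.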

4. Download the Ansible deployment files:

    git clone git@gitee.com:zhaocheng172/ansible-k8s.git

Before pulling the code, send me your public key; otherwise the clone will fail.

Download the software package and extract it:

https://pan.baidu.com/s/1Wf9sFR4zkpx_D0BJbZK7ZQ

    tar zxf binary_pkg.tar.gz
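Since the walkthrough keeps everything under /opt, the archive can also be extracted there directly from any directory. This is a small sketch under that assumption; the helper name unpack_to is hypothetical, and it only relies on the standard -C option of tar.

```shell
#!/usr/bin/env bash
# Sketch: extract a .tar.gz archive into a target directory.
# unpack_to DEST ARCHIVE
unpack_to() {
  local dest=$1 archive=$2
  # Fail early with a clear message if the archive is not there.
  [ -f "$archive" ] || { echo "missing archive: $archive" >&2; return 1; }
  mkdir -p "$dest" && tar zxf "$archive" -C "$dest"
}

# Usage matching the layout described above:
#   unpack_to /opt binary_pkg.tar.gz
```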

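Edit the Ansible files

Edit the hosts file, changing the IPs and names to match your plan.

    vi hosts

Edit group_vars/all.yml, changing the nic network interface and the IPs trusted by the certificates.

    vim group_vars/all.yml

    nic: eth0    # set to the name of your own network interface
    k8s:         # the trusted IPs

5. One-command deployment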

Single-master:

    ansible-playbook -i hosts single-master-deploy.yml -uroot -k

Multi-master:

    ansible-playbook -i hosts multi-master-deploy.yml -uroot -k

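6. Deployment control

If a particular stage of the installation fails, you can rerun just that stage.

For example, to run only the tasks for one stage, pass its tag with --tags:

    ansible-playbook -i hosts single-master-deploy.yml -uroot -k --tags master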
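The single-master, multi-master, and tagged invocations differ only in the playbook name and the trailing options, so they can be wrapped in one helper. This is a sketch rather than anything shipped with the repository: the names playbook_for, deploy, and DRY_RUN are assumptions, while the playbook file names and flags are the ones used in this article.

```shell
#!/usr/bin/env bash
# Sketch: map a mode ("single" or "multi") to its playbook file.
playbook_for() {
  case "$1" in
    single) echo "single-master-deploy.yml" ;;
    multi)  echo "multi-master-deploy.yml" ;;
    *)      echo "usage: deploy single|multi [ansible-playbook options]" >&2
            return 1 ;;
  esac
}

# deploy MODE [extra ansible-playbook options...]
# With DRY_RUN=1 the command line is printed instead of executed.
deploy() {
  local playbook
  playbook=$(playbook_for "$1") || return 1
  shift
  # Rebuild the argument list as the full command, keeping any extra options.
  set -- ansible-playbook -i hosts "$playbook" -uroot -k "$@"
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Examples:
#   deploy single                       # full single-master deployment
#   deploy single --tags master         # rerun only the tasks tagged "master"
#   DRY_RUN=1 deploy multi --list-tags  # show the command without running it
```

The --list-tags option of ansible-playbook prints the tags a playbook defines, which helps when choosing a value for --tags.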

Result after deploying single-master

    [root@k8s-master1 ~]# kubectl get node
    NAME          STATUS   ROLES    AGE    VERSION
    k8s-master1   Ready    <none>   2d3h   v1.16.0
    k8s-node1     Ready    <none>   2d3h   v1.16.0
    k8s-node2     Ready    <none>   2d3h   v1.16.0

    [root@k8s-master1 ~]# kubectl get cs
    NAME                 AGE
    controller-manager   <unknown>
    scheduler            <unknown>
    etcd-2               <unknown>
    etcd-0               <unknown>
    etcd-1               <unknown>

    [root@k8s-master1 ~]# kubectl get pod,svc -A
    NAMESPACE              NAME                                             READY   STATUS    RESTARTS   AGE
    ingress-nginx          pod/nginx-ingress-controller-8zp8r               1/1     Running   0          2d3h
    ingress-nginx          pod/nginx-ingress-controller-bfgj6               1/1     Running   0          2d3h
    ingress-nginx          pod/nginx-ingress-controller-n5k22               1/1     Running   0          2d3h
    kube-system            pod/coredns-59fb8d54d6-n6m5w                     1/1     Running   0          2d3h
    kube-system            pod/kube-flannel-ds-amd64-jwvw6                  1/1     Running   0          2d3h
    kube-system            pod/kube-flannel-ds-amd64-m92sg                  1/1     Running   0          2d3h
    kube-system            pod/kube-flannel-ds-amd64-xwf2h                  1/1     Running   0          2d3h
    kubernetes-dashboard   pod/dashboard-metrics-scraper-566cddb686-smw6p   1/1     Running   0          2d3h
    kubernetes-dashboard   pod/kubernetes-dashboard-c4bc5bd44-zgd82         1/1     Running   0          2d3h

    NAMESPACE              NAME                                TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)          AGE
    default                service/kubernetes                  ClusterIP   10.0.0.1     <none>        443/TCP          2d3h
    ingress-nginx          service/ingress-nginx               ClusterIP   10.0.0.22    <none>        80/TCP,443/TCP   2d3h
    kube-system            service/kube-dns                    ClusterIP   10.0.0.2     <none>        53/UDP,53/TCP    2d3h
    kubernetes-dashboard   service/dashboard-metrics-scraper   ClusterIP   10.0.0.176   <none>        8000/TCP         2d3h
    kubernetes-dashboard   service/kubernetes-dashboard        NodePort    10.0.0.72    <none>        443:30001/TCP    2d3h

Result after deploying multi-master

    [root@k8s-master1 ~]# kubectl get node
    NAME          STATUS   ROLES    AGE     VERSION
    k8s-master1   Ready    <none>   6m18s   v1.16.0
    k8s-master2   Ready    <none>   6m17s   v1.16.0
    k8s-node1     Ready    <none>   6m10s   v1.16.0
    k8s-node2     Ready    <none>   6m16s   v1.16.0

    [root@k8s-master1 ~]# kubectl get cs
    NAME                 AGE
    controller-manager   <unknown>
    scheduler            <unknown>
    etcd-2               <unknown>
    etcd-1               <unknown>
    etcd-0               <unknown>

    [root@k8s-master1 ~]# kubectl get pod,svc -A
    NAMESPACE              NAME                                             READY   STATUS    RESTARTS   AGE
    ingress-nginx          pod/nginx-ingress-controller-4nf6j               1/1     Running   0          45s
    ingress-nginx          pod/nginx-ingress-controller-5fknt               1/1     Running   0          45s
    ingress-nginx          pod/nginx-ingress-controller-lwbkz               1/1     Running   0          45s
    ingress-nginx          pod/nginx-ingress-controller-v8k8n               1/1     Running   0          45s
    kube-system            pod/coredns-59fb8d54d6-959xj                     1/1     Running   0          6m44s
    kube-system            pod/kube-flannel-ds-amd64-2hnzq                  1/1     Running   0          6m31s
    kube-system            pod/kube-flannel-ds-amd64-64hqc                  1/1     Running   0          6m25s
    kube-system            pod/kube-flannel-ds-amd64-p9d8w                  1/1     Running   0          6m32s
    kube-system            pod/kube-flannel-ds-amd64-pchp5                  1/1     Running   0          6m33s
    kubernetes-dashboard   pod/dashboard-metrics-scraper-566cddb686-kf4qq   1/1     Running   0          32s
    kubernetes-dashboard   pod/kubernetes-dashboard-c4bc5bd44-dqfb8         1/1     Running   0          32s

    NAMESPACE              NAME                                TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)          AGE
    default                service/kubernetes                  ClusterIP   10.0.0.1     <none>        443/TCP          19m
    ingress-nginx          service/ingress-nginx               ClusterIP   10.0.0.53    <none>        80/TCP,443/TCP   45s
    kube-system            service/kube-dns                    ClusterIP   10.0.0.2     <none>        53/UDP,53/TCP    6m47s
    kubernetes-dashboard   service/dashboard-metrics-scraper   ClusterIP   10.0.0.147   <none>        8000/TCP         32s
    kubernetes-dashboard   service/kubernetes-dashboard        NodePort    10.0.0.176   <none>        443:30001/TCP    32s

Scaling out Node nodes

To simulate the need to scale out, I request more resources than the current nodes can provide, so some pods cannot be scheduled and stay in the Pending state.

    [root@k8s-master1 ~]# kubectl run web --image=nginx --replicas=6 --requests="cpu=1,memory=256Mi"

    [root@k8s-master1 ~]# kubectl get pod
    NAME                  READY   STATUS    RESTARTS   AGE
    web-944cddf48-6qhcl   1/1     Running   0          15m
    web-944cddf48-7ldsv   1/1     Running   0          15m
    web-944cddf48-7nv9p   0/1     Pending   0          2s
    web-944cddf48-b299n   1/1     Running   0          15m
    web-944cddf48-nsxgg   0/1     Pending   0          15m
    web-944cddf48-pl4zt   1/1     Running   0          15m
    web-944cddf48-t8fqt   1/1     Running   0          15m

At this point the resource pool is exhausted and the remaining pods cannot be scheduled onto the current nodes, so the cluster needs more node capacity.
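To pick out the unscheduled pods without reading the whole table, the STATUS column can be filtered. The sketch below assumes the default column layout of kubectl get pod shown above (STATUS as the third column); the function name pending_pods is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: read `kubectl get pod --no-headers` output on stdin and
# print the names of pods whose STATUS column is "Pending".
pending_pods() {
  awk '$3 == "Pending" { print $1 }'
}

# Usage on a live cluster:
#   kubectl get pod --no-headers | pending_pods
# The server can also do the filtering:
#   kubectl get pod --field-selector=status.phase=Pending
# To see why a pod is not scheduled, check the Events section of:
#   kubectl describe pod <pod-name>
```

For a pod that is Pending because of resource requests, the events normally include a scheduler message saying that the nodes have insufficient CPU or memory.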

Run the playbook, specifying the new node

    [root@ansible ansible-install-k8s-master]# ansible-playbook -i hosts add-node.yml -uroot -k

Check that the request from the new node to join has been received and approved

    [root@k8s-master1 ~]# kubectl get csr
    NAME                                                   AGE   REQUESTOR           CONDITION
    node-csr-0i7BzFaf8NyG_cdx_hqDmWg8nd4FHQOqIxKa45x3BJU   45m   kubelet-bootstrap   Approved,Issued

Check the node status

    [root@k8s-master1 ~]# kubectl get node
    NAME          STATUS   ROLES    AGE     VERSION
    k8s-master1   Ready    <none>   7d      v1.16.0
    k8s-node1     Ready    <none>   7d      v1.16.0
    k8s-node2     Ready    <none>   7d      v1.16.0
    k8s-node3     Ready    <none>   2m52s   v1.16.0

Check that the pending pods have been scheduled onto the new node automatically

    [root@k8s-master1 ~]# kubectl get pod
    NAME                  READY   STATUS    RESTARTS   AGE
    web-944cddf48-6qhcl   1/1     Running   0          80m
    web-944cddf48-7ldsv   1/1     Running   0          80m
    web-944cddf48-7nv9p   1/1     Running   0          65m
    web-944cddf48-b299n   1/1     Running   0          80m
    web-944cddf48-nsxgg   1/1     Running   0          80m
    web-944cddf48-pl4zt   1/1     Running   0          80m
    web-944cddf48-t8fqt   1/1     Running   0          80m
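The default pod listing does not show which node each pod landed on. As a rough sketch, the node names can be pulled with a custom-columns query and tallied; the function name pods_per_node is an assumption, while custom-columns and --no-headers are standard kubectl output options.

```shell
#!/usr/bin/env bash
# Sketch: read one node name per line on stdin and print
# "node count" pairs, sorted by node name.
pods_per_node() {
  awk 'NF { count[$1]++ } END { for (n in count) print n, count[n] }' | sort
}

# Usage on a live cluster:
#   kubectl get pod -o custom-columns=NODE:.spec.nodeName --no-headers | pods_per_node
```

After the scale-out, the new node (k8s-node3 in this walkthrough) should appear in the tally with the pods that were previously Pending.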