This article walks through a worked example of crawling WeChat official account articles and comments with Python.

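Background

WeChat official accounts feel like one of the harder platforms to crawl, but after some trial and error I did get results. I did not use Scrapy (crawling too fast would probably trigger anti-crawling limits anyway), though I plan to write up more hands-on examples later. The development environment for this exercise: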

python3

requests

psycopg2 (for working with the PostgreSQL database)

Packet capture analysis

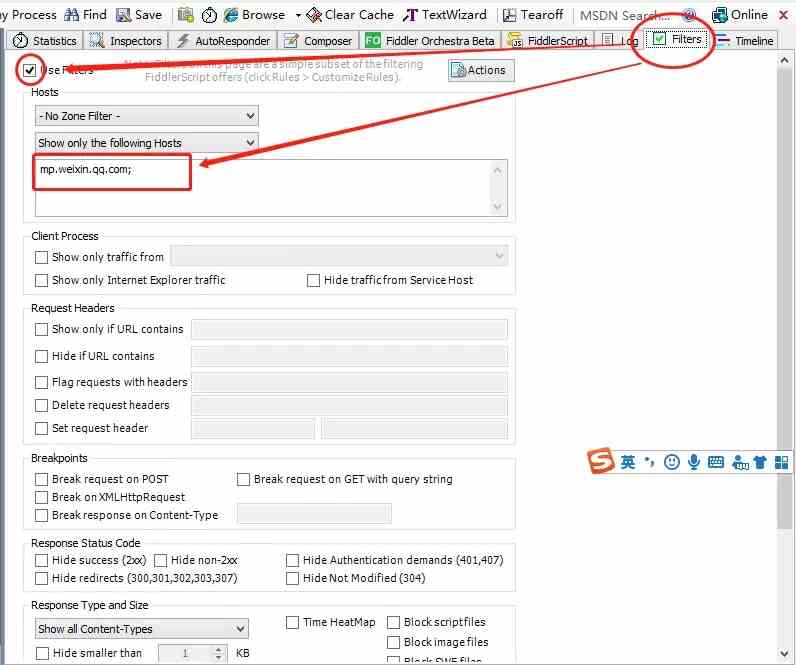

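This exercise places no restriction on which official account is crawled, but each account has to be analyzed before every crawl. Open Fiddler and configure the phone to use it as a proxy. To cut down on noise, add a filter rule to Fiddler so that only the WeChat domain mp.weixin.qq.com is shown:

Configuring the Filter rule in Fiddler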

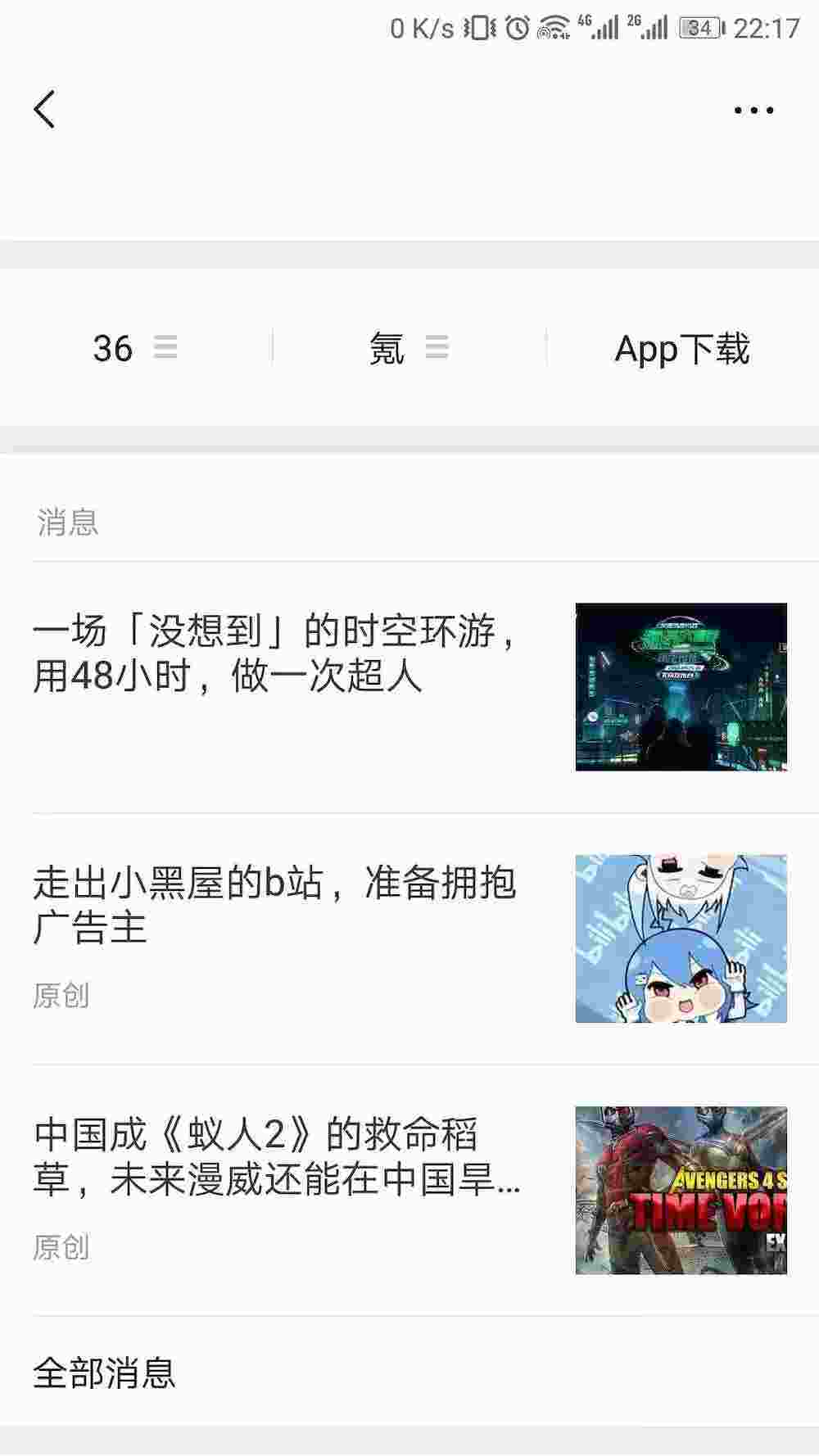

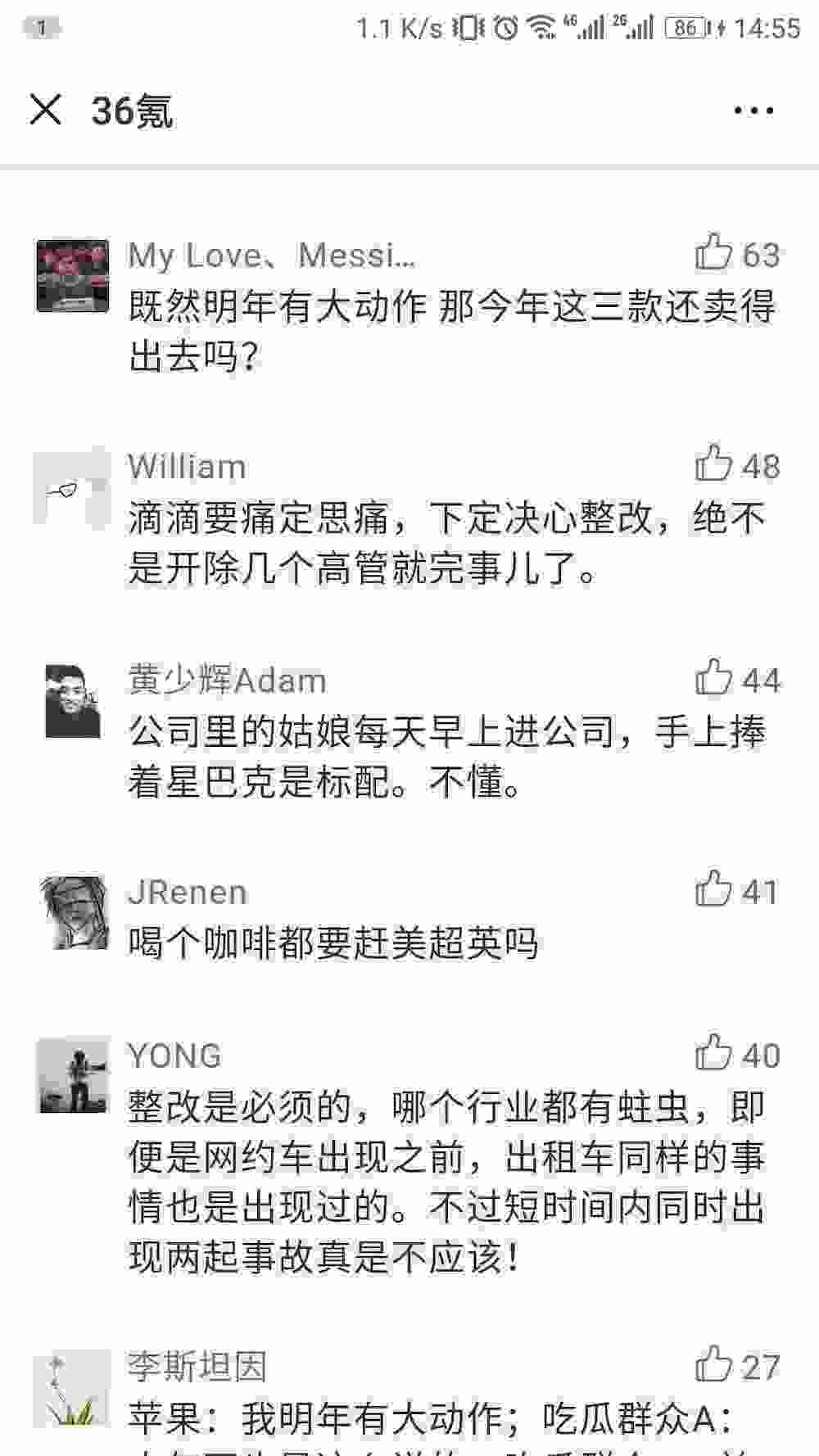

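I follow quite a few official accounts; this exercise uses the "36氪" (36Kr) account as an example. Read on:

The "36氪" official account

Top-right corner of the official account -> All messages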

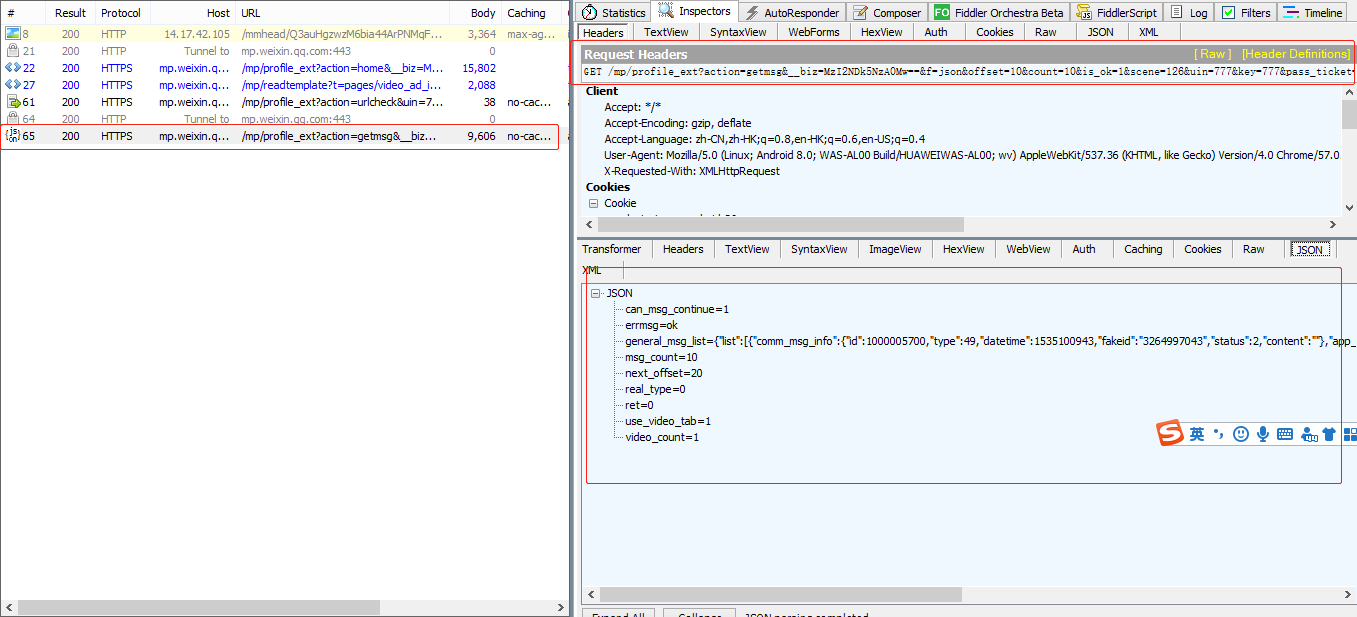

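On the account's home page, tap the three solid dots in the top-right corner to open the message screen, scroll down and tap "All messages" (全部消息), then scroll to load a few pages of historical articles. Back in Fiddler you should now see those requests. The responses are JSON, and the article data is stored as a JSON string in the general_msg_list field:

Captured request for the official account's article list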
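Since general_msg_list is a JSON string nested inside the JSON response, it has to be decoded a second time before the articles can be read. A minimal sketch of that step, using a trimmed-down, made-up response whose field names follow the captured data (the helper name extract_articles is hypothetical, not part of the author's code):

```python
import json
from datetime import datetime, timezone

# Made-up response: field names follow the captured data, values are shortened.
RAW_RESPONSE = json.dumps({
    "ret": 0,
    "errmsg": "ok",
    "next_offset": 20,
    "general_msg_list": json.dumps({
        "list": [{
            "comm_msg_info": {"id": 1000005700, "datetime": 1535100943},
            "app_msg_ext_info": {
                "title": "sample title",
                "author": "sample author",
                "multi_app_msg_item_list": [],
            },
        }]
    }),
})


def extract_articles(raw_response):
    """Decode the outer response, then the nested general_msg_list string."""
    outer = json.loads(raw_response)               # first decode: the response
    inner = json.loads(outer["general_msg_list"])  # second decode: the nested string
    articles = []
    for msg in inner["list"]:
        common = msg["comm_msg_info"]
        info = msg.get("app_msg_ext_info")
        if not info:  # skip messages that carry no article data
            continue
        articles.append({
            "msg_id": common["id"],
            "post_time": datetime.fromtimestamp(common["datetime"], tz=timezone.utc),
            "title": info["title"],
        })
    return outer["next_offset"], articles


next_offset, articles = extract_articles(RAW_RESPONSE)
print(next_offset, articles[0]["msg_id"], articles[0]["title"])
```

Returning next_offset together with the articles keeps the paging value next to the data it belongs to.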

Analyzing the article list API

Here are the request URL and Cookie for analysis:

https://mp.weixin.qq.com/mp/profile_ext?action=getmsg&__biz=MzI2NDk5NzA0Mw==&f=json&offset=10&count=10&is_ok=1&scene=126&uin=777&key=777&pass_ticket=QhOypNwH5dAr5w6UgMjyBrTSOdMEUT86vWc73GANoziWFl8xJd1hIMbMZ82KgCpN&wxtoken=&appmsg_token=971_LwY7Z%252BFBoaEv5z8k_dFWfJkdySbNkMR4OmFxNw~~&x5=1&f=json

Cookie: pgv_pvid=2027337976; pgv_info=ssid=s3015512850; rewardsn=; wxtokenkey=777; wxuin=2089823341; devicetype=android-26; version=26070237; lang=zh_CN;pass_ticket=NDndxxaZ7p6Z9PYulWpLqMbI0i3ULFeCPIHBFu1sf5pX2IhkGfyxZ6b9JieSYRUy;wap_sid2=CO3YwOQHEogBQnN4VTNhNmxQWmc3UHI2U3kteWhUeVExZHFVMnN0QXlsbzVJRUJKc1pkdVFUU2Y5UzhSVEtOZmt1VVlYTkR4SEllQ2huejlTTThJWndMQzZfYUw2SldLVGVMQUthUjc3QWdVMUdoaGN0Nml2SU05cXR1dTN2RkhRUVd1V2Y3SFJ5d01BQUF+fjCB1pLcBTgNQJVO

The important parameters are explained below; anything not mentioned matters less:

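__biz: effectively the id of the current official account (a unique, fixed identifier)

offset: the offset marker for the article list request (starting at 0). Each JSON response contains the offset for the next request (next_offset); note that it does not increase by any fixed rule

count: the number of items per request (in my tests the maximum is 10)

pass_ticket: can be thought of as a request ticket. It expires after a while (roughly a few hours), which is why official accounts are hard to crawl with a fixed set of rules

appmsg_token: likewise a non-fixed ticket with an expiry policy

Cookie: the whole string can be pasted in, but the wap_sid2 part alone is the minimum required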
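These values can be pulled out of a captured URL and Cookie rather than copied by hand. A rough sketch using only the standard library; the URL and Cookie below are shortened placeholders rather than real credentials, and the helper names extract_request_params and extract_wap_sid2 are assumptions made for illustration:

```python
from urllib.parse import parse_qs, urlsplit

# Placeholder capture: same shape as the request above, with dummy ticket values.
CAPTURED_URL = (
    "https://mp.weixin.qq.com/mp/profile_ext?action=getmsg"
    "&__biz=MzI2NDk5NzA0Mw==&f=json&offset=10&count=10"
    "&pass_ticket=EXAMPLE_TICKET&appmsg_token=971_EXAMPLE~~&x5=1&f=json"
)
CAPTURED_COOKIE = (
    "pgv_pvid=2027337976; lang=zh_CN;pass_ticket=EXAMPLE_TICKET;"
    "wap_sid2=EXAMPLE_SID=="
)

WANTED = ("__biz", "offset", "count", "pass_ticket", "appmsg_token")


def extract_request_params(url):
    """Return the parameters discussed above from a captured request URL."""
    query = parse_qs(urlsplit(url).query, keep_blank_values=True)
    # parse_qs returns a list per key and percent-decodes each value once.
    return {key: query[key][0] for key in WANTED}


def extract_wap_sid2(cookie):
    """Return only the wap_sid2 pair from a Cookie header value, or None."""
    for part in cookie.split(";"):
        name, _, value = part.strip().partition("=")
        if name == "wap_sid2":
            return "wap_sid2=" + value
    return None


params = extract_request_params(CAPTURED_URL)
print(params["__biz"], params["offset"], extract_wap_sid2(CAPTURED_COOKIE))
```

Splitting each cookie pair on the first "=" only keeps values that themselves end in "=" padding intact.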

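This may feel like a hassle, but since the goal is not a large-scale, professional crawler, analyzing a single official account this way is enough to keep going. Here is an excerpt of the JSON data, used to design the article table: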

{

"ret": 0,

"errmsg": "ok",

"msg_count": 10,

"can_msg_continue": 1,

"general_msg_list": "{\"list\":[{\"comm_msg_info\":{\"id\":1000005700,\"type\":49,\"datetime\":1535100943,\"fakeid\":\"3264997043\",\"status\":2,\"content\":\"\"},\"app_msg_ext_info\":{\"title\":\"金融危机又十年:钱荒之下,二手基金迎来高光时刻\",\"digest\":\"退出永远是基金的主旋律。\",\"content\":\"\",\"fileid\":100034824,\"content_url\":\"http:\\/\\/mp.weixin.qq.com\\/s?__biz=MzI2NDk5NzA0Mw==&mid=2247518479&idx=1&sn=124ab52f7478c1069a6b4592cdf3c5f5&chksm=eaa6d8d3ddd151c5bb95a7ae118de6d080023246aa0a419e1d53bfe48a8d9a77e52b752d9b80&scene=27#wechat_redirect\",\"source_url\":\"\",\"cover\":\"http:\\/\\/mmbiz.qpic.cn\\/mmbiz_jpg\\/QicyPhNHD5vYgdpprkibtnWCAN7l4ZaqibKvopNyCWWLQAwX7QpzWicnQSVfcBZmPrR5YuHS45JIUzVjb0dZTiaLPyA\\/0?wx_fmt=jpeg\",\"subtype\":9,\"is_multi\":0,\"multi_app_msg_item_list\":[],\"author\":\"石亚琼\",\"copyright_stat\":11,\"duration\":0,\"del_flag\":1,\"item_show_type\":0,\"audio_fileid\":0,\"play_url\":\"\",\"malicious_title_reason_id\":0,\"malicious_content_type\":0}}]}",

"next_offset": 20,

"video_count": 1,

"use_video_tab": 1,

"real_type": 0

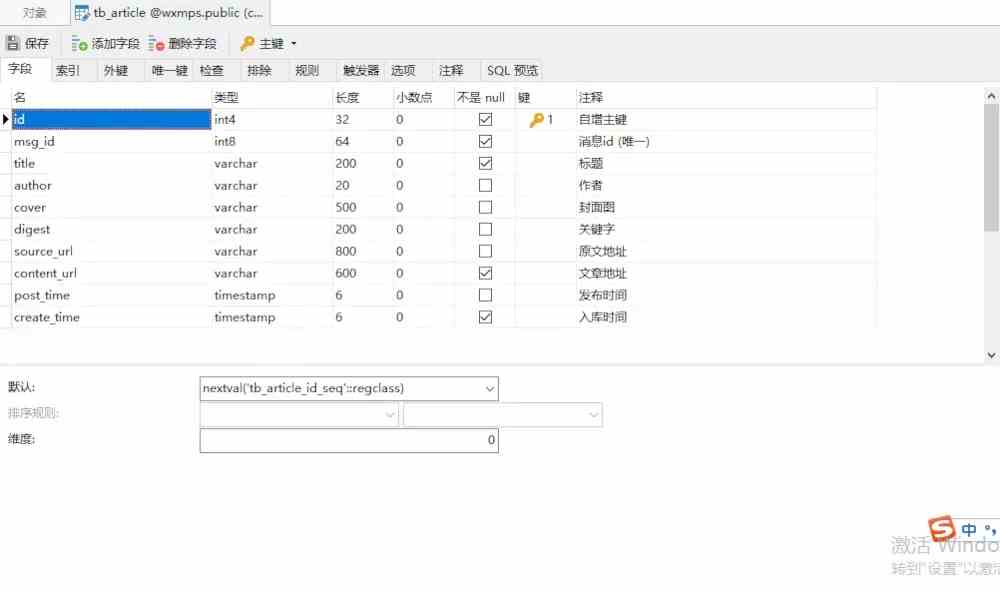

}可以简单抽取想要的数据,这里将文章表结构定义如下,顺便贴上建表的SQL语句:

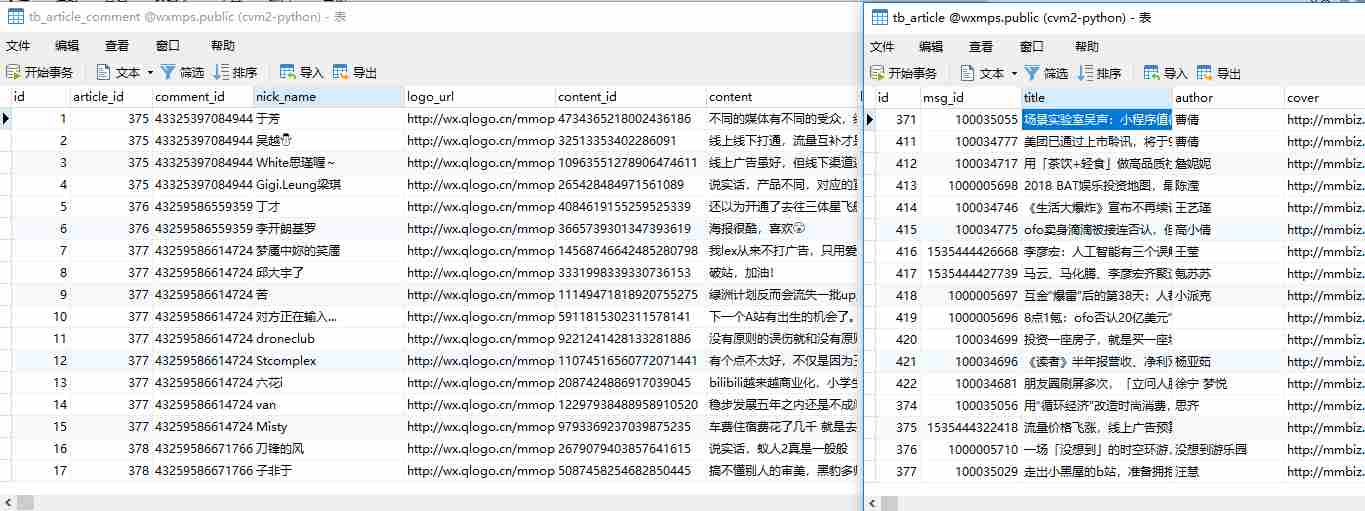

Article table

-- ----------------------------

-- Table structure for tb_article

-- ----------------------------

DROP TABLE IF EXISTS "public"."tb_article";

CREATE TABLE "public"."tb_article" (

"id" serial4 PRIMARY KEY,

"msg_id" int8 NOT NULL,

"title" varchar(200) COLLATE "pg_catalog"."default" NOT NULL,

"author" varchar(20) COLLATE "pg_catalog"."default",

"cover" varchar(500) COLLATE "pg_catalog"."default",

"digest" varchar(200) COLLATE "pg_catalog"."default",

"source_url" varchar(800) COLLATE "pg_catalog"."default",

"content_url" varchar(600) COLLATE "pg_catalog"."default" NOT NULL,

"post_time" timestamp(6),

"create_time" timestamp(6) NOT NULL

)

;

COMMENT ON COLUMN "public"."tb_article"."id" IS 'Auto-increment primary key';
COMMENT ON COLUMN "public"."tb_article"."msg_id" IS 'Message id (unique)';
COMMENT ON COLUMN "public"."tb_article"."title" IS 'Title';
COMMENT ON COLUMN "public"."tb_article"."author" IS 'Author';
COMMENT ON COLUMN "public"."tb_article"."cover" IS 'Cover image';
COMMENT ON COLUMN "public"."tb_article"."digest" IS 'Digest (summary)';
COMMENT ON COLUMN "public"."tb_article"."source_url" IS 'Original source URL';
COMMENT ON COLUMN "public"."tb_article"."content_url" IS 'Article URL';
COMMENT ON COLUMN "public"."tb_article"."post_time" IS 'Publish time';
COMMENT ON COLUMN "public"."tb_article"."create_time" IS 'Insert time';
COMMENT ON TABLE "public"."tb_article" IS 'Official account article table';

-- ----------------------------

-- Indexes structure for table tb_article

-- ----------------------------

CREATE UNIQUE INDEX "unique_msg_id" ON "public"."tb_article" USING btree (

"msg_id" "pg_catalog"."int8_ops" ASC NULLS LAST

);

The code below requests the article API, parses the data and saves it to the database:

    import json
    import time
    from datetime import datetime

    import requests

    from utils import pgs  # the author's own PostgreSQL helper module (not shown in this article)


    class WxMps(object):
        """Crawler for WeChat official account articles and comments"""

        def __init__(self, _biz, _pass_ticket, _app_msg_token, _cookie, _offset=0):
            self.offset = _offset
            self.biz = _biz  # official account identifier
            self.msg_token = _app_msg_token  # ticket (not fixed)
            self.pass_ticket = _pass_ticket  # ticket (not fixed)
            self.headers = {
                'Cookie': _cookie,  # Cookie (not fixed)
                'User-Agent': 'Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) Version/4.0 Chrome/57.0.2987.132 '
            }
            wx_mps = 'wxmps'  # database name, user and password are identical here (replace with real values)
            self.postgres = pgs.Pgs(host='localhost', port='5432', db_name=wx_mps, user=wx_mps, password=wx_mps)

        def start(self):
            """Request the official account's article list API"""
            offset = self.offset
            while True:
                api = 'https://mp.weixin.qq.com/mp/profile_ext?action=getmsg&__biz={0}&f=json&offset={1}' \
                      '&count=10&is_ok=1&scene=124&uin=777&key=777&pass_ticket={2}&wxtoken=&appmsg_token' \
                      '={3}&x5=1&f=json'.format(self.biz, offset, self.pass_ticket, self.msg_token)

                resp = requests.get(api, headers=self.headers).json()
                ret, status = resp.get('ret'), resp.get('errmsg')  # status info
                if ret == 0 or status == 'ok':
                    print('Crawl article: ' + api)
                    offset = resp['next_offset']  # offset for the next request
                    general_msg_list = resp['general_msg_list']
                    msg_list = json.loads(general_msg_list)['list']  # article list
                    for msg in msg_list:
                        comm_msg_info = msg['comm_msg_info']  # shared by all articles in this push
                        msg_id = comm_msg_info['id']  # article id
                        post_time = datetime.fromtimestamp(comm_msg_info['datetime'])  # publish time
                        # msg_type = comm_msg_info['type']  # article type
                        # msg_data = json.dumps(comm_msg_info, ensure_ascii=False)  # raw msg data

                        app_msg_ext_info = msg.get('app_msg_ext_info')  # raw article data
                        if app_msg_ext_info:
                            # first article of this push
                            self._parse_articles(app_msg_ext_info, msg_id, post_time)
                            # remaining articles of this push
                            multi_app_msg_item_list = app_msg_ext_info.get('multi_app_msg_item_list')
                            if multi_app_msg_item_list:
                                for item in multi_app_msg_item_list:
                                    msg_id = item['fileid']  # article id
                                    if msg_id == 0:
                                        # use a timestamp as the id to avoid unique-index conflicts when id=0
                                        msg_id = int(time.time() * 1000)
                                    self._parse_articles(item, msg_id, post_time)
                    print('next offset is %d' % offset)
                    # assumed from the field name: an explicit 0 means there are no more pages
                    if resp.get('can_msg_continue', 1) == 0:
                        break
                else:
                    print('Before break , Current offset is %d' % offset)
                    break

        def _parse_articles(self, info, msg_id, post_time):
            """Parse the nested article data and save it to the database"""
            title = info.get('title')  # title
            cover = info.get('cover')  # cover image
            author = info.get('author')  # author
            digest = info.get('digest')  # digest (summary)
            source_url = info.get('source_url')  # original source URL
            content_url = info.get('content_url')  # WeChat article URL
            # ext_data = json.dumps(info, ensure_ascii=False)  # raw data

            self.postgres.handler(self._save_article(), (msg_id, title, author, cover, digest,
                                                         source_url, content_url, post_time,
                                                         datetime.now()), fetch=True)

        @staticmethod
        def _save_article():
            sql = 'insert into tb_article(msg_id,title,author,cover,digest,source_url,content_url,post_time,create_time) ' \
                  'values(%s,%s,%s,%s,%s,%s,%s,%s,%s)'
            return sql


    if __name__ == '__main__':
        biz = 'MzI2NDk5NzA0Mw=='  # "36氪"
        pass_ticket = 'NDndxxaZ7p6Z9PYulWpLqMbI0i3ULFeCPIHBFu1sf5pX2IhkGfyxZ6b9JieSYRUy'
        app_msg_token = '971_Z0lVNQBcGsWColSubRO9H13ZjrPhjuljyxLtiQ~~'
        cookie = 'wap_sid2=CO3YwOQHEogBQnN4VTNhNmxQWmc3UHI2U3kteWhUeVExZHFVMnN0QXlsbzVJRUJKc1pkdVFUU2Y5UzhSVEtOZmt1VVlYTkR4SEllQ2huejlTTThJWndMQzZfYUw2SldLVGVMQUthUjc3QWdVMUdoaGN0Nml2SU05cXR1dTN2RkhRUVd1V2Y3SFJ5d01BQUF+fjCB1pLcBTgNQJVO'
        # the values above must be refreshed with a packet capture tool for each account and each crawl
        wxMps = WxMps(biz, pass_ticket, app_msg_token, cookie)
        wxMps.start()  # start crawling articles

Analyzing the article comment API

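The approach for fetching comments is much the same, only a little more troublesome. First open an article that has comments on the phone, then look at the requests Fiddler captured:

Official account article comments

Captured request for the article comment API

Extract the URL and Cookie and analyze them again: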

https://mp.weixin.qq.com/mp/appmsg_comment?action=getcomment&scene=0&__biz=MzI2NDk5NzA0Mw==&appmsgid=2247518723&idx=1&comment_id=433253969406607362&offset=0&limit=100&uin=777&key=777&pass_ticket=NDndxxaZ7p6Z9PYulWpLqMbI0i3ULFeCPIHBFu1sf5pX2IhkGfyxZ6b9JieSYRUy&wxtoken=777&devicetype=android-26&clientversion=26070237&appmsg_token=971_dLK7htA1j8LbMUk8pvJKRlC_o218HEgwDbS9uARPOyQ34_vfXv3iDstqYnq2gAyze1dBKm4ZMTlKeyfx&x5=1&f=json

Cookie: pgv_pvid=2027337976; pgv_info=ssid=s3015512850; rewardsn=; wxuin=2089823341; devicetype=android-26; version=26070237; lang=zh_CN; pass_ticket=NDndxxaZ7p6Z9PYulWpLqMbI0i3ULFeCPIHBFu1sf5pX2IhkGfyxZ6b9JieSYRUy; wap_sid2=CO3YwOQHEogBdENPSVdaS3pHOWc1V2QzY1NvZG9PYk1DMndPS3NfbGlHM0Vfal8zLU9kcUdkWTQxdUYwckFBT3RZM1VYUXFaWkFad3NVaWFXZ28zbEFIQ2pTa1lqZktfb01vcGdPLTQ0aGdJQ2xOSXoxTVFvNUg3SVpBMV9GRU1lbnotci1MWWl5d01BQUF+fjCj45PcBTgNQAE=; wxtokenkey=777

Next, the parameters:

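__biz: same as above

pass_ticket: same as above

Cookie: same as above

offset and limit: the offset and the number of comments requested. An article shows at most 100 comments, so these two parameters can be left unchanged

comment_id: the marker id used to fetch an article's comments. It is fixed, but has to be parsed out of the current article's HTML

appmsgid: ticket id. It is not fixed and has to be parsed out of the current article's HTML each time

appmsg_token: ticket token. It is not fixed and has to be parsed out of the current article's HTML each time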
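The three HTML-derived values sit in inline JavaScript on the article page. A small sketch of pulling them out with regular expressions, assuming the page contains lines shaped like the ones below; the fragment is a hypothetical reconstruction, the token is a placeholder, and the stricter patterns and the helper name extract_comment_params are illustrative choices rather than the author's code:

```python
import re

# Hypothetical page fragment; the ids are the ones visible in the captured comment URL.
SAMPLE_HTML = '''
<script>
var comment_id = "433253969406607362" || "0" * 1;
var appmsgid = '' || '2247518723'|| "";
window.appmsg_token = "971_EXAMPLE_TOKEN";
</script>
'''

# One capture group per parameter; each pattern stops at the closing quote.
PATTERNS = {
    "comment_id": r'var comment_id = "(\d+)"',
    "appmsgid": r"var appmsgid = '' \|\| '(\d+)'",
    "appmsg_token": r'window\.appmsg_token = "([^"]*)"',
}


def extract_comment_params(html):
    """Return the three values as a dict, or None if any is missing or empty."""
    found = {}
    for name, pattern in PATTERNS.items():
        match = re.search(pattern, html)
        if not match or not match.group(1):
            return None
        found[name] = match.group(1)
    return found


print(extract_comment_params(SAMPLE_HTML))
```

Returning None when any value is absent mirrors the requirement that all three parameters be present before the comment API is called.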

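As the list shows, the last three parameters have to be parsed out of the HTML (it took me a long time before I thought of looking at the article page's structure). The article URL comes from the article list API, in the content_url field above, but it needs some cleanup before it is requested. Otherwise the three parameters will be missing from the page and the comments cannot be fetched.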
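The URL cleanup amounts to undoing the HTML escaping of "&", dropping the #wechat_redirect fragment and switching to https. One possible way to do that with the standard library (normalize_content_url is a hypothetical helper name, and the sample URL is shortened):

```python
from urllib.parse import urlsplit, urlunsplit


def normalize_content_url(content_url):
    """Undo '&amp;' escaping, drop the fragment, and force the https scheme."""
    parts = urlsplit(content_url.replace("&amp;", "&"))
    # Rebuild the URL with an explicit scheme and an empty fragment.
    return urlunsplit(("https", parts.netloc, parts.path, parts.query, ""))


raw = ("http://mp.weixin.qq.com/s?__biz=MzI2NDk5NzA0Mw==&amp;mid=2247518479"
       "&amp;idx=1#wechat_redirect")
print(normalize_content_url(raw))
```

Setting the scheme through urlsplit and urlunsplit, rather than through a plain string replace, also leaves URLs that already use https unchanged.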

    def _parse_article_detail(self, content_url, article_id):
        """Extract the parameters needed to fetch comments from the article page; article_id is the id of the saved article"""
        try:
            # replace 'http://' rather than 'http' so an https URL does not become 'httpss'
            api = content_url.replace('amp;', '').replace('#wechat_redirect', '').replace('http://', 'https://')
            html = requests.get(api, headers=self.headers).text
        except requests.RequestException:
            print('Failed to fetch comments: ' + content_url)
        else:
            # group(0) is the whole matched line
            str_comment = re.search(r'var comment_id = "(.*)" \|\| "(.*)" \* 1;', html)
            str_msg = re.search(r"var appmsgid = '' \|\| '(.*)'\|\|", html)
            str_token = re.search(r'window\.appmsg_token = "(.*)";', html)

            if str_comment and str_msg and str_token:
                comment_id = str_comment.group(1)  # comment id (fixed)
                app_msg_id = str_msg.group(1)  # ticket id (not fixed)
                appmsg_token = str_token.group(1)  # ticket token (not fixed)

Now look at the JSON this API returns. After analyzing its structure, the table is defined as follows (with SQL):

Article comment table

-- ----------------------------

-- Table structure for tb_article_comment

-- ----------------------------

DROP TABLE IF EXISTS "public"."tb_article_comment";

CREATE TABLE "public"."tb_article_comment" (

"id" serial4 PRIMARY KEY,

"article_id" int4 NOT NULL,

"comment_id" varchar(50) COLLATE "pg_catalog"."default",

"nick_name" varchar(50) COLLATE "pg_catalog"."default" NOT NULL,

"logo_url" varchar(300) COLLATE "pg_catalog"."default",

"content_id" varchar(50) COLLATE "pg_catalog"."default" NOT NULL,

"content" varchar(3000) COLLATE "pg_catalog"."default" NOT NULL,

"like_num" int2,

"comment_time" timestamp(6),

"create_time" timestamp(6) NOT NULL

)

;

COMMENT ON COLUMN "public"."tb_article_comment"."id" IS 'Auto-increment primary key';
COMMENT ON COLUMN "public"."tb_article_comment"."article_id" IS 'Foreign key to the article id';
COMMENT ON COLUMN "public"."tb_article_comment"."comment_id" IS 'Comment API id';
COMMENT ON COLUMN "public"."tb_article_comment"."nick_name" IS 'User nickname';
COMMENT ON COLUMN "public"."tb_article_comment"."logo_url" IS 'Avatar URL';
COMMENT ON COLUMN "public"."tb_article_comment"."content_id" IS 'Comment id (unique)';
COMMENT ON COLUMN "public"."tb_article_comment"."content" IS 'Comment content';
COMMENT ON COLUMN "public"."tb_article_comment"."like_num" IS 'Number of likes';
COMMENT ON COLUMN "public"."tb_article_comment"."comment_time" IS 'Comment time';
COMMENT ON COLUMN "public"."tb_article_comment"."create_time" IS 'Insert time';
COMMENT ON TABLE "public"."tb_article_comment" IS 'Official account article comment table';

-- ----------------------------

-- Indexes structure for table tb_article_comment

-- ----------------------------

CREATE UNIQUE INDEX "unique_content_id" ON "public"."tb_article_comment" USING btree (

"content_id" COLLATE "pg_catalog"."default" "pg_catalog"."text_ops" ASC NULLS LAST

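);

The long march is nearly over. Here is the final code. Because the article URL has to be fetched first, it is combined with the article-crawling code above: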

    import json
    import re
    import time
    from datetime import datetime

    import requests

    from utils import pgs  # the author's own PostgreSQL helper module (not shown in this article)


    class WxMps(object):
        """Crawler for WeChat official account articles and comments"""

        def __init__(self, _biz, _pass_ticket, _app_msg_token, _cookie, _offset=0):
            self.offset = _offset
            self.biz = _biz  # official account identifier
            self.msg_token = _app_msg_token  # ticket (not fixed)
            self.pass_ticket = _pass_ticket  # ticket (not fixed)
            self.headers = {
                'Cookie': _cookie,  # Cookie (not fixed)
                'User-Agent': 'Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko) Version/4.0 Chrome/57.0.2987.132 '
            }
            wx_mps = 'wxmps'  # database name, user and password are identical here (replace with real values)
            self.postgres = pgs.Pgs(host='localhost', port='5432', db_name=wx_mps, user=wx_mps, password=wx_mps)

        def start(self):
            """Request the official account's article list API"""
            offset = self.offset
            while True:
                api = 'https://mp.weixin.qq.com/mp/profile_ext?action=getmsg&__biz={0}&f=json&offset={1}' \
                      '&count=10&is_ok=1&scene=124&uin=777&key=777&pass_ticket={2}&wxtoken=&appmsg_token' \
                      '={3}&x5=1&f=json'.format(self.biz, offset, self.pass_ticket, self.msg_token)

                resp = requests.get(api, headers=self.headers).json()
                ret, status = resp.get('ret'), resp.get('errmsg')  # status info
                if ret == 0 or status == 'ok':
                    print('Crawl article: ' + api)
                    offset = resp['next_offset']  # offset for the next request
                    general_msg_list = resp['general_msg_list']
                    msg_list = json.loads(general_msg_list)['list']  # article list
                    for msg in msg_list:
                        comm_msg_info = msg['comm_msg_info']  # shared by all articles in this push
                        msg_id = comm_msg_info['id']  # article id
                        post_time = datetime.fromtimestamp(comm_msg_info['datetime'])  # publish time
                        # msg_type = comm_msg_info['type']  # article type
                        # msg_data = json.dumps(comm_msg_info, ensure_ascii=False)  # raw msg data

                        app_msg_ext_info = msg.get('app_msg_ext_info')  # raw article data
                        if app_msg_ext_info:
                            # first article of this push
                            self._parse_articles(app_msg_ext_info, msg_id, post_time)
                            # remaining articles of this push
                            multi_app_msg_item_list = app_msg_ext_info.get('multi_app_msg_item_list')
                            if multi_app_msg_item_list:
                                for item in multi_app_msg_item_list:
                                    msg_id = item['fileid']  # article id
                                    if msg_id == 0:
                                        # use a timestamp as the id to avoid unique-index conflicts when id=0
                                        msg_id = int(time.time() * 1000)
                                    self._parse_articles(item, msg_id, post_time)
                    print('next offset is %d' % offset)
                    # assumed from the field name: an explicit 0 means there are no more pages
                    if resp.get('can_msg_continue', 1) == 0:
                        break
                else:
                    print('Before break , Current offset is %d' % offset)
                    break

        def _parse_articles(self, info, msg_id, post_time):
            """Parse the nested article data and save it to the database"""
            title = info.get('title')  # title
            cover = info.get('cover')  # cover image
            author = info.get('author')  # author
            digest = info.get('digest')  # digest (summary)
            source_url = info.get('source_url')  # original source URL
            content_url = info.get('content_url')  # WeChat article URL
            # ext_data = json.dumps(info, ensure_ascii=False)  # raw data

            # replace 'http://' rather than 'http' so an https URL does not become 'httpss'
            content_url = content_url.replace('amp;', '').replace('#wechat_redirect', '').replace('http://', 'https://')
            article_id = self.postgres.handler(self._save_article(), (msg_id, title, author, cover, digest,
                                                                      source_url, content_url, post_time,
                                                                      datetime.now()), fetch=True)
            if article_id:
                self._parse_article_detail(content_url, article_id)

        def _parse_article_detail(self, content_url, article_id):
            """Extract the parameters needed to fetch comments from the article page; article_id is the id of the saved article"""
            try:
                html = requests.get(content_url, headers=self.headers).text
            except requests.RequestException:
                print('Failed to fetch comments: ' + content_url)
            else:
                # group(0) is the whole matched line
                str_comment = re.search(r'var comment_id = "(.*)" \|\| "(.*)" \* 1;', html)
                str_msg = re.search(r"var appmsgid = '' \|\| '(.*)'\|\|", html)
                str_token = re.search(r'window\.appmsg_token = "(.*)";', html)

                if str_comment and str_msg and str_token:
                    comment_id = str_comment.group(1)  # comment id (fixed)
                    app_msg_id = str_msg.group(1)  # ticket id (not fixed)
                    appmsg_token = str_token.group(1)  # ticket token (not fixed)

                    # all three are required
                    if appmsg_token and app_msg_id and comment_id:
                        print('Crawl article comments: ' + content_url)
                        self._crawl_comments(app_msg_id, comment_id, appmsg_token, article_id)

        def _crawl_comments(self, app_msg_id, comment_id, appmsg_token, article_id):
            """Crawl the comments of an article"""
            api = 'https://mp.weixin.qq.com/mp/appmsg_comment?action=getcomment&scene=0&__biz={0}' \
                  '&appmsgid={1}&idx=1&comment_id={2}&offset=0&limit=100&uin=777&key=777' \
                  '&pass_ticket={3}&wxtoken=777&devicetype=android-26&clientversion=26060739' \
                  '&appmsg_token={4}&x5=1&f=json'.format(self.biz, app_msg_id, comment_id,
                                                         self.pass_ticket, appmsg_token)
            resp = requests.get(api, headers=self.headers).json()
            ret, status = resp['base_resp']['ret'], resp['base_resp']['errmsg']
            if ret == 0 or status == 'ok':
                elected_comment = resp['elected_comment']
                for comment in elected_comment:
                    nick_name = comment.get('nick_name')  # nickname
                    logo_url = comment.get('logo_url')  # avatar
                    comment_time = datetime.fromtimestamp(comment.get('create_time'))  # comment time
                    content = comment.get('content')  # comment content
                    content_id = comment.get('content_id')  # id
                    like_num = comment.get('like_num')  # number of likes
                    # reply_list = comment.get('reply')['reply_list']  # reply data

                    self.postgres.handler(self._save_article_comment(), (article_id, comment_id, nick_name, logo_url,
                                                                         content_id, content, like_num, comment_time,
                                                                         datetime.now()))

        @staticmethod
        def _save_article():
            sql = 'insert into tb_article(msg_id,title,author,cover,digest,source_url,content_url,post_time,create_time) ' \
                  'values(%s,%s,%s,%s,%s,%s,%s,%s,%s) returning id'
            return sql

        @staticmethod
        def _save_article_comment():
            sql = 'insert into tb_article_comment(article_id,comment_id,nick_name,logo_url,content_id,content,like_num,' \
                  'comment_time,create_time) values(%s,%s,%s,%s,%s,%s,%s,%s,%s)'
            return sql


    if __name__ == '__main__':
        biz = 'MzI2NDk5NzA0Mw=='  # "36氪"
        pass_ticket = 'NDndxxaZ7p6Z9PYulWpLqMbI0i3ULFeCPIHBFu1sf5pX2IhkGfyxZ6b9JieSYRUy'
        app_msg_token = '971_Z0lVNQBcGsWColSubRO9H13ZjrPhjuljyxLtiQ~~'
        cookie = 'wap_sid2=CO3YwOQHEogBQnN4VTNhNmxQWmc3UHI2U3kteWhUeVExZHFVMnN0QXlsbzVJRUJKc1pkdVFUU2Y5UzhSVEtOZmt1VVlYTkR4SEllQ2huejlTTThJWndMQzZfYUw2SldLVGVMQUthUjc3QWdVMUdoaGN0Nml2SU05cXR1dTN2RkhRUVd1V2Y3SFJ5d01BQUF+fjCB1pLcBTgNQJVO'
        # the values above must be refreshed with a packet capture tool for each account and each crawl
        wxMps = WxMps(biz, pass_ticket, app_msg_token, cookie)
        wxMps.start()  # start crawling articles and comments

Summary

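Finally, I checked the data that ended up in the database. A single-threaded crawl is slow, and I have no real need for this data, so this was only a quick experiment.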
