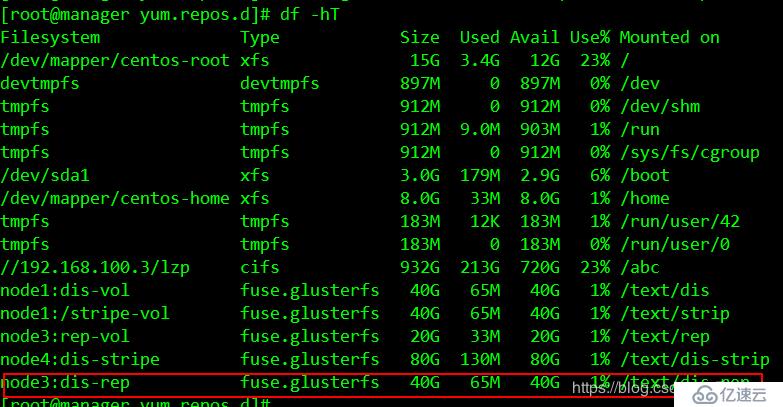

| Volume name | Volume type | Capacity | Bricks |
|---|---|---|---|
| dis-volume | Distributed volume | 40G | node1(/b1), node2(/b1) |
| stripe-volume | Striped volume | 40G | node1(/c1), node2(/c1) |
| rep-volume | Replicated volume | 20G | node3(/b1), node4(/b1) |
| dis-stripe | Distributed striped volume | 40G | node1(/d1), node2(/d1), node3(/d1), node4(/d1) |
| dis-rep | Distributed replicated volume | 20G | node1(/e1), node2(/e1), node3(/e1), node4(/e1) |

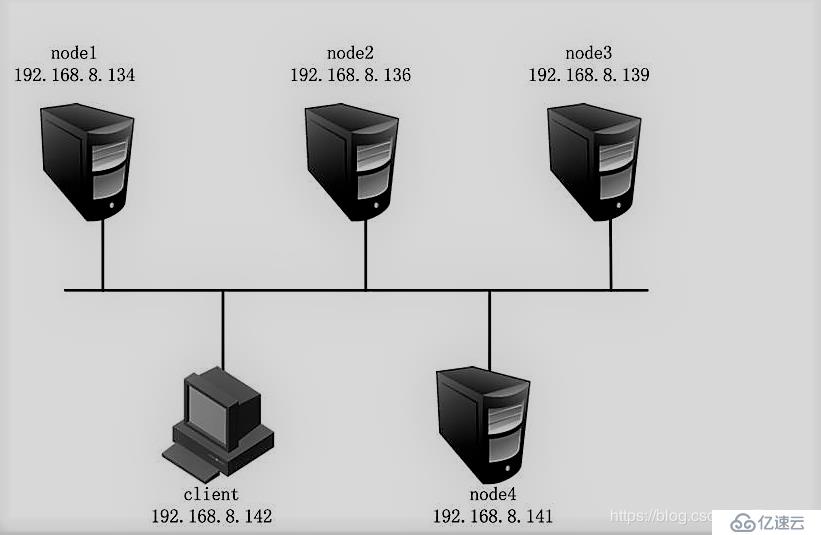

Set the hostnames of the four servers to node1, node2, node3, and node4, respectively.

[root@localhost ~]# hostnamectl set-hostname node1

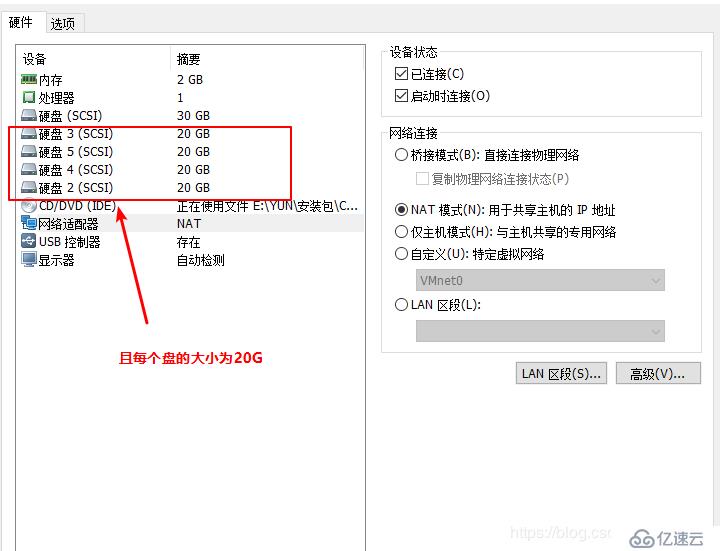

[root@localhost ~]# su

Here we use a script to partition, format, and mount the disks.

# Change to the /opt directory

[root@node1 ~]# cd /opt

# Disk formatting and mounting script

[root@node1 opt]# vim a.sh

#! /bin/bash

echo "the disks exist list:"

fdisk -l | grep -E '(Disk|磁盘) /dev/sd[a-z]'

echo "=================================================="

PS3="chose which disk you want to create:"

select VAR in `ls /dev/sd*|grep -o 'sd[b-z]'|uniq` quit

do

case $VAR in

sda)

fdisk -l /dev/sda

break ;;

sd[b-z])

#create partitions

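# answers fed to fdisk: n (new), p (primary), three empty lines (default partition number, first sector, last sector), w (write)

printf 'n\np\n\n\n\nw\n' | fdisk "/dev/$VAR"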

#make filesystem

mkfs.xfs -i size=512 /dev/${VAR}"1" &> /dev/null

#mount the system

mkdir -p /data/${VAR}"1" &> /dev/null

echo "/dev/${VAR}1 /data/${VAR}1 xfs defaults 0 0" >> /etc/fstab

mount -a &> /dev/null

break ;;

quit)

break;;

*)

echo "wrong disk,please check again";;

esac

done
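The script appends a line to /etc/fstab every time it runs, so running it twice against the same disk would leave a duplicate entry. A minimal sketch of an idempotent append follows. The helper name `add_fstab_entry` and the `FSTAB` variable are hypothetical, and the demo writes to a scratch file rather than the real /etc/fstab.

```shell
#!/bin/bash
# Hypothetical helper: append the fstab line for /dev/<disk>1 only if it is missing.
# FSTAB defaults to a scratch file so the sketch is safe to run as-is;
# point it at /etc/fstab for real use.
FSTAB="${FSTAB:-$(mktemp)}"

add_fstab_entry() {
    local disk="$1"
    local entry="/dev/${disk}1 /data/${disk}1 xfs defaults 0 0"
    # -x matches the whole line, -F treats the entry as a fixed string
    if ! grep -qxF "$entry" "$FSTAB"; then
        echo "$entry" >> "$FSTAB"
    fi
}

add_fstab_entry sdb
add_fstab_entry sdb   # the second call adds nothing
cat "$FSTAB"
```

The same check could replace the plain append inside the `sd[b-z])` branch of a.sh.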

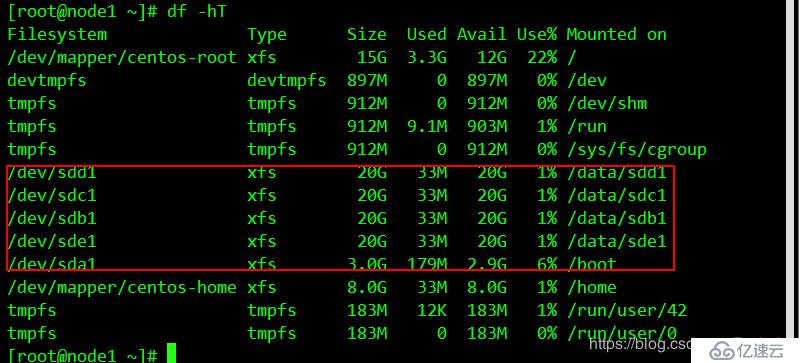

# Make the script executable

[root@node1 opt]# chmod +x a.sh

Push the script to the other three servers with scp:

scp a.sh root@192.168.45.134:/opt

scp a.sh root@192.168.45.130:/opt

scp a.sh root@192.168.45.136:/opt

The following run is only a sample:

[root@node1 opt]# ./a.sh

the disks exist list:

==================================================

1) sdb

2) sdc

3) sdd

4) sde

5) quit

chose which disk you want to create:1 // select the disk to format

Welcome to fdisk (util-linux 2.23.2).

Changes will remain in memory only, until you decide to write them.

Be careful before using the write command.

Device does not contain a recognized partition table

Building a new DOS disklabel with disk identifier 0x37029e96.

Command (m for help): Partition type:

p primary (0 primary, 0 extended, 4 free)

e extended

Select (default p): Partition number (1-4, default 1): First sector (2048-41943039, default 2048): Using default value 2048

Last sector, +sectors or +size{K,M,G} (2048-41943039, default 41943039): Using default value 41943039

Partition 1 of type Linux and of size 20 GiB is set

Command (m for help): The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

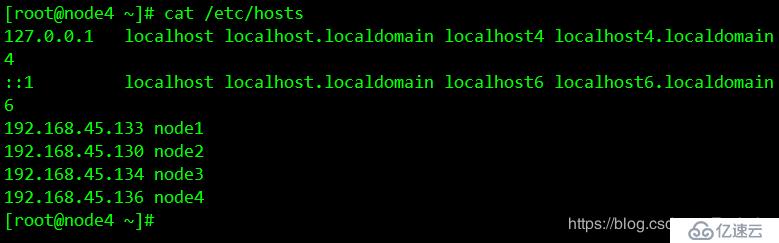

Edit the hosts file on the first server, node1:

# Append the following to the end of the file

vim /etc/hosts

192.168.45.133 node1

192.168.45.130 node2

192.168.45.134 node3

192.168.45.136 node4

Push the hosts file to the other servers and the client with scp:

# Copy /etc/hosts to the other hosts

[root@node1 opt]# scp /etc/hosts root@192.168.45.130:/etc/hosts

root@192.168.45.130's password:

hosts 100% 242 23.6KB/s 00:00

[root@node1 opt]# scp /etc/hosts root@192.168.45.134:/etc/hosts

root@192.168.45.134's password:

hosts 100% 242 146.0KB/s 00:00

[root@node1 opt]# scp /etc/hosts root@192.168.45.136:/etc/hosts

root@192.168.45.136's password:

hosts

Check on the other servers that the file was pushed.
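One way to do that check is to confirm that all four name mappings are present in the copied file. The sketch below assumes the four address and name pairs listed earlier. `check_hosts` is a hypothetical helper, and the demo runs against a scratch file instead of the real /etc/hosts.

```shell
#!/bin/bash
# Hypothetical check: confirm that every expected "IP name" pair is in a hosts file.
check_hosts() {
    local file="$1" missing=0 pair ip name
    for pair in 192.168.45.133:node1 192.168.45.130:node2 \
                192.168.45.134:node3 192.168.45.136:node4; do
        ip="${pair%%:*}"
        name="${pair##*:}"
        # Look for a line whose first field is the IP and whose second is the name
        if ! awk -v ip="$ip" -v name="$name" \
               '$1 == ip && $2 == name { found = 1 } END { exit !found }' "$file"; then
            echo "missing: $ip $name"
            missing=1
        fi
    done
    return "$missing"
}

# Demo against a scratch copy; on a real node pass /etc/hosts instead.
demo=$(mktemp)
printf '%s\n' '192.168.45.133 node1' '192.168.45.130 node2' \
              '192.168.45.134 node3' '192.168.45.136 node4' > "$demo"
check_hosts "$demo" && echo "all entries present"
```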

[root@node1 ~]# systemctl stop firewalld.service

[root@node1 ~]# setenforce 0

# Change to the yum repository directory

[root@node1 ~]# cd /etc/yum.repos.d/

# Create an empty directory

[root@node1 yum.repos.d]# mkdir abc

# Move all CentOS-* repo files into abc

[root@node1 yum.repos.d]# mv CentOS-* abc

# Create a private yum repository file

[root@node1 yum.repos.d]# vim GLFS.repo

[demo]

name=demo

baseurl=http://123.56.134.27/demo

gpgcheck=0

enabled=1

[gfsrepo]

name=gfsrepo

baseurl=http://123.56.134.27/gfsrepo

gpgcheck=0

enabled=1
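Since the same repository file is needed on all four servers, it may be easier to write it without opening an editor. The sketch below is one way to do that. `write_glfs_repo` is a hypothetical helper, the URLs are the ones from the listing above, and the option is spelled `enabled`, which is the name yum recognizes. The demo writes into a scratch directory rather than /etc/yum.repos.d.

```shell
#!/bin/bash
# Hypothetical helper: write GLFS.repo into the given directory non-interactively.
write_glfs_repo() {
    local dir="$1"
    cat > "${dir}/GLFS.repo" <<'EOF'
[demo]
name=demo
baseurl=http://123.56.134.27/demo
gpgcheck=0
enabled=1

[gfsrepo]
name=gfsrepo
baseurl=http://123.56.134.27/gfsrepo
gpgcheck=0
enabled=1
EOF
}

# Demo in a scratch directory; on a real node pass /etc/yum.repos.d instead.
demo_dir=$(mktemp -d)
write_glfs_repo "$demo_dir"
cat "$demo_dir/GLFS.repo"
```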

# Reload the yum repositories

[root@node1 yum.repos.d]# yum list

[root@node1 yum.repos.d]# yum -y install glusterfs glusterfs-server glusterfs-fuse glusterfs-rdma

Repeat the same steps on the other three servers.

[root@node1 yum.repos.d]# systemctl start glusterd.service

[root@node1 yum.repos.d]# systemctl enable glusterd.service

[root@node1 yum.repos.d]# gluster peer probe node2

peer probe: success.

[root@node1 yum.repos.d]# gluster peer probe node3

peer probe: success.

[root@node1 yum.repos.d]# gluster peer probe node4

peer probe: success.
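The three probes above can also be written as a loop. This is a minimal sketch with a dry-run switch. `probe_peers` and the `RUN` variable are hypothetical names, and by default the commands are only printed, not executed.

```shell
#!/bin/bash
# RUN=echo (the default here) only prints each command; set RUN= (empty) to execute them.
RUN="${RUN-echo}"

# Hypothetical helper: probe every node name passed as an argument,
# stopping at the first probe that fails.
probe_peers() {
    local node
    for node in "$@"; do
        $RUN gluster peer probe "$node" || return 1
    done
}

probe_peers node2 node3 node4
```

Running it as `RUN= ./probe.sh` (with the block saved under that hypothetical file name) would issue the real probes from node1.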

View the peer information (run the same command on the other servers too):

[root@node1 yum.repos.d]# gluster peer status

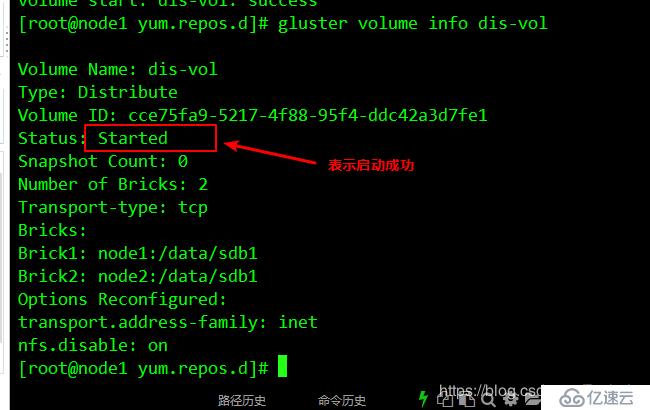

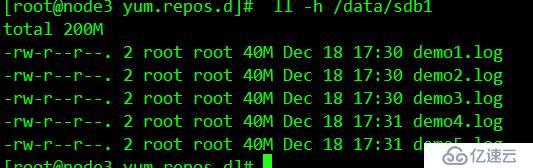

# Create the distributed volume

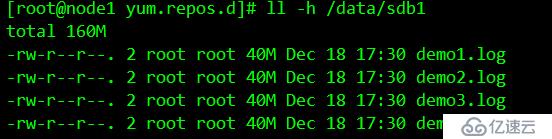

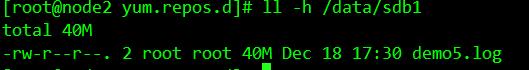

[root@node1 yum.repos.d]# gluster volume create dis-vol node1:/data/sdb1 node2:/data/sdb1 force

# Check the volume information

[root@node1 yum.repos.d]# gluster volume info dis-vol

# List the existing volumes

[root@node1 yum.repos.d]# gluster volume list

# Start the volume

[root@node1 yum.repos.d]# gluster volume start dis-vol

#递归创建挂载点

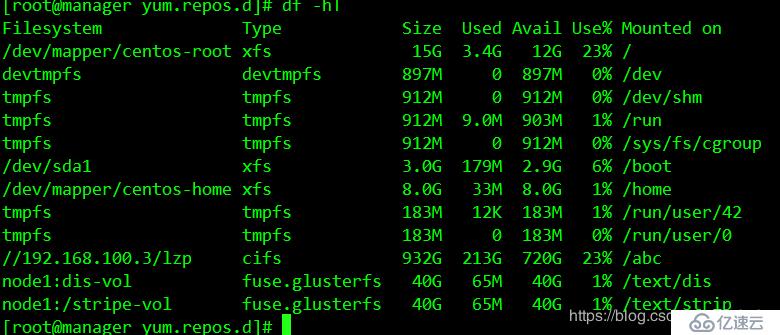

[root@manager yum.repos.d]# mkdir -p /text/dis

#将刚才创建的卷挂载到刚才创建的挂载点下

[root@manager yum.repos.d]# mount.glusterfs node1:dis-vol /text/dis
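A mount made this way does not survive a reboot of the client. If a persistent mount is wanted, an /etc/fstab entry of type `glusterfs` with the `_netdev` option is the usual approach. The sketch below only prints such a line. `glusterfs_fstab_line` is a hypothetical helper, and nothing is written to /etc/fstab.

```shell
#!/bin/bash
# Hypothetical helper: print an fstab line for a GlusterFS volume.
# _netdev delays the mount until the network is up.
glusterfs_fstab_line() {
    local server="$1" volume="$2" mountpoint="$3"
    echo "${server}:/${volume} ${mountpoint} glusterfs defaults,_netdev 0 0"
}

glusterfs_fstab_line node1 dis-vol /text/dis
```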

# Create the striped volume

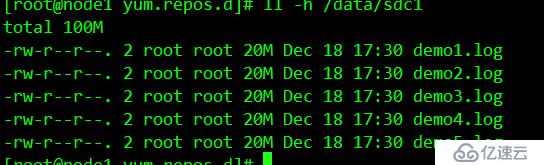

[root@node1 yum.repos.d]# gluster volume create stripe-vol stripe 2 node1:/data/sdc1 node2:/data/sdc1 force

# List the existing volumes

[root@node1 yum.repos.d]# gluster volume list

dis-vol

stripe-vol

# Start the striped volume

[root@node1 yum.repos.d]# gluster volume start stripe-vol

volume start: stripe-vol: success

# Create the mount point

[root@manager yum.repos.d]# mkdir /text/strip

# Mount the striped volume

[root@manager yum.repos.d]# mount.glusterfs node1:/stripe-vol /text/strip/

Check the mount:

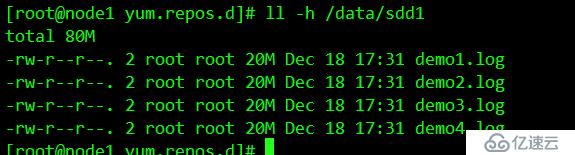

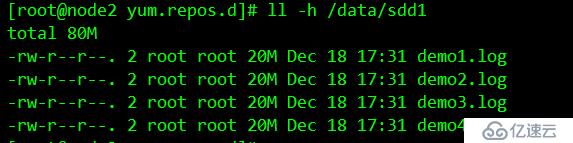

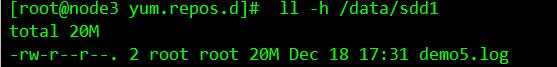

# Create the replicated volume

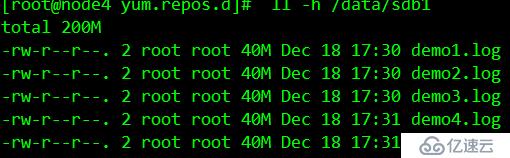

[root@node1 yum.repos.d]# gluster volume create rep-vol replica 2 node3:/data/sdb1 node4:/data/sdb1 force

volume create: rep-vol: success: please start the volume to access data

# Start the replicated volume

[root@node1 yum.repos.d]# gluster volume start rep-vol

volume start: rep-vol: success

Mount the replicated volume on the client:

[root@manager yum.repos.d]# mkdir /text/rep

[root@manager yum.repos.d]# mount.glusterfs node3:rep-vol /text/rep

Check the mount:

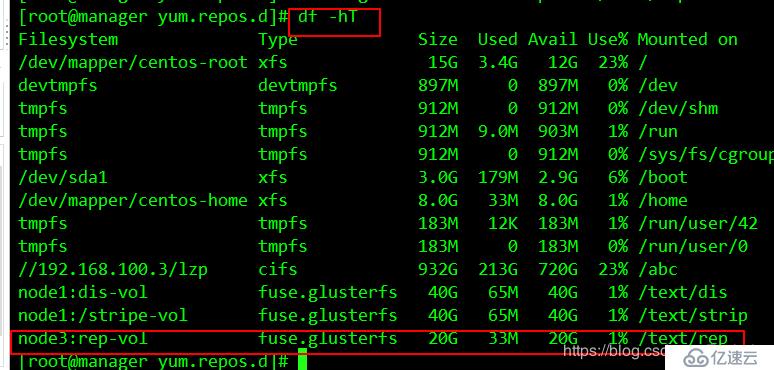

# Create the distributed striped volume

[root@node1 yum.repos.d]# gluster volume create dis-stripe stripe 2 node1:/data/sdd1 node2:/data/sdd1 node3:/data/sdd1 node4:/data/sdd1 force

volume create: dis-stripe: success: please start the volume to access data

# Start the distributed striped volume

[root@node1 yum.repos.d]# gluster volume start dis-stripe

volume start: dis-stripe: success

Mount it on the client:

[root@manager yum.repos.d]# mkdir /text/dis-strip

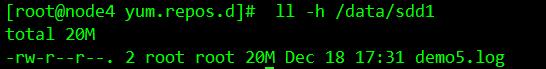

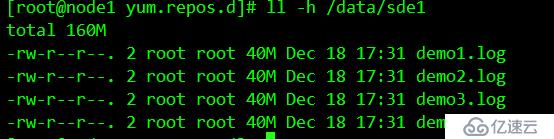

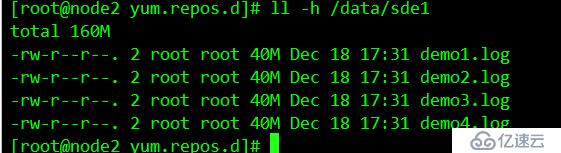

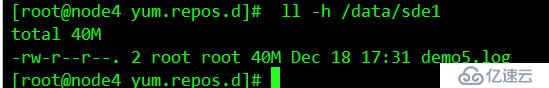

[root@manager yum.repos.d]# mount.glusterfs node4:dis-stripe /text/dis-strip/

# Create the distributed replicated volume

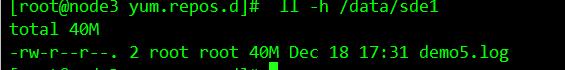

[root@node2 yum.repos.d]# gluster volume create dis-rep replica 2 node1:/data/sde1 node2:/data/sde1 node3:/data/sde1 node4:/data/sde1 force

volume create: dis-rep: success: please start the volume to access data

# Start the distributed replicated volume

[root@node2 yum.repos.d]# gluster volume start dis-rep

volume start: dis-rep: success
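With `replica 2` and four bricks, GlusterFS forms the replica sets from consecutive bricks in the order given on the command line, so the brick order decides which servers mirror each other. The sketch below only illustrates that grouping. `group_bricks` is a hypothetical helper and does not call gluster.

```shell
#!/bin/bash
# Print how a brick list is grouped into sets of <count> consecutive bricks.
# Usage: group_bricks <count> <brick>...
group_bricks() {
    local count="$1" set_no=1 line="" n=0 brick
    shift
    for brick in "$@"; do
        line="${line:+$line }$brick"
        n=$((n + 1))
        if [ "$n" -eq "$count" ]; then
            echo "set ${set_no}: ${line}"
            set_no=$((set_no + 1))
            line=""
            n=0
        fi
    done
}

group_bricks 2 node1:/data/sde1 node2:/data/sde1 node3:/data/sde1 node4:/data/sde1
```

For the command above this pairs node1 with node2 and node3 with node4, and files are then distributed across the two pairs.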

# List the existing volumes

[root@node2 yum.repos.d]# gluster volume list

dis-rep

dis-stripe

dis-vol

rep-vol

stripe-vol

[root@manager yum.repos.d]# mkdir /text/dis-rep

[root@manager yum.repos.d]# mount.glusterfs node3:dis-rep /text/dis-rep/

Check the mount:

------------------------ Volume creation and mounting are now complete ------------------------

[root@manager yum.repos.d]# dd if=/dev/zero of=/demo1.log bs=1M count=40

40+0 records in

40+0 records out

41943040 bytes (42 MB) copied, 0.0175819 s, 2.4 GB/s

[root@manager yum.repos.d]# dd if=/dev/zero of=/demo2.log bs=1M count=40

40+0 records in

40+0 records out

41943040 bytes (42 MB) copied, 0.269746 s, 155 MB/s

[root@manager yum.repos.d]# dd if=/dev/zero of=/demo3.log bs=1M count=40

40+0 records in

40+0 records out

41943040 bytes (42 MB) copied, 0.34134 s, 123 MB/s

[root@manager yum.repos.d]# dd if=/dev/zero of=/demo4.log bs=1M count=40

40+0 records in

40+0 records out

41943040 bytes (42 MB) copied, 1.55335 s, 27.0 MB/s

[root@manager yum.repos.d]# dd if=/dev/zero of=/demo5.log bs=1M count=40

40+0 records in

40+0 records out

41943040 bytes (42 MB) copied, 1.47974 s, 28.3 MB/s
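The five `dd` runs above differ only in the file name, so they can be written as a loop. This is a minimal sketch. `make_demo_files` is a hypothetical helper, and the demo writes small 1 MiB files into a scratch directory instead of 40 MiB files into `/`.

```shell
#!/bin/bash
# Create demo1.log .. demo5.log, each <size_mb> MiB of zeros, in <dir>.
make_demo_files() {
    local dir="$1" size_mb="${2:-40}" i
    for i in 1 2 3 4 5; do
        dd if=/dev/zero of="${dir}/demo${i}.log" bs=1M count="$size_mb" 2> /dev/null
    done
}

# Demo in a scratch directory with 1 MiB files; the walkthrough uses / and 40 MiB.
demo_dir=$(mktemp -d)
make_demo_files "$demo_dir" 1
ls "$demo_dir"
```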

[root@manager yum.repos.d]# cp /demo* /text/dis

[root@manager yum.repos.d]# cp /demo* /text/strip

[root@manager yum.repos.d]# cp /demo* /text/rep

[root@manager yum.repos.d]# cp /demo* /text/dis-strip

[root@manager yum.repos.d]# cp /demo* /text/dis-rep

[root@manager yum.repos.d]# ls /text/

dis dis-rep dis-strip rep strip

[root@manager yum.repos.d]# ls /text/dis

demo1.log demo2.log demo3.log demo4.log

[root@manager yum.repos.d]# ls /text/dis-rep

demo1.log demo2.log demo3.log demo4.log demo5.log

[root@manager yum.repos.d]# ls /text/dis-strip/

demo5.log

[root@manager yum.repos.d]# ls /text/rep/

demo1.log demo2.log demo3.log demo4.log demo5.log

[root@manager yum.repos.d]# ls /text/strip/

[root@manager yum.repos.d]#

The results show:

To delete a volume, stop it first. While the volume is being deleted, the nodes that make up the volume must be up and running.
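The stop-then-delete order can be sketched as a small helper. `remove_volume` and the `RUN` variable are hypothetical names. `--mode=script` is the gluster CLI switch that suppresses the interactive confirmation prompts. By default the sketch only prints the commands.

```shell
#!/bin/bash
# RUN=echo (the default here) only prints each command; set RUN= (empty) to execute them.
RUN="${RUN-echo}"

# Hypothetical helper: stop a volume and delete it only if the stop succeeded.
remove_volume() {
    local vol="$1"
    $RUN gluster --mode=script volume stop "$vol" &&
        $RUN gluster --mode=script volume delete "$vol"
}

remove_volume dis-vol
```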

# Stop the volume

[root@manager yum.repos.d]# gluster volume stop dis-vol

# Delete the volume

[root@manager yum.repos.d]# gluster volume delete dis-vol

# Deny only

[root@manager yum.repos.d]# gluster volume set dis-vol auth.reject 192.168.45.133

# Allow only

[root@manager yum.repos.d]# gluster volume set dis-vol auth.allow 192.168.45.133
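Both `auth.allow` and `auth.reject` accept a comma-separated list, so several clients can be covered in one setting. The sketch below builds such a list and prints the resulting command without running it. `join_addrs` is a hypothetical helper, and the second address is used purely as an illustration.

```shell
#!/bin/bash
# Join the given addresses with commas, the list format auth.allow and auth.reject accept.
join_addrs() {
    local IFS=,
    echo "$*"
}

# Dry run: print the command that would allow two addresses to mount dis-vol.
echo gluster volume set dis-vol auth.allow "$(join_addrs 192.168.45.133 192.168.45.130)"
```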
