这篇文章给大家分享的是有关scrapy怎么样测试python爬虫的数据的内容。小编觉得挺实用的,因此分享给大家做个参考。一起跟随小编过来看看吧。

进入到项目的根目录下,运行以下命令:

# 进入到项目目录 # cd /work/Code/scraper/TweetScraper scrapy crawl TweetScraper -a query="Novel coronavirus,#COVID-19"

注意,抓取Twitter的数据需要科学上网或者服务器部署在海外,所以使用的是海外的服务器。

[root@cs TweetScraper]# scrapy crawl TweetScraper -a query="Novel coronavirus,#COVID-19"

2020-04-16 19:22:40 [scrapy.utils.log] INFO: Scrapy 2.0.1 started (bot: TweetScraper)

2020-04-16 19:22:40 [scrapy.utils.log] INFO: Versions: lxml 4.2.1.0, libxml2 2.9.8, cssselect 1.1.0, parsel 1.5.2, w3lib 1.21.0, Twisted 20.3.0, Python 3.6.5 |Anaconda, Inc.| (default, Apr 29 2018, 16:14:56) - [GCC 7.2.0], pyOpenSSL 18.0.0 (OpenSSL 1.0.2o 27 Mar 2018), cryptography 2.2.2, Platform Linux-3.10.0-862.el7.x86_64-x86_64-with-centos-7.5.1804-Core

2020-04-16 19:22:40 [scrapy.crawler] INFO: Overridden settings:

{'BOT_NAME': 'TweetScraper',

'LOG_LEVEL': 'INFO',

'NEWSPIDER_MODULE': 'TweetScraper.spiders',

'SPIDER_MODULES': ['TweetScraper.spiders'],

'USER_AGENT': 'TweetScraper'}

2020-04-16 19:22:40 [scrapy.extensions.telnet] INFO: Telnet Password: 1fb55da389e595db

2020-04-16 19:22:40 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.memusage.MemoryUsage',

'scrapy.extensions.logstats.LogStats']

2020-04-16 19:22:41 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2020-04-16 19:22:41 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

Mysql连接成功###################################### MySQLCursorBuffered: (Nothing executed yet)

2020-04-16 19:22:41 [TweetScraper.pipelines] INFO: Table 'tweets' already exists

2020-04-16 19:22:41 [scrapy.middleware] INFO: Enabled item pipelines:

['TweetScraper.pipelines.SavetoMySQLPipeline']

2020-04-16 19:22:41 [scrapy.core.engine] INFO: Spider opened

2020-04-16 19:22:41 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2020-04-16 19:22:41 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2020-04-16 19:23:45 [scrapy.extensions.logstats] INFO: Crawled 1 pages (at 1 pages/min), scraped 11 items (at 11 items/min)

2020-04-16 19:24:44 [scrapy.extensions.logstats] INFO: Crawled 2 pages (at 1 pages/min), scraped 22 items (at 11 items/min)

^C2020-04-16 19:26:27 [scrapy.crawler] INFO: Received SIGINT, shutting down gracefully. Send again to force

2020-04-16 19:26:27 [scrapy.core.engine] INFO: Closing spider (shutdown)

2020-04-16 19:26:43 [scrapy.extensions.logstats] INFO: Crawled 3 pages (at 1 pages/min), scraped 44 items (at 11 items/min)

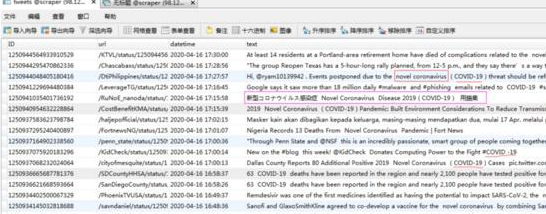

我们可以看到,该项目运行OK,抓取到的数据也已经被保存在数据库了。

感谢各位的阅读!关于scrapy怎么样测试python爬虫的数据就分享到这里了,希望以上内容可以对大家有一定的帮助,让大家可以学到更多知识。如果觉得文章不错,可以把它分享出去让更多的人看到吧!

免责声明:本站发布的内容(图片、视频和文字)以原创、转载和分享为主,文章观点不代表本网站立场,如果涉及侵权请联系站长邮箱:is@yisu.com进行举报,并提供相关证据,一经查实,将立刻删除涉嫌侵权内容。