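This article walks through deploying an MHA high-availability architecture for MySQL: what MHA is, how to build the one-master, two-slave replication cluster it manages, and how to test and recover from a failover.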

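MHA (Master High Availability) is a relatively mature high-availability solution for MySQL. Developed by Yoshinori Matsunobu in Japan, it automates failover and slave promotion in a MySQL replication environment. When the master fails, MHA can complete the failover automatically within 0 to 30 seconds, and during the switch it preserves data consistency as far as possible.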

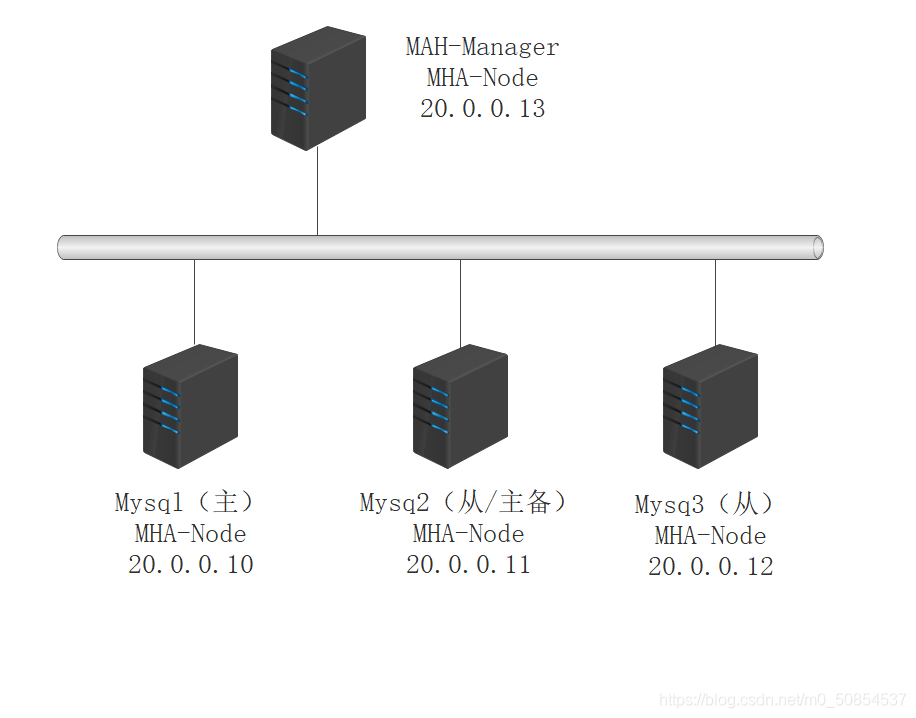

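MHA consists of two parts: MHA Manager (the management node) and MHA Node (the data node). MHA Manager can run on a dedicated machine and manage several master-slave clusters, or it can run on one of the slaves. When the master fails, it automatically promotes the slave with the most recent data to be the new master and then repoints all other slaves at the new master. The whole failover is transparent to applications.

MHA currently targets the one-master, multiple-slave topology. A replication cluster managed by MHA needs at least three database servers: one master, one standby master, and one ordinary slave. To reduce cost, Taobao adapted this design, and its TMHA variant supports one master with a single slave.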

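During a failover, MHA works through the following steps:

1. Save the binary log events (binlog events) from the crashed master;

2. Identify the slave that holds the most recent updates;

3. Apply the differential relay log events to the other slaves;

4. Apply the binary log events saved from the master;

5. Promote one slave to be the new master;

6. Point the other slaves at the new master and resume replication.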
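Step 2 can be illustrated with a small shell sketch. It assumes "most recent" means the highest (Master_Log_File, Read_Master_Log_Pos) pair reported by each slave, and that binlog file names share one zero-padded prefix so they sort as text. The function name `latest_slave` and the sample positions are hypothetical; this is not MHA's own code.

```shell
# Pick the slave that has read furthest into the master's binlog.
# stdin: one line per slave, "<host> <master_log_file> <read_master_log_pos>"
latest_slave() {
  # Order by binlog file name, then numerically by position; the last line wins.
  LC_ALL=C sort -k2,2 -k3,3n | tail -n 1 | awk '{print $1}'
}

# Example with made-up positions for the two slaves in this article:
printf '%s\n' \
  '20.0.0.11 master-bin.000001 608' \
  '20.0.0.12 master-bin.000001 500' | latest_slave
# prints 20.0.0.11
```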

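MHA ships as two packages: the Manager toolkit and the Node toolkit.

1: Manager toolkit

masterha_check_ssh: checks the MHA SSH configuration

masterha_check_repl: checks the MySQL replication status

masterha_manager: starts MHA

masterha_check_status: reports the current MHA running state

masterha_master_monitor: detects whether the master is down

masterha_master_switch: controls failover (automatic or manual)

masterha_conf_host: adds or removes server entries in the configuration

2: Node toolkit

These tools are normally triggered by MHA Manager scripts and need no manual operation.

save_binary_logs: saves and copies the master's binlog

apply_diff_relay_logs: identifies differential relay log events and applies them to the other slaves

filter_mysqlbinlog: strips unnecessary ROLLBACK events (deprecated)

purge_relay_logs: purges relay logs (without blocking the SQL thread)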

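During automatic failover, MHA tries to save the binary logs from the crashed master so that as little data as possible is lost.

Using semi-synchronous replication greatly reduces the risk of data loss.

MHA currently supports the one-master, multiple-slave architecture and needs at least three servers: one master and two slaves.

This walkthrough uses MySQL 5.6.36 and cmake 2.8.6.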

1: Install the build dependencies

[root@master ~]# yum -y install ncurses-devel gcc-c++ perl-Module-Install

2: Build and install cmake

[root@master ~]# tar zxvf cmake-2.8.6.tar.gz
[root@master ~]# cd cmake-2.8.6
[root@master cmake-2.8.6]# ./configure
[root@master cmake-2.8.6]# gmake && gmake install

3: Build and install MySQL (create the mysql user and group before changing ownership)

[root@master ~]# tar -zxvf mysql-5.6.36.tar.gz
[root@master ~]# cd mysql-5.6.36
[root@master mysql-5.6.36]# cmake -DCMAKE_INSTALL_PREFIX=/usr/local/mysql -DDEFAULT_CHARSET=utf8 -DDEFAULT_COLLATION=utf8_general_ci -DWITH_EXTRA_CHARSETS=all -DSYSCONFDIR=/etc
[root@master mysql-5.6.36]# make && make install
[root@master mysql-5.6.36]# cp support-files/my-default.cnf /etc/my.cnf
[root@master mysql-5.6.36]# cp support-files/mysql.server /etc/rc.d/init.d/mysqld
[root@master ~]# chmod +x /etc/rc.d/init.d/mysqld
[root@master ~]# chkconfig --add mysqld
[root@master ~]# echo "PATH=$PATH:/usr/local/mysql/bin" >> /etc/profile
[root@master ~]# source /etc/profile
[root@master ~]# groupadd mysql
[root@master ~]# useradd -M -s /sbin/nologin mysql -g mysql
[root@master ~]# chown -R mysql.mysql /usr/local/mysql
[root@master ~]# mkdir -p /data/mysql
[root@master ~]# /usr/local/mysql/scripts/mysql_install_db --basedir=/usr/local/mysql --datadir=/usr/local/mysql/data --user=mysql

4: Edit the main configuration file /etc/my.cnf on the master

Delete all of the existing settings and replace them with the following:

[root@master ~]# vi /etc/my.cnf
[client]
port = 3306
socket = /usr/local/mysql/mysql.sock

[mysql]
port = 3306
socket = /usr/local/mysql/mysql.sock

[mysqld]
user = mysql
basedir = /usr/local/mysql
datadir = /usr/local/mysql/data
port = 3306
pid-file = /usr/local/mysql/mysqld.pid
socket = /usr/local/mysql/mysql.sock
server-id = 1
log_bin = master-bin
log-slave-updates = true
sql_mode=NO_ENGINE_SUBSTITUTION,STRICT_TRANS_TABLES,NO_AUTO_CREATE_USER,NO_AUTO_VALUE_ON_ZERO,NO_ZERO_IN_DATE,NO_ZERO_DATE,ERROR_FOR_DIVISION_BY_ZERO,PIPES_AS_CONCAT,ANSI_QUOTES

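Configure the other two slave databases in the same way.

The server-id must be different on each of the three servers; the rest of the file is written as on the master. The replication settings for the two slaves are:

server-id = 2
log_bin = master-bin
relay-log = relay-log-bin
relay-log-index = slave-relay-bin.index

server-id = 3
log_bin = master-bin
relay-log = relay-log-bin
relay-log-index = slave-relay-bin.index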
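Because a duplicated server-id breaks replication, it can be worth checking the three files before starting MySQL. The sketch below is one way to do that, assuming each node's my.cnf has been copied to a local path; the function names `get_server_id` and `server_ids_unique` are hypothetical helpers, not part of MySQL or MHA.

```shell
# Print the first server-id value found in a my.cnf-style file.
get_server_id() {
  sed -n 's/^[[:space:]]*server[-_]id[[:space:]]*=[[:space:]]*\([0-9][0-9]*\).*/\1/p' "$1" | head -n 1
}

# Succeed only if all given files carry distinct server-id values.
# Files without a server-id line are ignored by this simple check.
server_ids_unique() {
  dup=$(for f in "$@"; do get_server_id "$f"; done | sort | uniq -d)
  [ -z "$dup" ]
}
```

For example, `server_ids_unique master.cnf slave1.cnf slave2.cnf && echo ok` would print ok for the values 1, 2 and 3 used above (the file names are placeholders).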

5: On all three database servers, create two symbolic links; MHA relies on them

[root@master ~]# ln -s /usr/local/mysql/bin/mysql /usr/sbin/
[root@master ~]# ln -s /usr/local/mysql/bin/mysqlbinlog /usr/sbin/

6: Start MySQL on all three database servers

[root@master ~]# /usr/local/mysql/bin/mysqld_safe --user=mysql &
[root@master ~]# service mysqld restart
Shutting down MySQL.. SUCCESS!
Starting MySQL. SUCCESS!

Log in to the database

[root@master ~]# mysql

1: On every database node, grant privileges to two users: one for slave replication and one for the manager

mysql> grant replication slave on *.* to 'myslave'@'20.0.0.%' identified by '123';
mysql> grant all privileges on *.* to 'mha'@'20.0.0.%' identified by 'manager';
mysql> flush privileges;

2: In theory the next three grants should not be needed. In this lab environment, however, the MHA replication check reported that the two slaves could not connect to the master by hostname, so add the following grants on all database nodes as well.

mysql> grant all privileges on *.* to 'mha'@'master' identified by 'manager';
mysql> grant all privileges on *.* to 'mha'@'slave1' identified by 'manager';
mysql> grant all privileges on *.* to 'mha'@'slave2' identified by 'manager';

3: On the master, check the binary log file and the replication position

mysql> show master status;
+-------------------+----------+--------------+------------------+-------------------+
| File              | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set |
+-------------------+----------+--------------+------------------+-------------------+
| master-bin.000001 |      608 |              |                  |                   |
+-------------------+----------+--------------+------------------+-------------------+
1 row in set (0.00 sec)

4: Start replication on slave1 and slave2, using the file name and position reported by the master

mysql> change master to master_host='20.0.0.10',master_user='myslave',master_password='123',master_log_file='master-bin.000001',master_log_pos=608;
mysql> start slave;
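The file name and position must match what the master reported, and copying them by hand is error-prone. As a rough sketch, the statement can be assembled from the master's output instead. This assumes the row arrives as whitespace-separated text, such as the output of `mysql -N -B -e 'show master status'`; the function name `change_master_sql` is hypothetical.

```shell
# Build a CHANGE MASTER statement from one "show master status" row.
# $1 master host, $2 replication user, $3 replication password
# stdin: "<binlog file> <position> ..." (extra columns are ignored)
change_master_sql() {
  read -r file pos _rest || true
  printf "change master to master_host='%s',master_user='%s',master_password='%s',master_log_file='%s',master_log_pos=%s;\n" \
    "$1" "$2" "$3" "$file" "$pos"
}

# Example using the coordinates shown in this article:
printf 'master-bin.000001\t608\n' | change_master_sql 20.0.0.10 myslave 123
```

The printed statement can then be reviewed and pasted into the mysql client on each slave.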

5: Check that both the IO and SQL threads report Yes, which means replication is working

mysql> show slave status\G
*************************** 1. row ***************************
Slave_IO_State: Waiting for master to send event
Master_Host: 20.0.0.10
Master_User: myslave
Master_Port: 3306
Connect_Retry: 60
Master_Log_File: master-bin.000001
Read_Master_Log_Pos: 608
Relay_Log_File: relay-log-bin.000002
Relay_Log_Pos: 284
Relay_Master_Log_File: master-bin.000001
Slave_IO_Running: Yes
Slave_SQL_Running: Yes
Replicate_Do_DB:
Replicate_Ignore_DB:
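The same check can be scripted rather than read by eye. The sketch below parses the vertical output of `show slave status\G` from standard input; the function name `slave_threads_ok` is a hypothetical helper, and it only inspects the two thread fields.

```shell
# Succeed only if both replication threads report "Yes".
# stdin: the text printed by "show slave status\G"
slave_threads_ok() {
  out=$(cat)
  io=$(printf '%s\n' "$out" | awk -F': ' '/Slave_IO_Running:/ {print $2}')
  sql=$(printf '%s\n' "$out" | awk -F': ' '/Slave_SQL_Running:/ {print $2}')
  [ "$io" = "Yes" ] && [ "$sql" = "Yes" ]
}
```

On a slave it could be used as `mysql -e 'show slave status\G' | slave_threads_ok && echo "replication OK"`.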

Both slaves must be set to read-only mode

mysql> set global read_only=1;

6: Insert test data on the master to verify replication

mysql> create database test_db;
Query OK, 1 row affected (0.00 sec)
mysql> use test_db;
Database changed
mysql> create table test(id int);
Query OK, 0 rows affected (0.13 sec)
mysql> insert into test(id) values (1);
Query OK, 1 row affected (0.03 sec)

7: Query both slaves; the result below shows that replication is working

mysql> select * from test_db.test;
+------+
| id   |
+------+
|    1 |
+------+
1 row in set (0.00 sec)

1: Install the packages MHA depends on on every server, starting with the epel repository (3+1)

[root@master ~]# cd /etc/yum.repos.d/
[root@master yum.repos.d]# ll
total 20
drwxr-xr-x. 2 root root  187 Oct 10 18:08 backup
-rw-r--r--. 1 root root 1458 Dec 28 23:07 CentOS7-Base-163.repo
-rw-r--r--. 1 root root  951 Dec 29 14:52 epel.repo
-rw-r--r--. 1 root root 1050 Nov  1 04:33 epel.repo.rpmnew
-rw-r--r--. 1 root root 1149 Nov  1 04:33 epel-testing.repo
-rw-r--r--. 1 root root  228 Oct 27 18:43 local.repo

Run the following on the three database servers and on the mha-manager host

[root@mha-manager ~]# yum install epel-release --nogpgcheck
[root@mha-manager ~]# yum install -y perl-DBD-MySQL perl-Config-Tiny perl-Log-Dispatch perl-Parallel-ForkManager perl-ExtUtils-CBuilder perl-ExtUtils-MakeMaker perl-CPAN

2: Install the node component first, on every server (3+1)

[root@mha-manager ~]# tar zxvf mha4mysql-node-0.57.tar.gz
[root@mha-manager ~]# cd mha4mysql-node-0.57
[root@mha-manager mha4mysql-node-0.57]# perl Makefile.PL
[root@mha-manager mha4mysql-node-0.57]# make && make install

3: Install the manager component on mha-manager

[root@mha-manager ~]# tar zxvf mha4mysql-manager-0.57.tar.gz
[root@mha-manager ~]# cd mha4mysql-manager-0.57/
[root@mha-manager mha4mysql-manager-0.57]# perl Makefile.PL
[root@mha-manager mha4mysql-manager-0.57]# make && make install

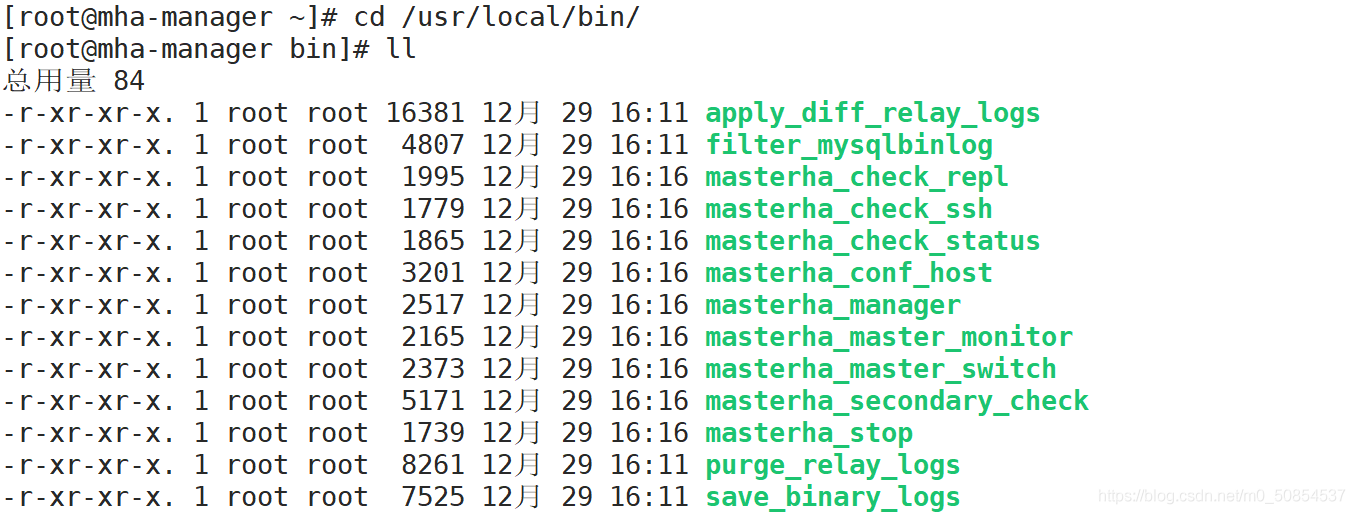

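After installation, the manager package places several tools under /usr/local/bin:

masterha_check_ssh: checks the MHA SSH configuration

masterha_check_repl: checks the MySQL replication status

masterha_manager: the script that starts the manager

masterha_check_status: reports the current MHA running state

masterha_master_monitor: detects whether the master is down

masterha_master_switch: controls failover (automatic or manual)

masterha_conf_host: adds or removes server entries in the configuration

masterha_stop: stops the manager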

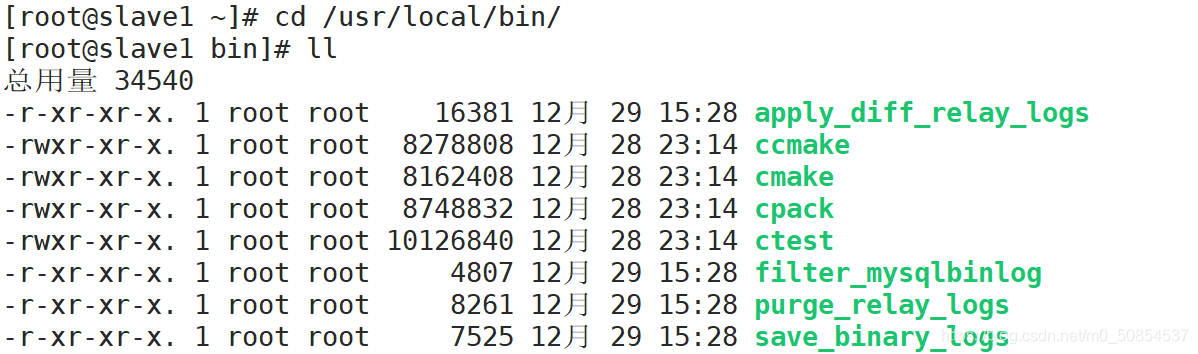

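After installation, the node package also places several scripts under /usr/local/bin:

save_binary_logs: saves and copies the master's binary logs

apply_diff_relay_logs: identifies differential relay log events and applies them to the other slaves

filter_mysqlbinlog: strips unnecessary ROLLBACK events (MHA no longer uses this tool)

purge_relay_logs: purges relay logs (without blocking the SQL thread)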

1: On the manager, configure passwordless SSH authentication to all nodes

(1) Generate a key pair

[root@mha-manager ~]# ssh-keygen -t rsa # press Enter at every prompt

(2) Send the public key to the three database servers

[root@mha-manager ~]# ssh-copy-id 20.0.0.10 # answer yes, then enter the password (123456 in this lab)
[root@mha-manager ~]# ssh-copy-id 20.0.0.11
[root@mha-manager ~]# ssh-copy-id 20.0.0.12

(3) Test the login

[root@mha-manager ~]# ssh root@20.0.0.10
Last login: Tue Dec 29 14:52:09 2020 from 20.0.0.1
[root@master ~]# exit
logout
Connection to 20.0.0.10 closed.
[root@mha-manager ~]# ssh root@20.0.0.11
Last login: Tue Dec 29 13:20:07 2020 from 20.0.0.1
[root@slave1 ~]# exit
logout
Connection to 20.0.0.11 closed.
[root@mha-manager ~]# ssh root@20.0.0.12
Last login: Tue Oct 27 19:45:24 2020 from 20.0.0.1
[root@slave2 ~]# exit
logout
Connection to 20.0.0.12 closed.

2: On master, configure passwordless SSH authentication to the other database nodes

(1) Generate a key pair

[root@master ~]# ssh-keygen -t rsa

(2) Send the public key to the other two database servers

[root@master ~]# ssh-copy-id 20.0.0.11
[root@master ~]# ssh-copy-id 20.0.0.12

(3) Test the login

[root@master ~]# ssh root@20.0.0.11
Last login: Tue Dec 29 16:40:06 2020 from 20.0.0.13
[root@slave1 ~]# exit
logout
Connection to 20.0.0.11 closed.
[root@master ~]# ssh root@20.0.0.12
Last login: Tue Oct 27 23:05:20 2020 from 20.0.0.13
[root@slave2 ~]# exit
logout
Connection to 20.0.0.12 closed.

3: On slave1, configure passwordless SSH authentication to the other database nodes

(1) Generate a key pair

[root@slave1 ~]# ssh-keygen -t rsa

(2) Send the public key to the other two database servers

[root@slave1 ~]# ssh-copy-id 20.0.0.10
[root@slave1 ~]# ssh-copy-id 20.0.0.12

(3) Test the login

[root@slave1 ~]# ssh root@20.0.0.10
Last login: Tue Dec 29 16:39:55 2020 from 20.0.0.13
[root@master ~]# exit
logout
Connection to 20.0.0.10 closed.
[root@slave1 ~]# ssh root@20.0.0.12
Last login: Tue Oct 27 23:14:06 2020 from 20.0.0.10
[root@slave2 ~]# exit
logout
Connection to 20.0.0.12 closed.

4: On slave2, configure passwordless SSH authentication to the other database nodes

(1) Generate a key pair

[root@slave2 ~]# ssh-keygen -t rsa

(2) Send the public key to the other two database servers

[root@slave2 ~]# ssh-copy-id 20.0.0.10
[root@slave2 ~]# ssh-copy-id 20.0.0.11

(3) Test the login

[root@slave2 ~]# ssh root@20.0.0.10
Last login: Tue Dec 29 16:59:43 2020 from 20.0.0.11
[root@master ~]# exit
logout
Connection to 20.0.0.10 closed.
[root@slave2 ~]# ssh root@20.0.0.11
Last login: Tue Dec 29 16:48:51 2020 from 20.0.0.10
[root@slave1 ~]# exit
logout
Connection to 20.0.0.11 closed.
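The four rounds above follow one pattern: the manager needs key-based access to every database node, and every database node needs it to every other database node. The sketch below only prints that plan as a checklist and runs nothing remotely. The function name `ssh_copy_plan` is hypothetical, and the manager address 20.0.0.13 is an assumption inferred from the "Last login ... from 20.0.0.13" lines.

```shell
# Print which ssh-copy-id commands must be run on which host.
# $1 is the manager; the remaining arguments are the database nodes.
ssh_copy_plan() {
  manager=$1
  shift
  # The manager pushes its key to every database node.
  for dst in "$@"; do
    printf '%s: ssh-copy-id %s\n' "$manager" "$dst"
  done
  # Every database node pushes its key to every other database node.
  for src in "$@"; do
    for dst in "$@"; do
      if [ "$src" != "$dst" ]; then
        printf '%s: ssh-copy-id %s\n' "$src" "$dst"
      fi
    done
  done
}

ssh_copy_plan 20.0.0.13 20.0.0.10 20.0.0.11 20.0.0.12
```

For one manager and three database nodes this lists nine commands: three from the manager and six between the database nodes, matching the steps carried out by hand above.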

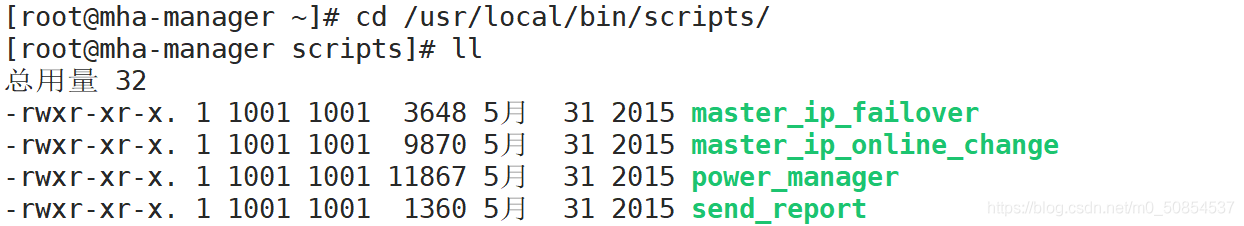

1: On the manager node, copy the sample scripts to /usr/local/bin

(1) Copy

[root@mha-manager ~]# cp -ra /root/mha4mysql-manager-0.57/samples/scripts/ /usr/local/bin/

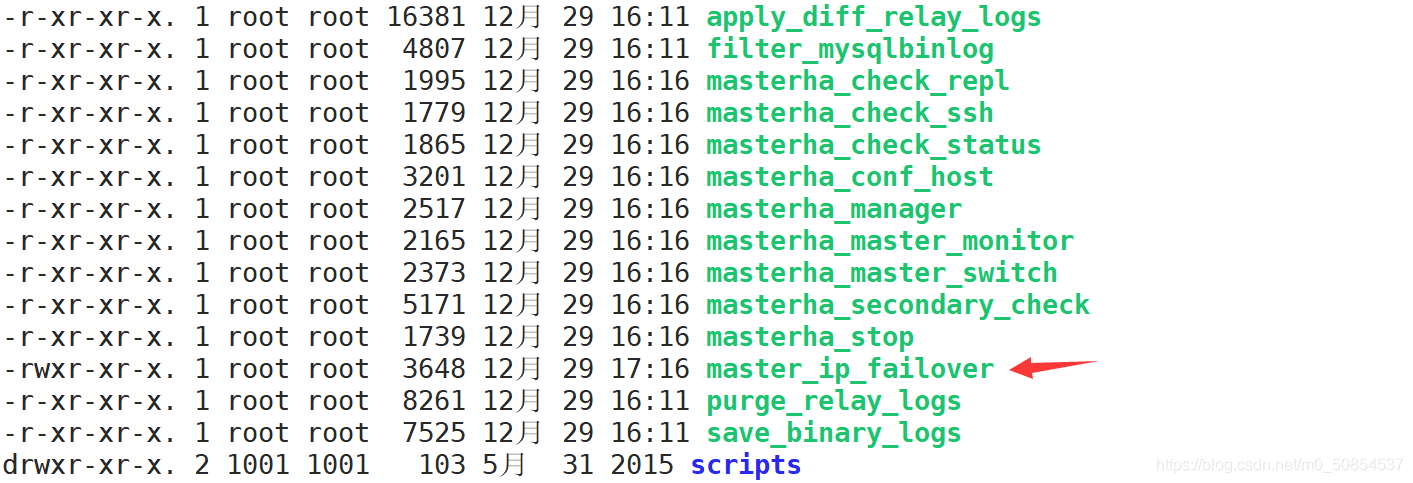

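(2) The copied directory contains four executable files

master_ip_failover # manages the VIP during automatic failover

master_ip_online_change # manages the VIP during an online switch

power_manager # shuts down the host after a failure

send_report # sends an alert after a failover

(3) Copy the automatic-failover VIP script listed above into /usr/local/bin; this setup uses a script to manage the VIP

[root@mha-manager scripts]# cp master_ip_failover /usr/local/bin/
[root@mha-manager scripts]# cd ..
[root@mha-manager bin]# ll
total 88

2: Edit the automatic failover script

[root@mha-manager ~]# vi /usr/local/bin/master_ip_failover # delete the existing contents and replace them with the script below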

#!/usr/bin/env perl

use strict;

use warnings FATAL => 'all';

use Getopt::Long;

my (

$command, $ssh_user, $orig_master_host, $orig_master_ip,

$orig_master_port, $new_master_host, $new_master_ip, $new_master_port

);

############################# Added section #########################################

my $vip = '20.0.0.200';

my $brdc = '20.0.0.255';

my $ifdev = 'ens33';

my $key = '1';

my $ssh_start_vip = "/sbin/ifconfig ens33:$key $vip";

my $ssh_stop_vip = "/sbin/ifconfig ens33:$key down";

my $exit_code = 0;

#my $ssh_start_vip = "/usr/sbin/ip addr add $vip/24 brd $brdc dev $ifdev label $ifdev:$key;/usr/sbin/arping -q -A -c 1 -I $ifdev $vip;iptables -F;";

#my $ssh_stop_vip = "/usr/sbin/ip addr del $vip/24 dev $ifdev label $ifdev:$key";

##################################################################################

GetOptions(

'command=s' => \$command,

'ssh_user=s' => \$ssh_user,

'orig_master_host=s' => \$orig_master_host,

'orig_master_ip=s' => \$orig_master_ip,

'orig_master_port=i' => \$orig_master_port,

'new_master_host=s' => \$new_master_host,

'new_master_ip=s' => \$new_master_ip,

'new_master_port=i' => \$new_master_port,

);

exit &main();

sub main {

print "\n\nIN SCRIPT TEST====$ssh_stop_vip==$ssh_start_vip===\n\n";

if ( $command eq "stop" || $command eq "stopssh" ) {

my $exit_code = 1;

eval {

print "Disabling the VIP on old master: $orig_master_host \n";

&stop_vip();

$exit_code = 0;

};

if ($@) {

warn "Got Error: $@\n";

exit $exit_code;

}

exit $exit_code;

}

elsif ( $command eq "start" ) {

my $exit_code = 10;

eval {

print "Enabling the VIP - $vip on the new master - $new_master_host \n";

&start_vip();

$exit_code = 0;

};

if ($@) {

warn $@;

exit $exit_code;

}

exit $exit_code;

}

elsif ( $command eq "status" ) {

print "Checking the Status of the script.. OK \n";

exit 0;

}

else {

&usage();

exit 1;

}

}

sub start_vip() {

`ssh $ssh_user\@$new_master_host \" $ssh_start_vip \"`;

}

# A simple system call that disable the VIP on the old_master

sub stop_vip() {

`ssh $ssh_user\@$orig_master_host \" $ssh_stop_vip \"`;

}

sub usage {

print

"Usage: master_ip_failover --command=start|stop|stopssh|status --orig_master_host=host --orig_master_ip=ip --orig_master_port=port --new_master_host=host --new_master_ip=ip --new_master_port=port\n";

}

3: Create the MHA configuration directory and copy the configuration file

[root@mha-manager ~]# mkdir /etc/mha
[root@mha-manager ~]# cp mha4mysql-manager-0.57/samples/conf/app1.cnf /etc/mha
[root@mha-manager ~]# vi /etc/mha/app1.cnf
[server default]
manager_workdir=/var/log/masterha/app1
manager_log=/var/log/masterha/app1/manager.log
master_binlog_dir=/usr/local/mysql/data
master_ip_failover_script=/usr/local/bin/master_ip_failover
master_ip_online_change_script=/usr/local/bin/master_ip_online_change
password=manager
user=mha
ping_interval=1
remote_workdir=/tmp
repl_password=123
repl_user=myslave
secondary_check_script=/usr/local/bin/masterha_secondary_check -s 20.0.0.11 -s 20.0.0.12
shutdown_script=""
ssh_user=root

[server1]
hostname=20.0.0.10
port=3306

[server2]
hostname=20.0.0.11
port=3306
candidate_master=1
check_repl_delay=0

[server3]
hostname=20.0.0.12
port=3306
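Before running the MHA checks, it can help to list which hosts the configuration actually names, for instance to compare them with the nodes that received SSH keys. A minimal sketch, assuming the `key=value` layout shown above; the function name `mha_hosts` is hypothetical and not an MHA tool.

```shell
# Print every hostname= value from an MHA application config, one per line.
mha_hosts() {
  sed -n 's/^[[:space:]]*hostname[[:space:]]*=[[:space:]]*\([^[:space:]]*\).*/\1/p' "$1"
}
```

Running `mha_hosts /etc/mha/app1.cnf` against the file above would list 20.0.0.10, 20.0.0.11 and 20.0.0.12.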

1: Test passwordless SSH authentication; if everything works, the output ends with successfully

[root@mha-manager ~]# masterha_check_ssh
--conf=<server_config_file> must be set.
[root@mha-manager ~]# masterha_check_ssh --conf=/etc/mha/app1.cnf
Tue Dec 29 20:19:16 2020 - [warning] Global configuration file /etc/masterha_default.cnf not found. Skipping.
Tue Dec 29 20:19:16 2020 - [info] Reading application default configuration from /etc/mha/app1.cnf..
Tue Dec 29 20:19:16 2020 - [info] Reading server configuration from /etc/mha/app1.cnf..
Tue Dec 29 20:19:16 2020 - [info] Starting SSH connection tests..
Tue Dec 29 20:19:17 2020 - [debug]
Tue Dec 29 20:19:16 2020 - [debug] Connecting via SSH from root@20.0.0.10(20.0.0.10:22) to root@20.0.0.11(20.0.0.11:22)..
Tue Dec 29 20:19:16 2020 - [debug] ok.
Tue Dec 29 20:19:16 2020 - [debug] Connecting via SSH from root@20.0.0.10(20.0.0.10:22) to root@20.0.0.12(20.0.0.12:22)..
Tue Dec 29 20:19:17 2020 - [debug] ok.
Tue Dec 29 20:19:18 2020 - [debug]
Tue Dec 29 20:19:17 2020 - [debug] Connecting via SSH from root@20.0.0.12(20.0.0.12:22) to root@20.0.0.10(20.0.0.10:22)..
Tue Dec 29 20:19:17 2020 - [debug] ok.
Tue Dec 29 20:19:17 2020 - [debug] Connecting via SSH from root@20.0.0.12(20.0.0.12:22) to root@20.0.0.11(20.0.0.11:22)..
Tue Dec 29 20:19:18 2020 - [debug] ok.
Tue Dec 29 20:19:18 2020 - [debug]
Tue Dec 29 20:19:16 2020 - [debug] Connecting via SSH from root@20.0.0.11(20.0.0.11:22) to root@20.0.0.10(20.0.0.10:22)..
Tue Dec 29 20:19:17 2020 - [debug] ok.
Tue Dec 29 20:19:17 2020 - [debug] Connecting via SSH from root@20.0.0.11(20.0.0.11:22) to root@20.0.0.12(20.0.0.12:22)..
Tue Dec 29 20:19:17 2020 - [debug] ok.
Tue Dec 29 20:19:18 2020 - [info] All SSH connection tests passed successfully.

2: Test the MySQL replication setup; the check has passed when the output ends with MySQL Replication Health is OK

[root@mha-manager ~]# masterha_check_repl --conf=/etc/mha/app1.cnf
Tue Dec 29 20:30:29 2020 - [warning] Global configuration file /etc/masterha_default.cnf not found. Skipping.
Tue Dec 29 20:30:29 2020 - [info] Reading application default configuration from /etc/mha/app1.cnf..
Tue Dec 29 20:30:29 2020 - [info] Reading server configuration from /etc/mha/app1.cnf..
Tue Dec 29 20:30:29 2020 - [info] MHA::MasterMonitor version 0.57.
Tue Dec 29 20:30:30 2020 - [info] GTID failover mode = 0
Tue Dec 29 20:30:30 2020 - [info] Dead Servers:
Tue Dec 29 20:30:30 2020 - [info] Alive Servers:
Tue Dec 29 20:30:30 2020 - [info] 20.0.0.10(20.0.0.10:3306)
Tue Dec 29 20:30:30 2020 - [info] 20.0.0.11(20.0.0.11:3306)
Tue Dec 29 20:30:30 2020 - [info] 20.0.0.12(20.0.0.12:3306)
Tue Dec 29 20:30:30 2020 - [info] Alive Slaves:
Tue Dec 29 20:30:30 2020 - [info] 20.0.0.11(20.0.0.11:3306) Version=5.6.36-log (oldest major version between slaves) log-bin:enabled
....... (output omitted)
Checking the Status of the script.. OK
Tue Dec 29 20:30:55 2020 - [info] OK.
Tue Dec 29 20:30:55 2020 - [warning] shutdown_script is not defined.
Tue Dec 29 20:30:55 2020 - [info] Got exit code 0 (Not master dead).
MySQL Replication Health is OK.

Check whether the VIP 20.0.0.200 is present

The VIP does not disappear when the MHA service on the manager node stops.

The first time MHA starts, the VIP is not created on the master automatically; bring it up by hand:

[root@master ~]# ifconfig ens33:1 20.0.0.200/24 up
[root@master ~]# ip addr
2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
    link/ether 00:0c:29:8d:e2:af brd ff:ff:ff:ff:ff:ff
    inet 20.0.0.10/24 brd 20.0.0.255 scope global ens33
       valid_lft forever preferred_lft forever
    inet 20.0.0.200/24 brd 20.0.0.255 scope global secondary ens33:1
       valid_lft forever preferred_lft forever
    inet6 fe80::a6c1:f3d4:160:102a/64 scope link
       valid_lft forever preferred_lft forever

Start the MHA manager in the background and check its status:

[root@mha-manager ~]# nohup masterha_manager --conf=/etc/mha/app1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null > /var/log/masterha/app1/manager.log 2>&1 &
[1] 57152
[root@mha-manager ~]# masterha_check_status --conf=/etc/mha/app1.cnf
app1 (pid:57152) is running(0:PING_OK), master:20.0.0.10
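For monitoring scripts, the status line can be reduced to just the current master. The sketch below matches the exact "is running(0:PING_OK), master:" wording shown in this article's output; the function name `current_master` is hypothetical, and any other status line yields an empty result.

```shell
# Extract the master address from a masterha_check_status line.
# stdin: the command's output; prints nothing unless the manager reports PING_OK.
current_master() {
  sed -n 's/.*is running(0:PING_OK), master:\(.*\)$/\1/p'
}

# Example using the status line from this article:
echo 'app1 (pid:57152) is running(0:PING_OK), master:20.0.0.10' | current_master
# prints 20.0.0.10
```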

1: Take the master server down

[root@master ~]# pkill mysqld

2: Check the log

[root@mha-manager ~]# cat /var/log/masterha/app1/manager.log
master 20.0.0.10(20.0.0.10:3306) is down!    # 20.0.0.10 has stopped
Check MHA Manager logs at mha-manager:/var/log/masterha/app1/manager.log for details.
Started automated(non-interactive) failover.
Invalidated master IP address on 20.0.0.10(20.0.0.10:3306)
The latest slave 20.0.0.11(20.0.0.11:3306) has all relay logs for recovery.
Selected 20.0.0.11(20.0.0.11:3306) as a new master.    # 20.0.0.11 becomes the master
20.0.0.11(20.0.0.11:3306): OK: Applying all logs succeeded.
20.0.0.11(20.0.0.11:3306): OK: Activated master IP address.
20.0.0.12(20.0.0.12:3306): This host has the latest relay log events.
Generating relay diff files from the latest slave succeeded.
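The promoted host can also be pulled out of the log with a one-line filter. This is a sketch that relies on the "Selected ... as a new master." wording shown in the log above; the function name `new_master_from_log` is hypothetical.

```shell
# Print the host most recently selected as the new master.
# stdin: the contents of manager.log
new_master_from_log() {
  sed -n 's/.*Selected \([^(]*\)(.*) as a new master.*/\1/p' | tail -n 1
}

# Example using the log line from this article:
echo 'Selected 20.0.0.11(20.0.0.11:3306) as a new master.' | new_master_from_log
# prints 20.0.0.11
```

On the manager it could be fed with `new_master_from_log < /var/log/masterha/app1/manager.log`.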

3: Check the virtual IP address

The VIP has moved to 20.0.0.11

[root@slave1 ~]# ip addr
2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
    link/ether 00:0c:29:49:77:39 brd ff:ff:ff:ff:ff:ff
    inet 20.0.0.11/24 brd 20.0.0.255 scope global ens33
       valid_lft forever preferred_lft forever
    inet 20.0.0.200/8 brd 20.255.255.255 scope global ens33:1
       valid_lft forever preferred_lft forever
    inet6 fe80::5cbb:1621:4281:3b24/64 scope link
       valid_lft forever preferred_lft forever

4: Check the replication status

Check the binary log file on the new master

[root@slave1 ~]# mysql
mysql> show master status;
+-------------------+----------+--------------+------------------+-------------------+
| File              | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set |
+-------------------+----------+--------------+------------------+-------------------+
| master-bin.000003 |      120 |              |                  |                   |
+-------------------+----------+--------------+------------------+-------------------+
1 row in set (0.00 sec)

Check the status of slave2

[root@slave2 ~]# mysql
mysql> show slave status\G
*************************** 1. row ***************************
Slave_IO_State: Waiting for master to send event
Master_Host: 20.0.0.11
Master_User: myslave
Master_Port: 3306
Connect_Retry: 60
Master_Log_File: master-bin.000003
Read_Master_Log_Pos: 120
Relay_Log_File: relay-log-bin.000002
Relay_Log_Pos: 284
Relay_Master_Log_File: master-bin.000003
Slave_IO_Running: Yes
Slave_SQL_Running: Yes
Replicate_Do_DB:
Replicate_Ignore_DB:

1: Start the database that was taken down

[root@master ~]# systemctl start mysqld
[root@master ~]# systemctl status mysqld
● mysqld.service - LSB: start and stop MySQL
   Loaded: loaded (/etc/rc.d/init.d/mysqld; bad; vendor preset: disabled)
   Active: active (running) since Tue 2020-12-29 21:50:03 CST; 25s ago
     Docs: man:systemd-sysv-generator(8)
  Process: 977 ExecStart=/etc/rc.d/init.d/mysqld start (code=exited, status=0/SUCCESS)
   CGroup: /system.slice/mysqld.service
           ├─1026 /bin/sh /usr/local/mysql/bin/mysqld_safe --datadir=/usr/local/mysql/data --pid-fi...
           └─1358 /usr/local/mysql/bin/mysqld --basedir=/usr/local/mysql --datadir=/usr/local/m

2: Set up replication on the recovered database

Point the recovered server at 20.0.0.11, which became the master after the original master went down:

[root@master ~]# mysql
mysql> change master to master_host='20.0.0.11',master_user='myslave',master_password='123',master_log_file='master-bin.000003',master_log_pos=120;
Query OK, 0 rows affected, 2 warnings (0.01 sec)
mysql> start slave;
Query OK, 0 rows affected (0.01 sec)

Check the status

mysql> show slave status\G
*************************** 1. row ***************************
Slave_IO_State: Waiting for master to send event
Master_Host: 20.0.0.11
Master_User: myslave
Master_Port: 3306
Connect_Retry: 60
Master_Log_File: master-bin.000003
Read_Master_Log_Pos: 120
Relay_Log_File: mysqld-relay-bin.000002
Relay_Log_Pos: 284
Relay_Master_Log_File: master-bin.000003
Slave_IO_Running: Yes
Slave_SQL_Running: Yes
Replicate_Do_DB:
Replicate_Ignore_DB:

3: Update the MHA configuration file

[root@mha-manager ~]# vi /etc/mha/app1.cnf
# 20.0.0.11 is now the master, so list 20.0.0.10 and 20.0.0.12 as the slaves
secondary_check_script=/usr/local/bin/masterha_secondary_check -s 20.0.0.10 -s 20.0.0.12

# The server1 section was removed automatically when 20.0.0.10 went down.
# Add server1 back as the candidate master and adjust server2.
[server1]
hostname=20.0.0.10
candidate_master=1
check_repl_delay=0
port=3306

[server2]
hostname=20.0.0.11
port=3306

4: Log in to the database and grant the privileges again

[root@master ~]# mysql
mysql> grant all privileges on *.* to 'mha'@'master' identified by 'manager';
Query OK, 0 rows affected (0.00 sec)
mysql> flush privileges;
Query OK, 0 rows affected (0.00 sec)

5: Start MHA again

[root@mha-manager ~]# nohup masterha_manager --conf=/etc/mha/app1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null > /var/log/masterha/app1/manager.log 2>&1 &
[1] 58927
[root@mha-manager ~]# masterha_check_status --conf=/etc/mha/app1.cnf
app1 (pid:58927) is running(0:PING_OK), master:20.0.0.11

6: Check the log again

[root@mha-manager ~]# cat /var/log/masterha/app1/manager.log
......
Tue Dec 29 22:16:53 2020 - [info] Dead Servers:    # servers that are down
Tue Dec 29 22:16:53 2020 - [info] Alive Servers:    # servers that are running
Tue Dec 29 22:16:53 2020 - [info] 20.0.0.10(20.0.0.10:3306)
Tue Dec 29 22:16:53 2020 - [info] 20.0.0.11(20.0.0.11:3306)
Tue Dec 29 22:16:53 2020 - [info] 20.0.0.12(20.0.0.12:3306)
.......

7: Write data on the master and check that it replicates

The new database is visible on the other servers as well

mysql> create database ooo;
Query OK, 1 row affected (0.00 sec)
mysql> show databases;
+--------------------+
| Database           |
+--------------------+
| information_schema |
| mysql              |
| ooo                |
| performance_schema |
| test               |
| test_db            |
+--------------------+
6 rows in set (0.00 sec)
