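Hive is the foundational data-warehouse component of the big data technology stack and the baseline against which similar data-warehouse tools are compared. Basic data operations can be scripted through the Hive client. To develop an application, however, you need to connect through Hive's JDBC driver. This article builds on the examples from the Hive wiki and walks through connecting to a Hive database over JDBC. The original Hive wiki pages are: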

https://cwiki.apache.org/confluence/display/Hive/HiveClient

https://cwiki.apache.org/confluence/display/Hive/HiveServer2+Clients#HiveServer2Clients-JDBC

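First, Hive must be running as a service. Our platform is HDP, and HDP 2.2 starts Hive in HiveServer2 mode by default. HiveServer2 is a more advanced service mode than HiveServer and provides features that HiveServer lacks, such as concurrency control and security mechanisms. The client code differs slightly depending on which mode the server was started in; see the code for details.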
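The difference between the two server modes comes down to the driver class and the JDBC URL scheme. A minimal sketch of that mapping follows; the class name `HiveEndpoint` and the mode labels `"hiveserver"` and `"hiveserver2"` are hypothetical names chosen for illustration, while the driver class names and URL prefixes are the ones used in this article's client code.

```java
import java.util.Locale;

/** Sketch: pairs each Hive server mode with its driver class and JDBC URL. */
final class HiveEndpoint {
    final String driverClass;
    final String url;

    private HiveEndpoint(String driverClass, String url) {
        this.driverClass = driverClass;
        this.url = url;
    }

    /** serverMode is "hiveserver" or "hiveserver2" (hypothetical labels). */
    static HiveEndpoint of(String serverMode, String host, int port, String database) {
        String location = host + ":" + port + "/" + database;
        switch (serverMode.toLowerCase(Locale.ROOT)) {
            case "hiveserver":
                return new HiveEndpoint(
                        "org.apache.hadoop.hive.jdbc.HiveDriver", "jdbc:hive://" + location);
            case "hiveserver2":
                return new HiveEndpoint(
                        "org.apache.hive.jdbc.HiveDriver", "jdbc:hive2://" + location);
            default:
                throw new IllegalArgumentException("Unknown server mode: " + serverMode);
        }
    }

    public static void main(String[] args) {
        HiveEndpoint endpoint = HiveEndpoint.of("hiveserver2", "hostip", 10000, "default");
        System.out.println(endpoint.driverClass);
        System.out.println(endpoint.url);
    }
}
```

Keeping the driver class and the URL scheme in one place makes it harder to mix a HiveServer driver with a HiveServer2 URL by accident.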

Once the service is up, write the code in Eclipse. The code is as follows:

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

public class HiveJdbcClient {
    /* Use this driver with HiveServer */
    //private static String driverName = "org.apache.hadoop.hive.jdbc.HiveDriver";
    /* Use this driver with HiveServer2 */
    private static String driverName = "org.apache.hive.jdbc.HiveDriver";

    public static void main(String[] args) throws SQLException {
        try {
            Class.forName(driverName);
        } catch (ClassNotFoundException e) {
            e.printStackTrace();
            System.exit(1);
        }

        /* JDBC URL format for HiveServer */
        //Connection con = DriverManager.getConnection("jdbc:hive://hostip:10000/default", "", "");
        /* JDBC URL format for HiveServer2 */
        Connection con = DriverManager.getConnection("jdbc:hive2://hostip:10000/default", "hive", "hive");
        Statement stmt = con.createStatement();

        // Test setting a parameter
        //boolean resHivePropertyTest = stmt.execute("SET tez.runtime.io.sort.mb = 128");
        boolean resHivePropertyTest = stmt.execute("set hive.execution.engine=tez");
        System.out.println(resHivePropertyTest);

        String tableName = "testHiveDriverTable";
        // Statements that return no result set go through execute();
        // the HiveServer2 driver may reject them in executeQuery().
        stmt.execute("drop table if exists " + tableName);
        stmt.execute("create table " + tableName + " (key int, value string)");

        // show tables
        String sql = "show tables '" + tableName + "'";
        System.out.println("Running: " + sql);
        ResultSet res = stmt.executeQuery(sql);
        if (res.next()) {
            System.out.println(res.getString(1));
        }

        // describe table
        sql = "describe " + tableName;
        System.out.println("Running: " + sql);
        res = stmt.executeQuery(sql);
        while (res.next()) {
            System.out.println(res.getString(1) + "\t" + res.getString(2));
        }

        // load data into table
        // NOTE: filepath has to be local to the hive server
        // NOTE: /tmp/a.txt is a ctrl-A separated file with two fields per line
        String filepath = "/tmp/a.txt";
        sql = "load data local inpath '" + filepath + "' into table " + tableName;
        System.out.println("Running: " + sql);
        stmt.execute(sql);

        // select * query
        sql = "select * from " + tableName;
        System.out.println("Running: " + sql);
        res = stmt.executeQuery(sql);
        while (res.next()) {
            System.out.println(String.valueOf(res.getInt(1)) + "\t" + res.getString(2));
        }

        // regular hive query
        sql = "select count(1) from " + tableName;
        System.out.println("Running: " + sql);
        res = stmt.executeQuery(sql);
        while (res.next()) {
            System.out.println(res.getString(1));
        }

        // Release JDBC resources
        res.close();
        stmt.close();
        con.close();
    }
}
```

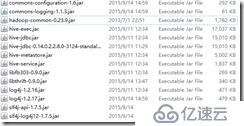

The jars shown in the screenshot above can either be added to the Eclipse build path or placed on the classpath at launch.

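For the JDBC driver you can use hive-jdbc.jar, in which case the other jars must be included as well. Alternatively, use the jdbc-standalone jar, which makes the other jars unnecessary. In either case the hadoop-common jar must be included.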
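Before running the client, it can help to check that the required classes are actually visible on the classpath. The following is a minimal sketch of such a check; `ClasspathCheck` and `missingClasses` are hypothetical names, and the two class names probed in `main` are the ones that appear in this article's code and stack traces.

```java
import java.util.ArrayList;
import java.util.List;

/** Sketch: reports which of the given classes cannot be loaded. */
final class ClasspathCheck {

    static List<String> missingClasses(String... classNames) {
        List<String> missing = new ArrayList<>();
        ClassLoader loader = ClasspathCheck.class.getClassLoader();
        for (String name : classNames) {
            try {
                // initialize=false: only locate the class, do not run static initializers
                Class.forName(name, false, loader);
            } catch (ClassNotFoundException | LinkageError e) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        List<String> missing = missingClasses(
                "org.apache.hive.jdbc.HiveDriver",        // from the hive-jdbc jar
                "org.apache.hadoop.conf.Configuration");  // from the hadoop-common jar
        System.out.println(missing.isEmpty()
                ? "All required classes found"
                : "Missing classes: " + missing);
    }
}
```

Run it with the same classpath as the client; any class it reports points to a jar that still needs to be added.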

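Run the program and wait for it to finish with correct results. If an exception occurs, follow its message to resolve it. Solutions for a few exceptions whose messages are not self-explanatory: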

1. If hadoop-common-0.23.9.jar is missing from the classpath or build path, the following error occurs:

```
Exception in thread "main" java.lang.NoClassDefFoundError: org/apache/hadoop/conf/Configuration
    at org.apache.hive.jdbc.HiveConnection.createBinaryTransport(HiveConnection.java:393)
    at org.apache.hive.jdbc.HiveConnection.openTransport(HiveConnection.java:187)
    at org.apache.hive.jdbc.HiveConnection.<init>(HiveConnection.java:163)
    at org.apache.hive.jdbc.HiveDriver.connect(HiveDriver.java:105)
    at java.sql.DriverManager.getConnection(DriverManager.java:664)
    at java.sql.DriverManager.getConnection(DriverManager.java:247)
    at HiveJdbcClient.main(HiveJdbcClient.java:28)
Caused by: java.lang.ClassNotFoundException: org.apache.hadoop.conf.Configuration
    at java.net.URLClassLoader.findClass(URLClassLoader.java:381)
    at java.lang.ClassLoader.loadClass(ClassLoader.java:424)
    at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:331)
    at java.lang.ClassLoader.loadClass(ClassLoader.java:357)
    ... 7 more
```

2. The Hive JDBC connection to the server hangs:

This can happen when the HiveServer JDBC driver is used to connect to HiveServer2. After the driver connects and sends the request its protocol requires, HiveServer2 returns no data, so the JDBC driver blocks and never returns.
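The real fix is to use the matching driver, but a client can also protect itself against a connect call that never returns. The sketch below is a generic Java timeout wrapper, not a feature of the Hive driver; `TimedCall` and `callWithTimeout` are hypothetical names. Note that a thread stuck in a blocking read may stay blocked in the background after the timeout, which is why the worker is a daemon thread.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

/** Sketch: runs a blocking call on a worker thread and gives up after a timeout. */
final class TimedCall {

    static <T> T callWithTimeout(Callable<T> task, long timeout, TimeUnit unit) throws Exception {
        // Daemon thread so a call that never returns cannot keep the JVM alive
        ExecutorService executor = Executors.newSingleThreadExecutor(runnable -> {
            Thread worker = new Thread(runnable, "timed-call");
            worker.setDaemon(true);
            return worker;
        });
        Future<T> future = executor.submit(task);
        try {
            return future.get(timeout, unit);
        } catch (TimeoutException e) {
            future.cancel(true); // interrupt the worker; the caller sees the timeout
            throw e;
        } catch (ExecutionException e) {
            // Surface the task's own exception (for example an SQLException)
            Throwable cause = e.getCause();
            if (cause instanceof Exception) {
                throw (Exception) cause;
            }
            throw e;
        } finally {
            executor.shutdownNow();
        }
    }
}
```

In the client, the connect step could then be written as `TimedCall.callWithTimeout(() -> DriverManager.getConnection(url, "hive", "hive"), 30, TimeUnit.SECONDS)`, where the 30-second limit is an arbitrary example value. A `TimeoutException` at this point is a hint to check whether the driver matches the server mode.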

3. TezTask fails with return code 1.

```
Exception in thread "main" java.sql.SQLException: Error while processing statement: FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.tez.TezTask
    at org.apache.hive.jdbc.HiveStatement.execute(HiveStatement.java:296)
    at org.apache.hive.jdbc.HiveStatement.executeQuery(HiveStatement.java:392)
    at HiveJdbcClient.main(HiveJdbcClient.java:40)
```

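In our case, return code 1 was a user authentication failure: a user name and password must be specified when connecting. It may be possible to configure the server so that queries run without user authentication. The default HDP installation uses hive / hive as the user name and password.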

4. TezTask fails with return code 2.

```
TaskAttempt 3 failed, info=[Error: Failure while running task:java.lang.IllegalArgumentException: tez.runtime.io.sort.mb 256 should be larger than 0 and should be less than the available task memory (MB):133
    at com.google.common.base.Preconditions.checkArgument(Preconditions.java:88)
    at org.apache.tez.runtime.library.common.sort.impl.ExternalSorter.getInitialMemoryRequirement(ExternalSorter.java:291)
    at org.apache.tez.runtime.library.output.OrderedPartitionedKVOutput.initialize(OrderedPartitionedKVOutput.java:95)
    at org.apache.tez.runtime.LogicalIOProcessorRuntimeTask$InitializeOutputCallable.call(LogicalIOProcessorRuntimeTask.java:430)
    at org.apache.tez.runtime.LogicalIOProcessorRuntimeTask$InitializeOutputCallable.call(LogicalIOProcessorRuntimeTask.java:409)
    at java.util.concurrent.FutureTask.run(FutureTask.java:266)
    at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
    at java.util.concurrent.FutureTask.run(FutureTask.java:266)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)
    at java.lang.Thread.run(Thread.java:745)
]], Vertex failed as one or more tasks failed. failedTasks:1, Vertex vertex_1441168955561_1508_2_00 [Map 1] killed/failed due to:null]
Vertex killed, vertexName=Reducer 2, vertexId=vertex_1441168955561_1508_2_01, diagnostics=[Vertex received Kill while in RUNNING state., Vertex killed as other vertex failed. failedTasks:0, Vertex vertex_1441168955561_1508_2_01 [Reducer 2] killed/failed due to:null]
DAG failed due to vertex failure. failedVertices:1 killedVertices:1
FAILED: Execution Error, return code 2 from org.apache.hadoop.hive.ql.exec.tez.TezTask
```

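Here return code 2 came from a bad parameter, typically a value that does not suit the environment. The stack trace above shows that tez.runtime.io.sort.mb, set to 256, is larger than the available task memory (133 MB). To fix it, either change the value in the configuration file or set it to a suitable size before running the query.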
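The constraint in the error message above (greater than 0 and less than the available task memory) can be checked on the client before the setting is sent. A minimal sketch follows; `TezSortMemory` and its methods are hypothetical helpers, and the values 128 and 133 are taken from this article's commented-out `SET` statement and from the stack trace.

```java
/** Sketch: validates tez.runtime.io.sort.mb and builds the matching SET statement. */
final class TezSortMemory {

    /** Mirrors the rule in the error message: 0 < sortMb < availableTaskMb. */
    static boolean isValid(int sortMb, int availableTaskMb) {
        return sortMb > 0 && sortMb < availableTaskMb;
    }

    static String setStatement(int sortMb, int availableTaskMb) {
        if (!isValid(sortMb, availableTaskMb)) {
            throw new IllegalArgumentException("tez.runtime.io.sort.mb " + sortMb
                    + " must be > 0 and < available task memory (MB): " + availableTaskMb);
        }
        return "SET tez.runtime.io.sort.mb=" + sortMb;
    }

    public static void main(String[] args) {
        // 133 MB is the available task memory reported in the stack trace
        System.out.println(setStatement(128, 133)); // prints "SET tez.runtime.io.sort.mb=128"
    }
}
```

The resulting string can be passed to `stmt.execute(...)` before the query, in the same way the client code sets `hive.execution.engine`.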

With the setup and parameter fixes above, the application can connect to the Hive database over JDBC correctly.

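You can also manage Hive with the SQuirreL SQL GUI client. Configure its driver the same way as in the code, using the same jars, driver class, and URL. Once the test connection succeeds and an alias is created, you can connect to Hive and manage and operate the database conveniently.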
