1 apiserver 提供资源操作的唯一入口,并提供认证,授权,访问控制API注册和发现等机制

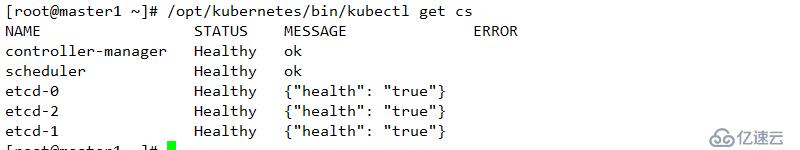

2 scheduler 负责资源的调度,按照预定的调度策略将POD调度到相应节点上

3 controller 负责维护集群的状态,如故障检测,自动扩展,滚动更新等

1 kubelet 维护容器的声明周期,同时也负载挂载和网络管理

2 kube-proxy 负责为service提供cluster内部服务的服务发现和负载均衡

1 etcd 保存整个集群状态

2 flannel 为集群提供网络环境

1 coreDNS 负责为整个集群提供DNS服务

2 ingress controller 为服务提供外网入口

3 promentheus 提供资源监控

4 dashboard 提供GUI

| Role | IP address | Components |
|---|---|---|
| master1 | 192.168.1.10 | docker, etcd, kubectl, flannel, kube-apiserver, kube-controller-manager, kube-scheduler |
| master2 | 192.168.1.20 | docker, etcd, kubectl, flannel, kube-apiserver, kube-controller-manager, kube-scheduler |
| node1 | 192.168.1.30 | kubelet, kube-proxy, docker, flannel, etcd |
| node2 | 192.168.1.40 | kubelet, kube-proxy, docker, flannel |
| nginx load balancer | 192.168.1.100 | nginx |

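Notes:

1 Disable SELinux.

2 Stop the firewalld firewall and disable it at boot.

3 Configure a time synchronization server.

4 Configure name resolution between the master and node hosts; entries in /etc/hosts are sufficient.

5 Minimize swap usage. The setting below tells the kernel to avoid swapping but does not turn swap off; the kubelet configuration later in this guide sets failSwapOn: false so that the kubelet still starts while swap is present.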

echo "vm.swappiness = 0">> /etc/sysctl.conf

sysctl -p
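If you would rather turn swap off completely, the usual approach is `swapoff -a` plus commenting out the swap entries in /etc/fstab. The following is a minimal sketch under that assumption; `comment_out_swap` is a hypothetical helper name, and the two commands that need root are left commented out so the snippet has no side effects when pasted.

```sh
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# $1 is the file to edit; a backup copy is written to "$1.bak".
comment_out_swap() {
  sed -i.bak -E 's/^([^#].*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}

# Run as root on every master and node:
# swapoff -a                     # turn swap off immediately
# comment_out_swap /etc/fstab    # keep it off after a reboot
```

Lines that are already commented out, and entries for other file systems, are left unchanged.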

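etcd is a distributed key-value store. Its members elect a leader among themselves, the remaining members become followers and replicate the leader's data, and all cluster state is stored in etcd. Because an etcd cluster only works while a majority of its members can elect a leader, deploy an odd number of members: an even member count adds no extra fault tolerance.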

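etcd sizing

Taking read/write efficiency and stability into account, the following sizing is a reasonable baseline:

With one or two servers in the Kubernetes cluster, deploy a single etcd member.

With three or four servers, deploy three etcd members.

With five or six servers, deploy five etcd members.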
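The sizing above follows from the majority rule: a cluster of N members needs N/2 + 1 of them (integer division) to agree, so it tolerates (N - 1)/2 failed members. A minimal sketch of that arithmetic, with function names chosen here purely for illustration:

```sh
# Majority (quorum) size for an etcd cluster of $1 members.
etcd_quorum() {
  echo $(( $1 / 2 + 1 ))
}

# Number of members that may fail while a majority still remains.
etcd_fault_tolerance() {
  echo $(( ($1 - 1) / 2 ))
}

for n in 1 2 3 4 5 6; do
  echo "members=$n quorum=$(etcd_quorum "$n") tolerated_failures=$(etcd_fault_tolerance "$n")"
done
```

Three and four members both tolerate one failure, which is why the fourth server does not need to run etcd.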

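etcd members communicate with each other over point-to-point HTTPS.

Communication with external clients is encrypted point-to-point as well; within a Kubernetes cluster, the other components reach etcd through the apiserver.

Communication between etcd members requires certificates issued by a CA, as does communication between etcd and its clients, including the apiserver.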

1 Use cfssl to generate self-signed certificates. Download the tools:

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

2 Make them executable and move them into place:

chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64

mv cfssl_linux-amd64 /usr/local/bin/cfssl

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

3 Create the following files and generate the certificates:

1 ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

2 ca-csr.json

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Shaanxi",

"ST": "xi'an"

}

]

}

3 server-csr.json

{

"CN": "etcd",

"hosts": [

"192.168.1.10",

"192.168.1.20",

"192.168.1.30"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Shaanxi",

"ST": "xi'an"

}

]

}4 生成证书

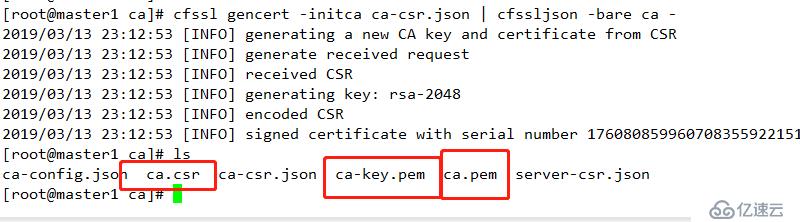

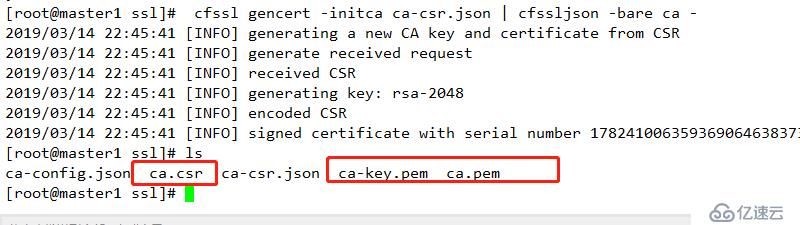

1 cfssl gencert -initca ca-csr.json | cfssljson -bare ca -结果如下

2 cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server结果如下

1 下载相关软件包

wget https://github.com/etcd-io/etcd/releases/download/v3.2.12/etcd-v3.2.12-linux-amd64.tar.gz2 创建相关配置文件目录并解压相关配置

mkdir /opt/etcd/{bin,cfg,ssl} -p

tar xf etcd-v3.2.12-linux-amd64.tar.gz

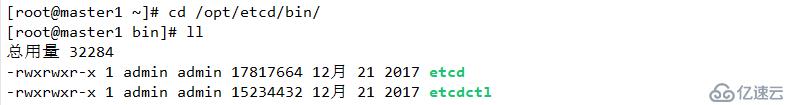

mv etcd-v3.2.12-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/结果如下:

3 创建配置文件etcd

#[Member]

ETCD_NAME="etcd01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.1.10:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.1.10:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.1.10:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.1.10:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.1.10:2380,etcd02=https://192.168.1.20:2380,etcd03=https://192.168.1.30:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

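Parameter reference:

ETCD_NAME # member name

ETCD_DATA_DIR # data directory; stores the member ID, cluster ID, and other data

ETCD_LISTEN_PEER_URLS # URL to listen on for traffic from other members (local IP and port)

ETCD_LISTEN_CLIENT_URLS # URL to listen on for client traffic

ETCD_INITIAL_ADVERTISE_PEER_URLS # peer URL advertised to the rest of the cluster

ETCD_ADVERTISE_CLIENT_URLS # client URL advertised to the rest of the cluster

ETCD_INITIAL_CLUSTER # addresses of all members in the cluster

ETCD_INITIAL_CLUSTER_TOKEN # cluster token

ETCD_INITIAL_CLUSTER_STATE # state when joining: new for a new cluster, existing to join an existing cluster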
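ETCD_INITIAL_CLUSTER must be identical on every member, so it can help to generate the value once instead of typing it three times. A minimal sketch, assuming the etcd01, etcd02, ... naming and peer port 2380 used in this guide; `build_initial_cluster` is a hypothetical helper name:

```sh
# Hypothetical helper: build the ETCD_INITIAL_CLUSTER value from member IPs.
# Members are named etcd01, etcd02, ... in argument order.
build_initial_cluster() {
  i=1
  out=""
  for ip in "$@"; do
    name=$(printf 'etcd%02d' "$i")
    # Prefix a comma only when out already holds an earlier member.
    out="${out:+$out,}${name}=https://${ip}:2380"
    i=$((i + 1))
  done
  echo "$out"
}

build_initial_cluster 192.168.1.10 192.168.1.20 192.168.1.30
```

The printed string can be pasted into the ETCD_INITIAL_CLUSTER line of each member's configuration file.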

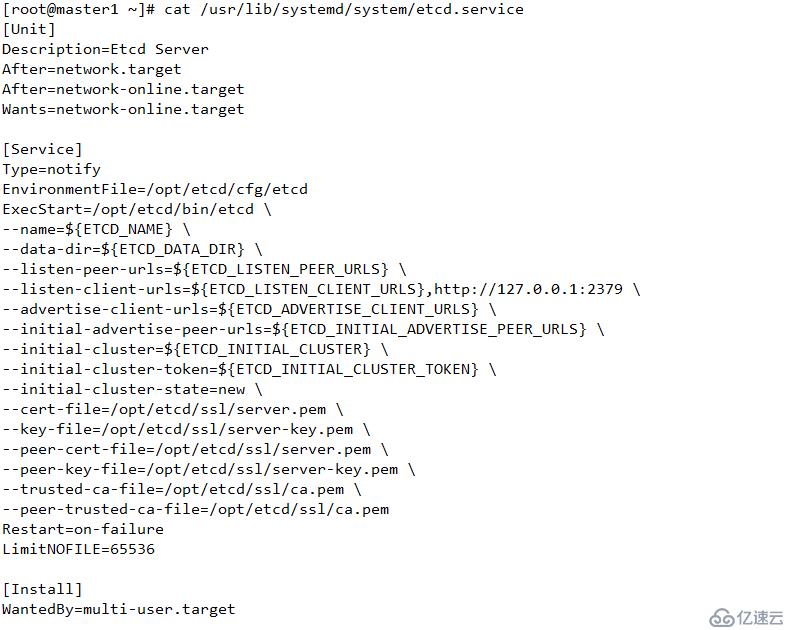

4 Create the systemd unit file etcd.service:

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/cfg/etcd

ExecStart=/opt/etcd/bin/etcd \

--name=${ETCD_NAME} \

--data-dir=${ETCD_DATA_DIR} \

--listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=/opt/etcd/ssl/server.pem \

--key-file=/opt/etcd/ssl/server-key.pem \

--peer-cert-file=/opt/etcd/ssl/server.pem \

--peer-key-file=/opt/etcd/ssl/server-key.pem \

--trusted-ca-file=/opt/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

The result is as follows:

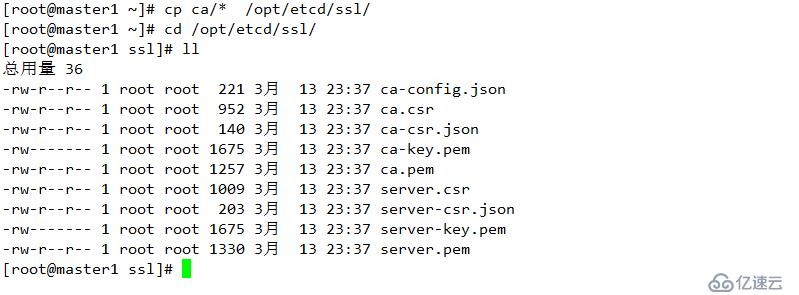

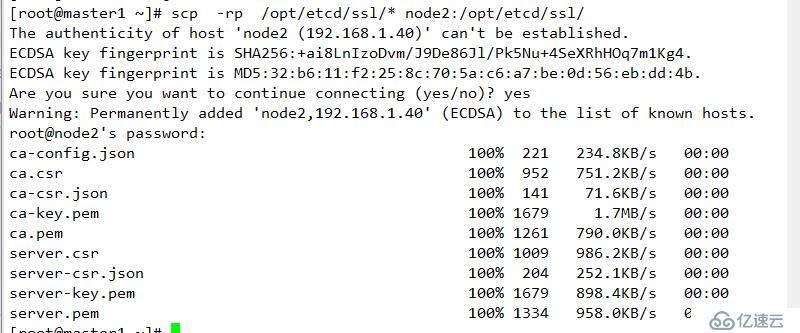

复制密钥信息到指定位置

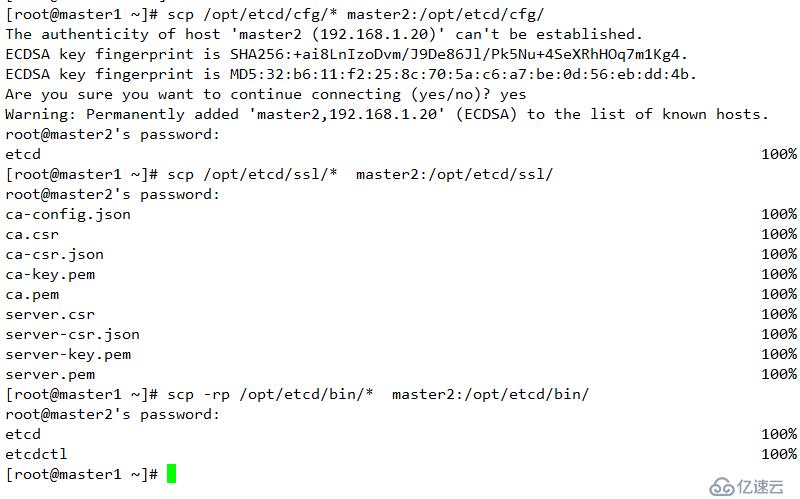

master2

1 创建文件

mkdir /opt/etcd/{bin,cfg,ssl} -p2 复制相关配置文件至指定节点master2

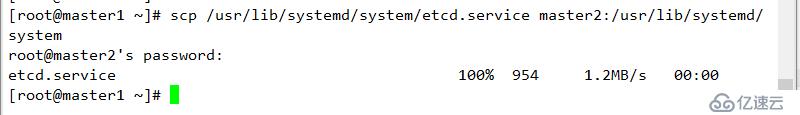

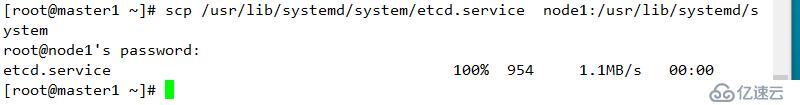

Modify the configuration

etcd configuration:

#[Member]

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.1.20:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.1.20:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.1.20:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.1.20:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.1.10:2380,etcd02=https://192.168.1.20:2380,etcd03=https://192.168.1.30:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

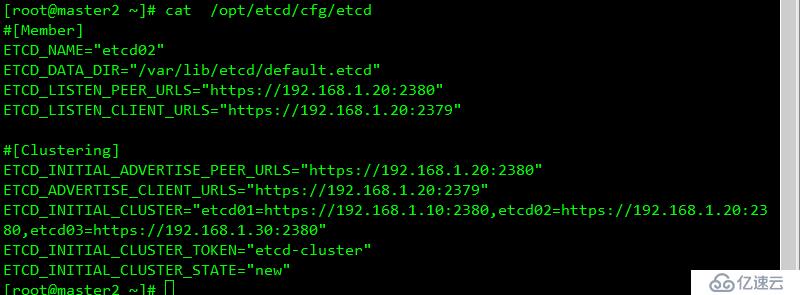

ETCD_INITIAL_CLUSTER_STATE="new"结果如下

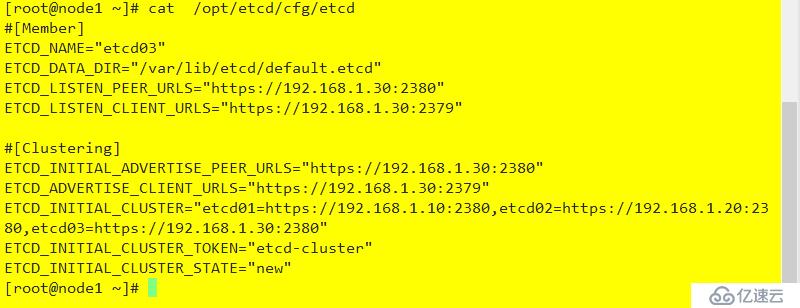

Configure node1 in the same way as master2:

mkdir /opt/etcd/{bin,cfg,ssl} -p

scp /opt/etcd/cfg/* node1:/opt/etcd/cfg/

scp /opt/etcd/bin/* node1:/opt/etcd/bin/

scp /opt/etcd/ssl/* node1:/opt/etcd/ssl/

Modify the configuration file:

#[Member]

ETCD_NAME="etcd03"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.1.30:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.1.30:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.1.30:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.1.30:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.1.10:2380,etcd02=https://192.168.1.20:2380,etcd03=https://192.168.1.30:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

The configuration result is as follows:

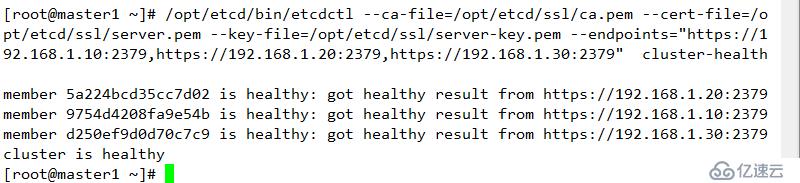

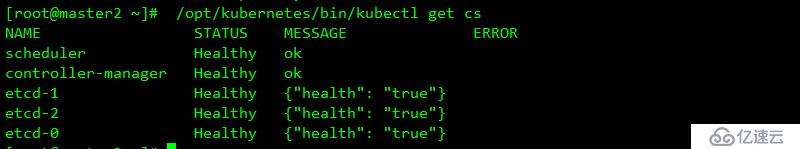

三个节点启动并设置开机自启动 (同时启动)

systemctl start etcd

systemctl enable etcd.service验证:

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.1.10:2379,https://192.168.1.20:2379,https://192.168.1.30:2379" cluster-health结果如下

**ETCD扩展:https://www.kubernetes.org.cn/5021.html

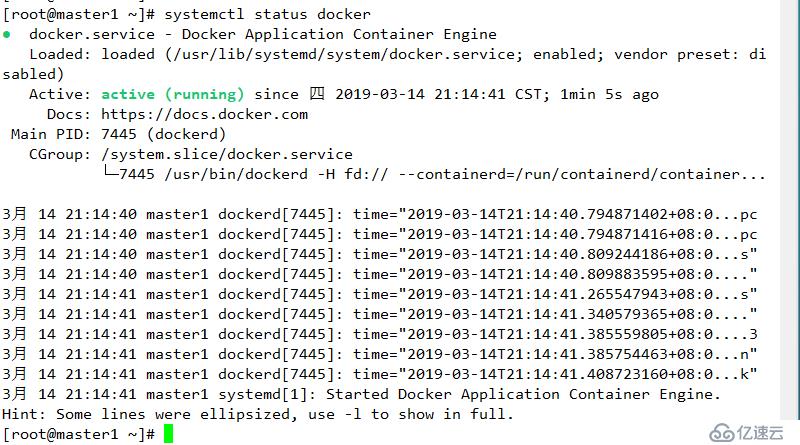

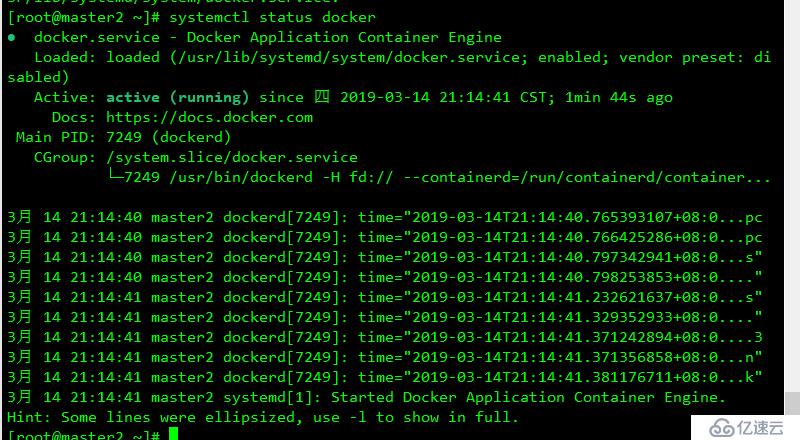

yum install -y yum-utils device-mapper-persistent-data lvm2yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo![] yum install docker-ce -ycurl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://bc437cce.m.daocloud.iosystemctl restart docker

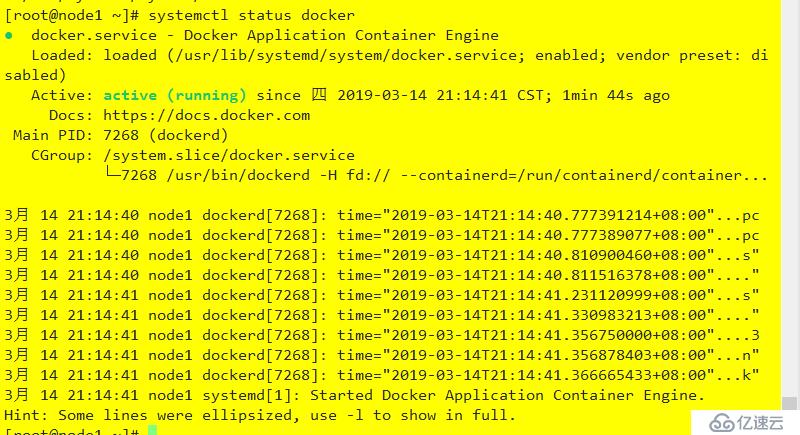

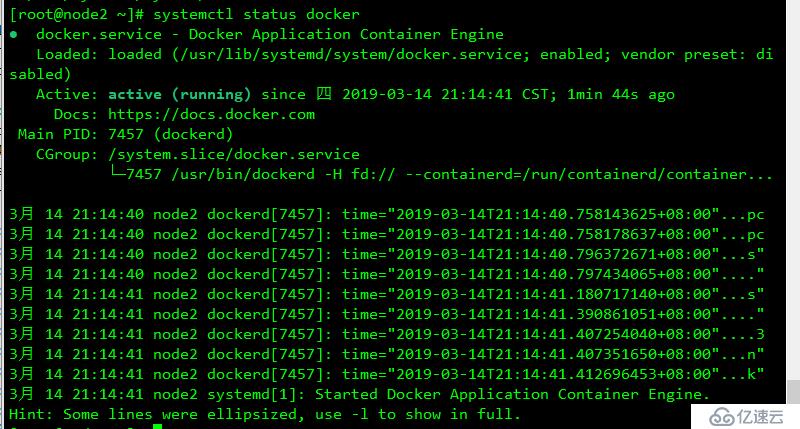

systemctl enable docker结果如下

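flannel uses vxlan (supported by the Linux kernel since 3.7.0) as its default backend transport. It does not support network policy, its transport builds on Linux TUN/TAP, and it uses etcd to track network allocations.

To avoid subnet conflicts, flannel reserves one network (the one written to etcd below), automatically assigns a subnet from it to the Docker engine on each node, and persists the assignments in etcd.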

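flannel backend modes:

1 vxlan

2 vxlan with direct routing (DirectRouting): nodes on the same network communicate as with host-gw; all other traffic uses vxlan.

3 host-gw (host gateway): forwards packets directly through routes that each node creates towards the target container addresses. All nodes must be on the same layer 3 network. host-gw forwards efficiently and is easy to set up, but if traffic has to cross several networks, more routes are involved and performance drops.

4 UDP: tunnels traffic in ordinary UDP packets. Performance is low, so use it only where the other backends are not supported.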
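As an illustration of mode 2, the network configuration written to etcd can carry the vxlan backend's `DirectRouting` option. A minimal sketch, assuming the 172.17.0.0/16 network used in this guide; `flannel_config` is a hypothetical helper name, and you should confirm that the flannel version you deploy supports the option before relying on it:

```sh
# Hypothetical helper: print a flannel network config that uses vxlan
# with direct routing enabled for the network given in $1.
flannel_config() {
  printf '{"Network":"%s","Backend":{"Type":"vxlan","DirectRouting":true}}\n' "$1"
}

flannel_config 172.17.0.0/16
```

The printed JSON would take the place of the plain vxlan value in the `etcdctl ... set /coreos.com/network/config` command.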

flanneld stores its subnet information in etcd, so make sure it can connect to etcd, and write the planned network range into etcd first:

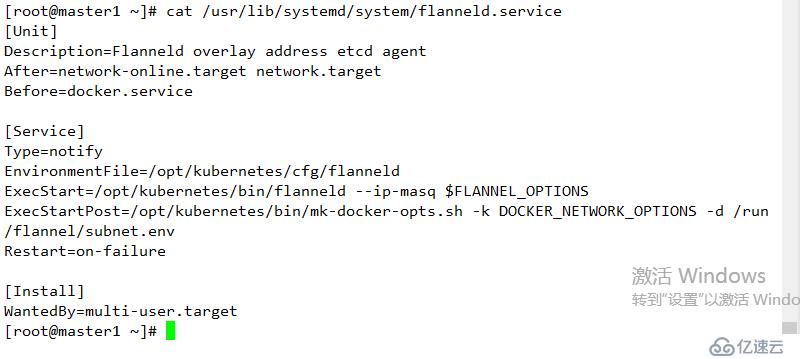

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.1.10:2379,https://192.168.1.20:2379,https://192.168.1.30:2379" set /coreos.com/network/config '{"Network":"172.17.0.0/16","Backend":{"Type":"vxlan"}}'

wget https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz

tar xf flannel-v0.10.0-linux-amd64.tar.gz

mkdir /opt/kubernetes/bin -p

mv flanneld mk-docker-opts.sh /opt/kubernetes/bin/

mkdir /opt/kubernetes/cfg

/opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.1.10:2379,https://192.168.1.20:2379,https://192.168.1.30:2379 -etcd-cafile=/opt/etcd/ssl/ca.pem -etcd-certfile=/opt/etcd/ssl/server.pem -etcd-keyfile=/opt/etcd/ssl/server-key.pem"

cat /usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

The result is as follows:

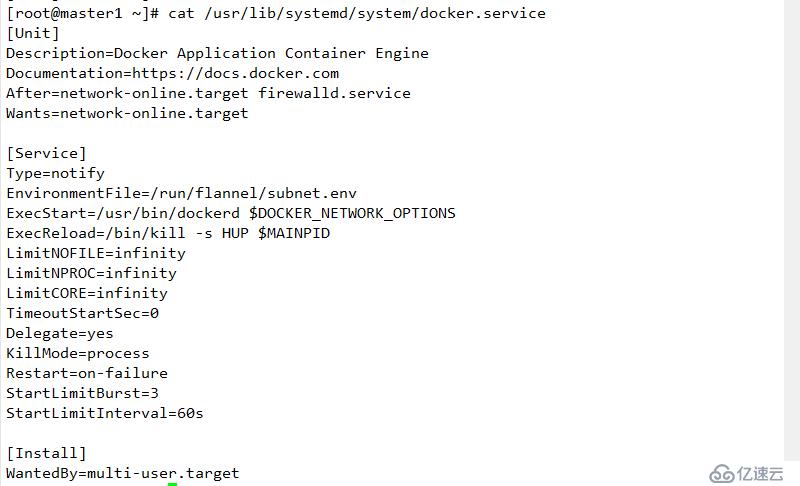

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

The result is as follows:

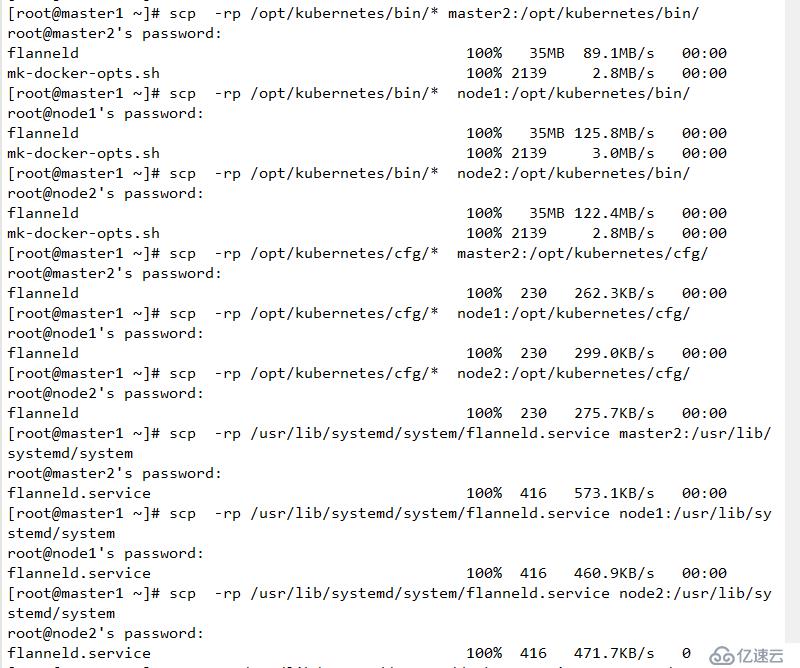

1 其他节点创建相关etcd目录和相关kubernetes目录

mkdir /opt/kubernetes/{cfg,bin} -p

mkdir /opt/etcd/ssl -p2 复制相关配置文件至指定主机

复制相关flannd访问

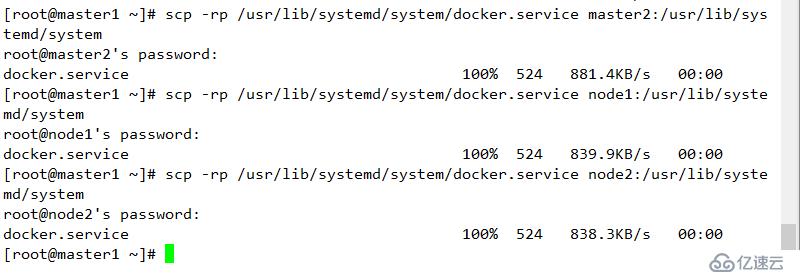

复制docker配置信息

systemctl daemon-reload

systemctl start flanneld

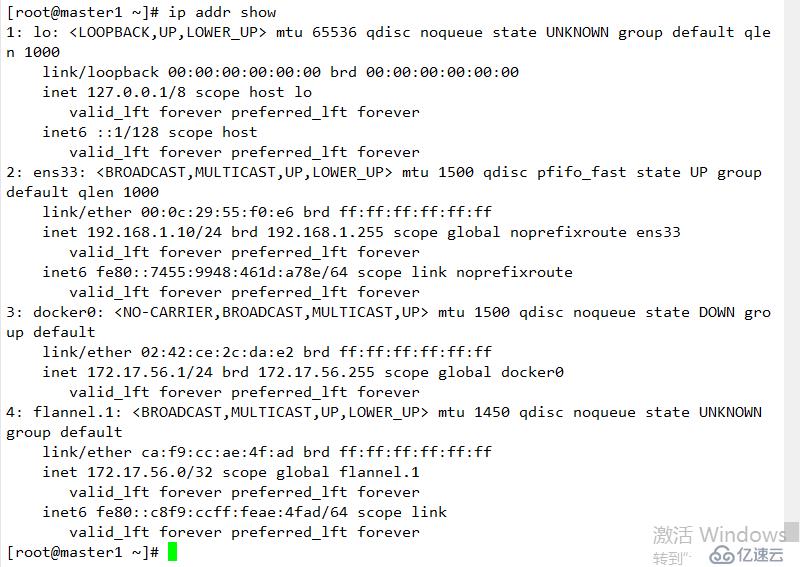

systemctl enable flanneld

Check the result:

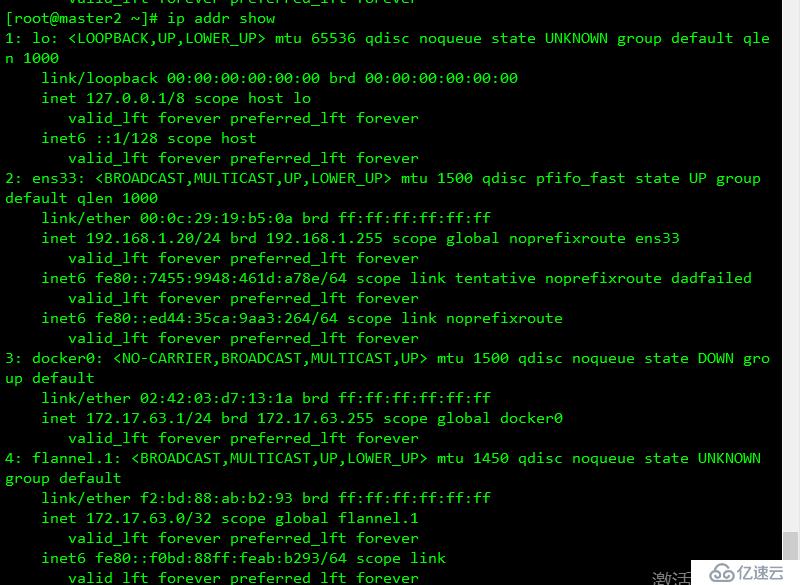

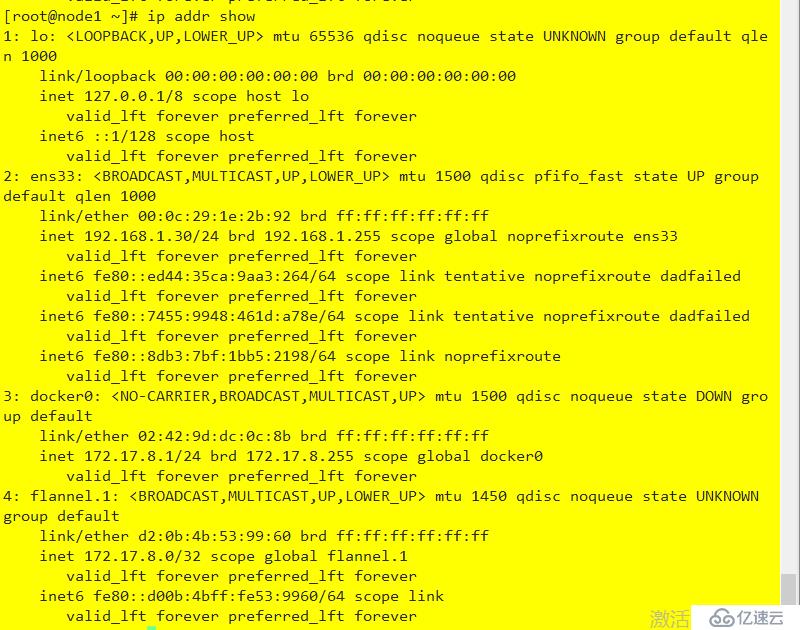

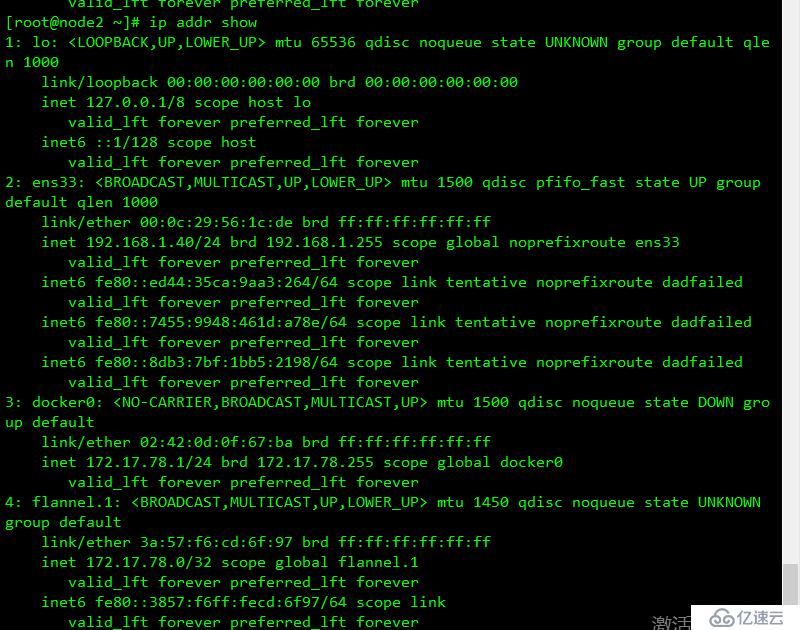

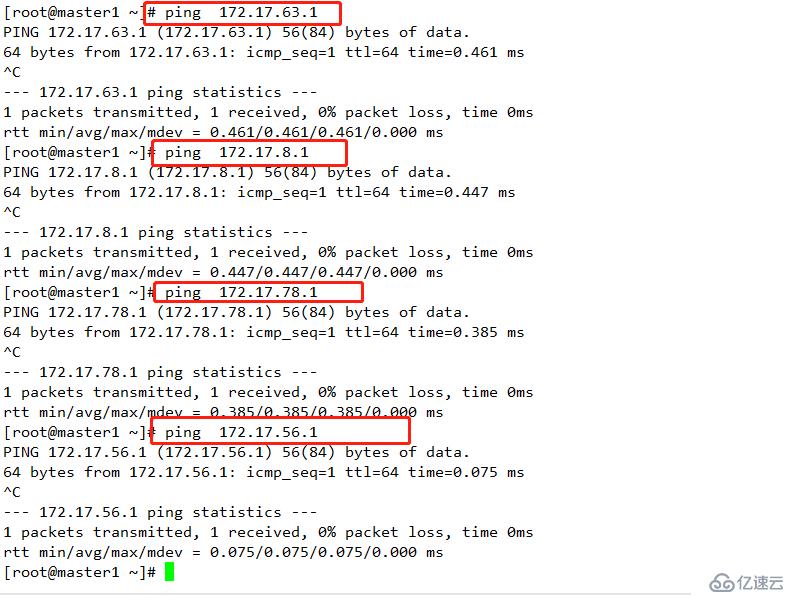

Note: make sure docker0 and flannel.1 are in the same network range and that each node can reach the docker0 IP of every other node.

View the flannel configuration stored in etcd:

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.1.10:2379,https://192.168.1.20:2379,https://192.168.1.30:2379" get /coreos.com/network/config

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.1.10:2379,https://192.168.1.20:2379,https://192.168.1.30:2379" get /coreos.com/network/subnets/172.17.56.0-24

The API server is the single entry point for all resource operations and provides authentication, authorization, access control, and API registration and discovery.

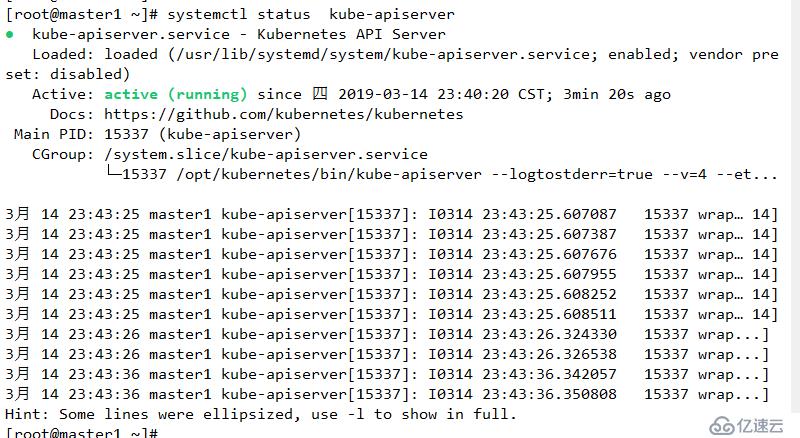

Check the service logs:

journalctl -exu kube-apiserver

mkdir /opt/kubernetes/ssl

cat ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

cat ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Shaanxi",

"ST": "xi'an",

"O": "k8s",

"OU": "System"

}

]

}

cat server-csr.json

{
  "CN": "kubernetes",
  "hosts": [
    "10.0.0.1",
    "127.0.0.1",
    "192.168.1.10",
    "192.168.1.20",
    "192.168.1.100",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Shaanxi",
      "ST": "xi'an",
      "O": "k8s",
      "OU": "System"
    }
  ]
}

kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Shaanxi",

"ST": "xi'an",

"O": "k8s",

"OU": "System"

}

]

}1

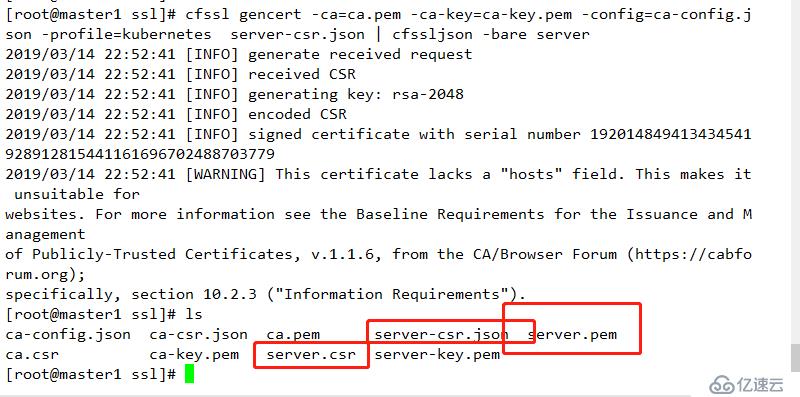

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -查看

2

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server 查看结果

3

生成证书

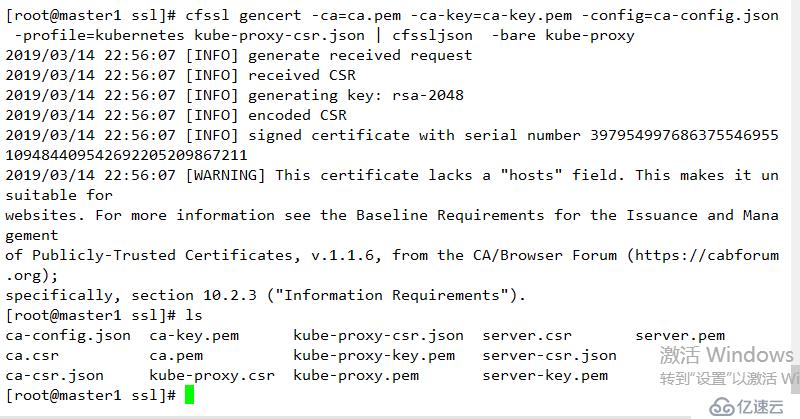

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

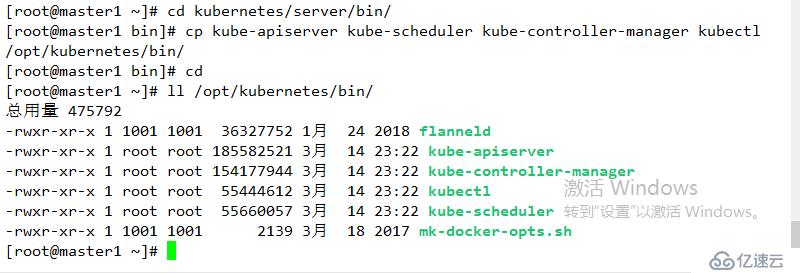

wget https://storage.googleapis.com/kubernetes-release/release/v1.11.6/kubernetes-server-linux-amd64.tar.gz

Unpack the archive:

tar xf kubernetes-server-linux-amd64.tar.gz

Change into the binary directory:

cd kubernetes/server/bin/

Copy the binaries to the target directory:

cp kube-apiserver kube-scheduler kube-controller-manager kubectl /opt/kubernetes/bin/

The result is as follows:

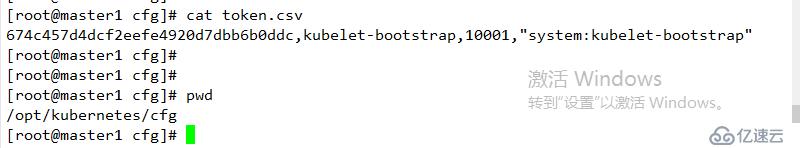

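Create the token file /opt/kubernetes/cfg/token.csv; it is used later:

674c457d4dcf2eefe4920d7dbb6b0ddc,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

The format is as follows:

Column 1: a random string that you generate yourself

Column 2: user name

Column 3: UID

Column 4: user group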
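One common way to produce the random string in the first column is to read 16 bytes from /dev/urandom and print them as 32 hexadecimal characters. A minimal sketch; `generate_token` is a hypothetical helper name, and the user name, UID, and group are the ones used in this guide:

```sh
# Hypothetical helper: print a random 32-character hexadecimal token.
generate_token() {
  head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

token=$(generate_token)

# Print one token.csv line: token,user,UID,"group"
echo "${token},kubelet-bootstrap,10001,\"system:kubelet-bootstrap\""
```

Redirect the output to /opt/kubernetes/cfg/token.csv, and reuse the same token wherever this guide refers to the bootstrap token.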

The apiserver configuration file /opt/kubernetes/cfg/kube-apiserver is as follows:

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--etcd-servers=https://192.168.1.10:2379,https://192.168.1.20:2379,https://192.168.1.30:2379 \

--bind-address=192.168.1.10 \

--secure-port=6443 \

--advertise-address=192.168.1.10 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

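Parameter reference:

--logtostderr log to standard error

--v log verbosity level

--etcd-servers etcd cluster endpoints

--bind-address listen address

--secure-port HTTPS port

--advertise-address address advertised to the other members of the cluster

--allow-privileged allow privileged containers

--service-cluster-ip-range virtual IP range for Services

--enable-admission-plugins admission control plugins

--authorization-mode authorization modes; enables RBAC and Node authorization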

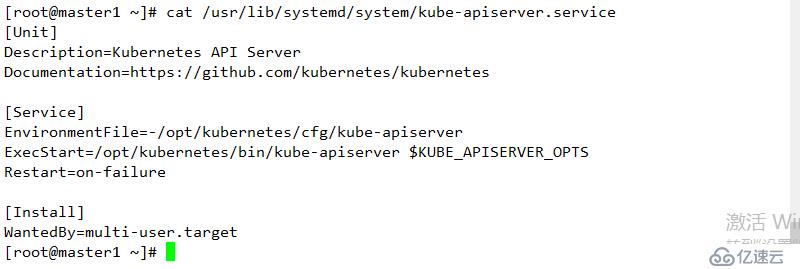

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver

ExecStart=/opt/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

The result is as follows:

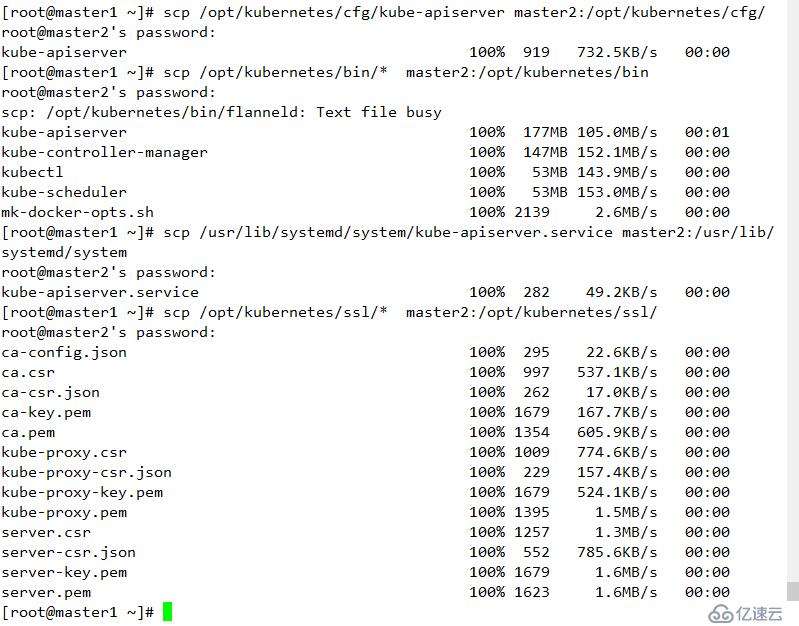

scp /opt/kubernetes/cfg/kube-apiserver master2:/opt/kubernetes/cfg/

scp /opt/kubernetes/bin/* master2:/opt/kubernetes/bin

scp /usr/lib/systemd/system/kube-apiserver.service master2:/usr/lib/systemd/system

scp /opt/kubernetes/ssl/* master2:/opt/kubernetes/ssl/

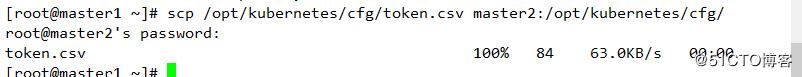

scp /opt/kubernetes/cfg/token.csv master2:/opt/kubernetes/cfg/

Result:

/opt/kubernetes/cfg/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--etcd-servers=https://192.168.1.10:2379,https://192.168.1.20:2379,https://192.168.1.30:2379 \

--bind-address=192.168.1.20 \

--secure-port=6443 \

--advertise-address=192.168.1.20 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

systemctl daemon-reload

systemctl start kube-apiserver

systemctl enable kube-apiserver

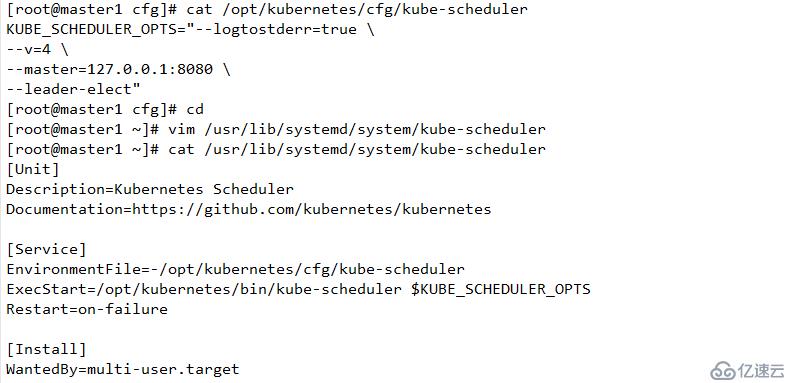

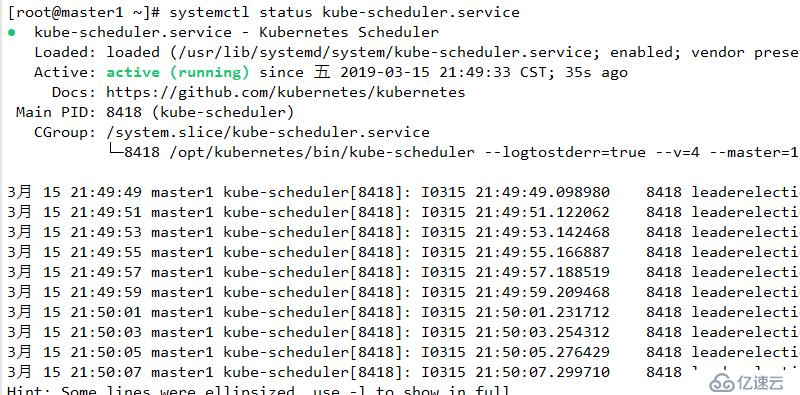

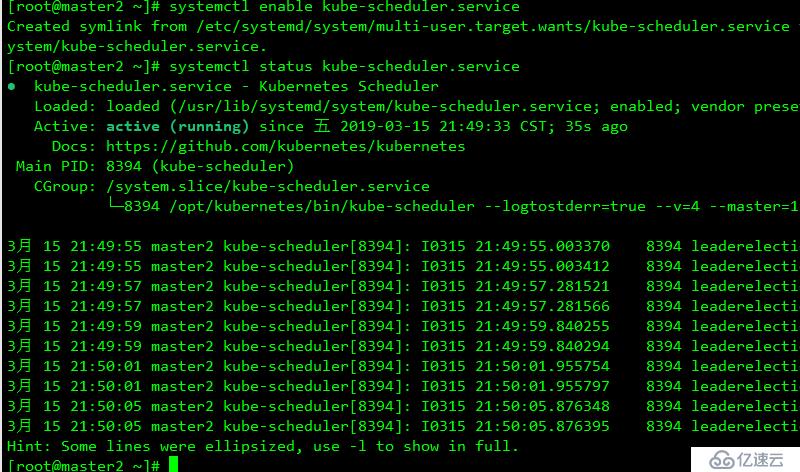

The scheduler schedules resources, placing Pods on suitable nodes according to the configured scheduling policy.

/opt/kubernetes/cfg/kube-scheduler

KUBE_SCHEDULER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

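--leader-elect"

Parameter reference:

--master address of the local apiserver

--leader-elect elect a leader automatically when several instances of the component are running

/usr/lib/systemd/system/kube-scheduler.service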

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler

ExecStart=/opt/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

The result is as follows:

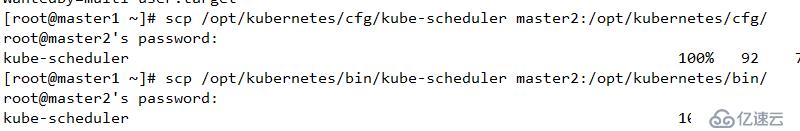

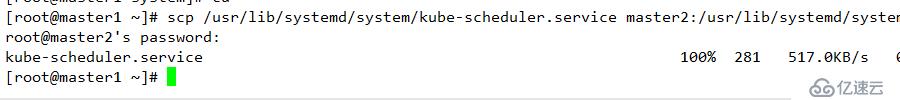

scp /opt/kubernetes/cfg/kube-scheduler master2:/opt/kubernetes/cfg/

scp /opt/kubernetes/bin/kube-scheduler master2:/opt/kubernetes/bin/

scp /usr/lib/systemd/system/kube-scheduler.service master2:/usr/lib/systemd/system

systemctl daemon-reload

systemctl start kube-scheduler.service

systemctl enable kube-scheduler.service

Check the result:

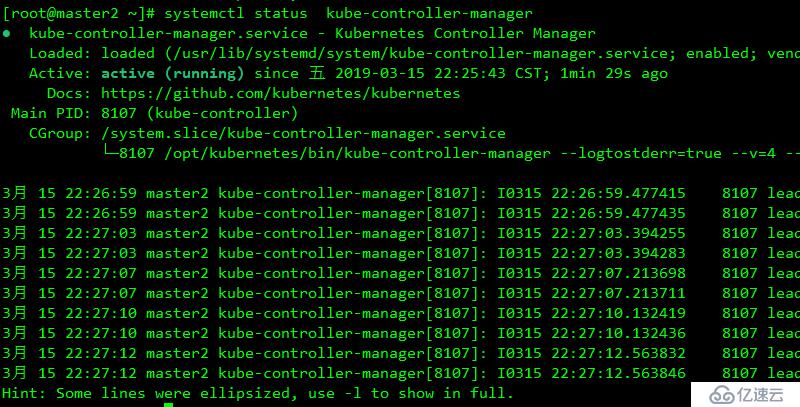

The controller manager maintains cluster state, for example failure detection, automatic scaling, and rolling updates.

/opt/kubernetes/cfg/kube-controller-manager

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect=true \

--address=127.0.0.1 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem"

/usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager

ExecStart=/opt/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

scp /opt/kubernetes/bin/kube-controller-manager master2:/opt/kubernetes/bin/

scp /opt/kubernetes/cfg/kube-controller-manager master2:/opt/kubernetes/cfg/

scp /usr/lib/systemd/system/kube-controller-manager.service master2:/usr/lib/systemd/system

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl restart kube-controller-manager

Check the result:

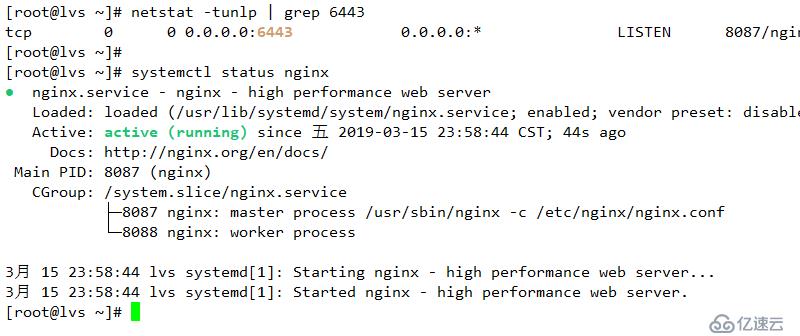

nginx provides load balancing for master1 and master2.

/etc/yum.repos.d/nginx.repo

[nginx]

name=nginx repo

baseurl=http://nginx.org/packages/centos/7/x86_64/

gpgcheck=0

enabled=1

yum -y install nginx

/etc/nginx/nginx.conf

user nginx;

worker_processes 1;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

events {

worker_connections 1024;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

#tcp_nopush on;

keepalive_timeout 65;

#gzip on;

include /etc/nginx/conf.d/*.conf;

}

stream {

upstream api-server {

server 192.168.1.10:6443;

server 192.168.1.20:6443;

}

server {

listen 6443;

proxy_pass api-server;

}

}

systemctl start nginx

systemctl enable nginx

The kubelet maintains the container lifecycle and also manages volume mounts and networking.

cd /opt/kubernetes/bin/

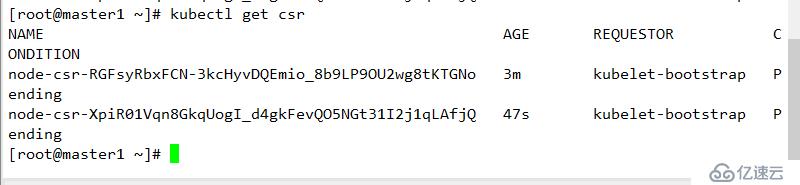

./kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

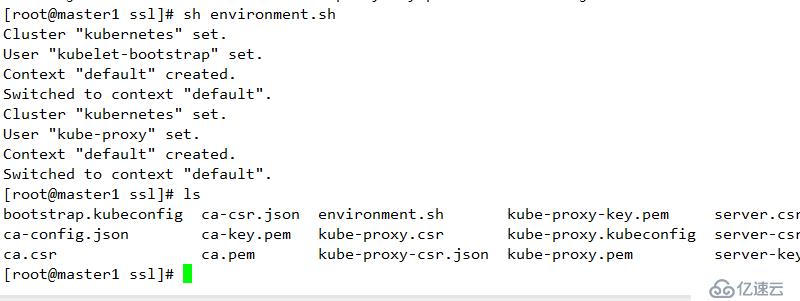

--user=kubelet-bootstrap在生成证书的目录下执行

cd /opt/kubernetes/ssl/

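The script is as follows:

environment.sh

# Create the kubelet bootstrapping kubeconfig

# The token below is the random string generated earlier

BOOTSTRAP_TOKEN=674c457d4dcf2eefe4920d7dbb6b0ddc

# IP address and port of the nginx load balancer in front of the apiservers

KUBE_APISERVER="https://192.168.1.100:6443"

# Set the cluster parameters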

kubectl config set-cluster kubernetes \

--certificate-authority=./ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# Set the client authentication parameters

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=bootstrap.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Set the default context

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# Create the kube-proxy kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=./ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=./kube-proxy.pem \

--client-key=./kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

Add the following to /etc/profile:

export PATH=/opt/kubernetes/bin:$PATH

source /etc/profile

sh environment.sh

scp -rp bootstrap.kubeconfig kube-proxy.kubeconfig node1:/opt/kubernetes/cfg/

scp -rp bootstrap.kubeconfig kube-proxy.kubeconfig node2:/opt/kubernetes/cfg/cd /root/kubernetes/server/binscp kubelet kube-proxy node1:/opt/kubernetes/bin/

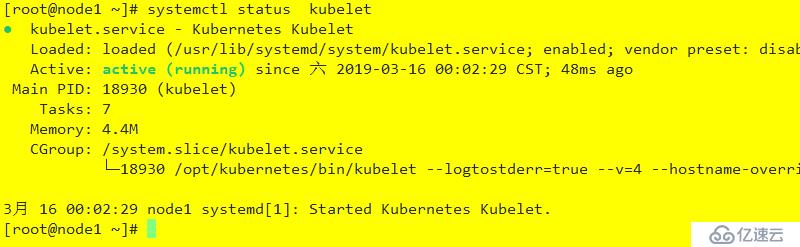

scp kubelet kube-proxy node2:/opt/kubernetes/bin//opt/kubernetes/cfg/kubelet

KUBELET_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=192.168.1.30 \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet.config \

--cert-dir=/opt/kubernetes/ssl \

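--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

Parameter reference:

--hostname-override host name shown in the cluster

--kubeconfig location of the kubeconfig file, which is generated automatically

--bootstrap-kubeconfig kubeconfig file used for bootstrapping

--cert-dir directory where issued certificates are stored

--pod-infra-container-image image that manages the Pod network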

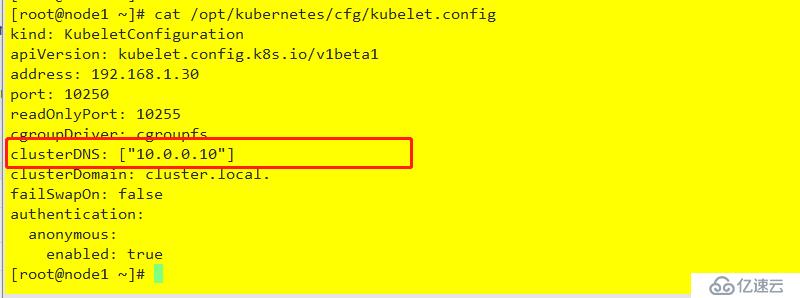

/opt/kubernetes/cfg/kubelet.config

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 192.168.1.30

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["10.0.0.10"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:
  anonymous:
    enabled: true

/usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet

ExecStart=/opt/kubernetes/bin/kubelet $KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

scp /opt/kubernetes/cfg/kubelet node2:/opt/kubernetes/cfg/

scp /opt/kubernetes/cfg/kubelet.config node2:/opt/kubernetes/cfg/

scp /usr/lib/systemd/system/kubelet.service node2:/usr/lib/systemd/system

/opt/kubernetes/cfg/kubelet

KUBELET_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=192.168.1.40 \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet.config \

--cert-dir=/opt/kubernetes/ssl \

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

/opt/kubernetes/cfg/kubelet.config

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 192.168.1.40

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["10.0.0.10"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:

anonymous:

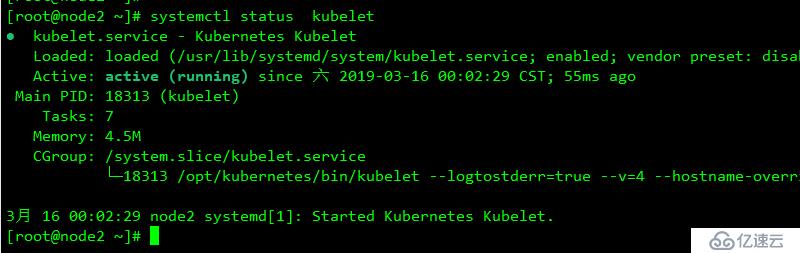

enabled: truesystemctl daemon-reload

systemctl enable kubelet

systemctl restart kubelet

kube-proxy provides in-cluster service discovery and load balancing for Services by creating the corresponding iptables and ipvs rules.

/opt/kubernetes/cfg/kube-proxy

KUBE_PROXY_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=192.168.1.30 \

--cluster-cidr=10.0.0.0/24 \

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"

/usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy $KUBE_PROXY_OPTS

Restart=on-failure

[Install]

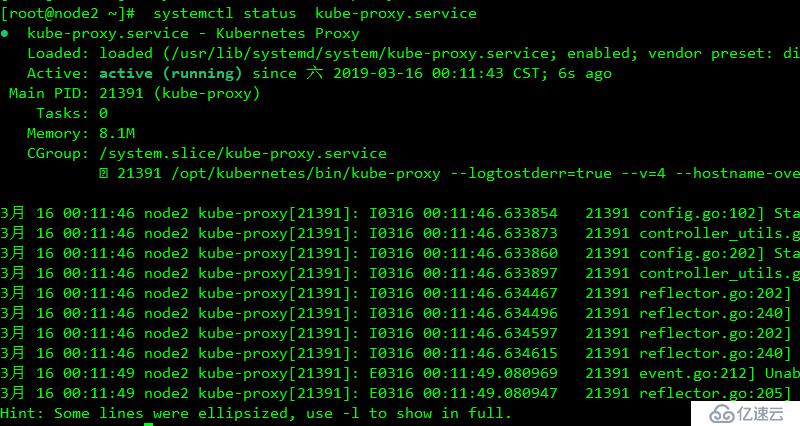

WantedBy=multi-user.targetscp /opt/kubernetes/cfg/kube-proxy node2:/opt/kubernetes/cfg/

scp /usr/lib/systemd/system/kube-proxy.service node2:/usr/lib/systemd/system/opt/kubernetes/cfg/kube-proxy

KUBE_PROXY_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=192.168.1.40 \

--cluster-cidr=10.0.0.0/24 \

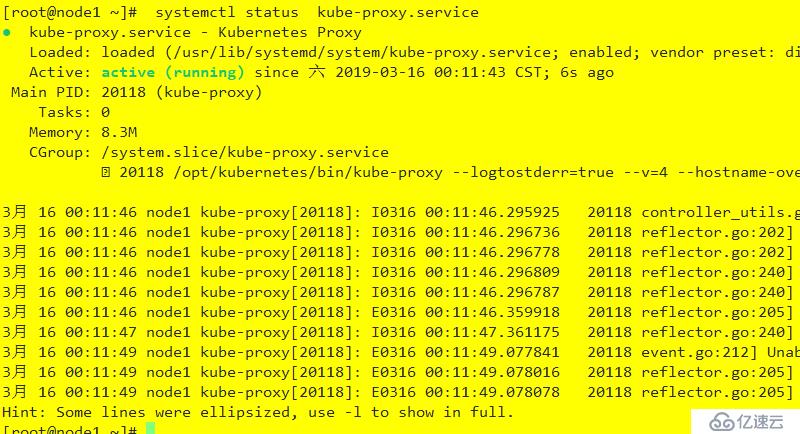

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig" systemctl daemon-reload

systemctl enable kube-proxy.service

systemctl start kube-proxy.service

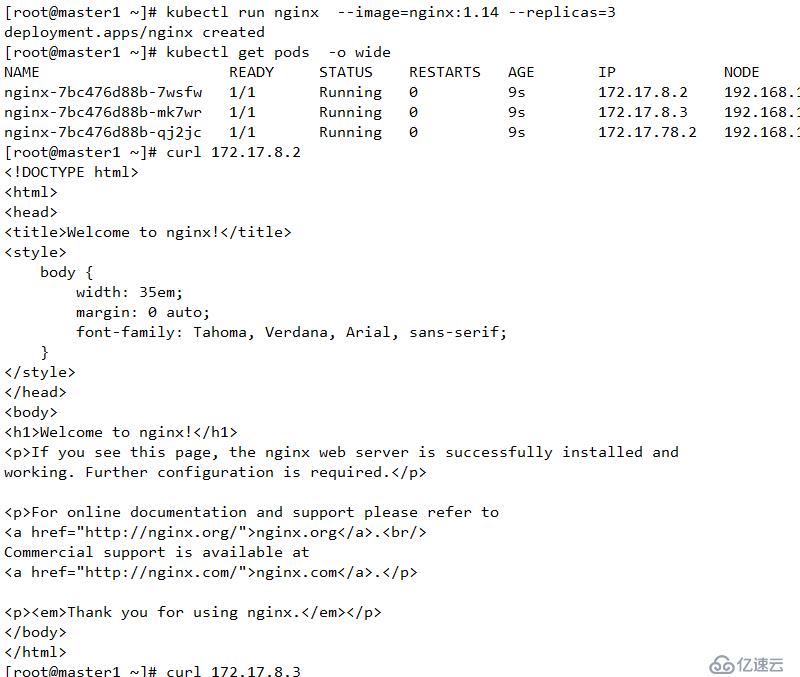

kubectl run nginx --image=nginx:1.14 --replicas=3

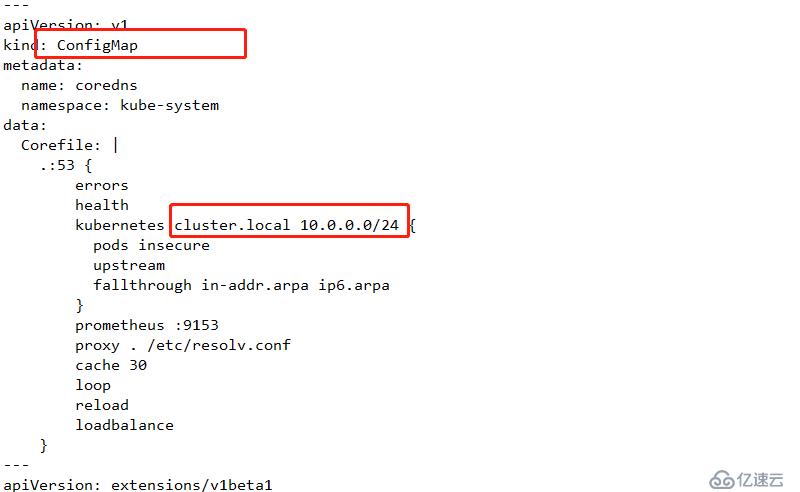

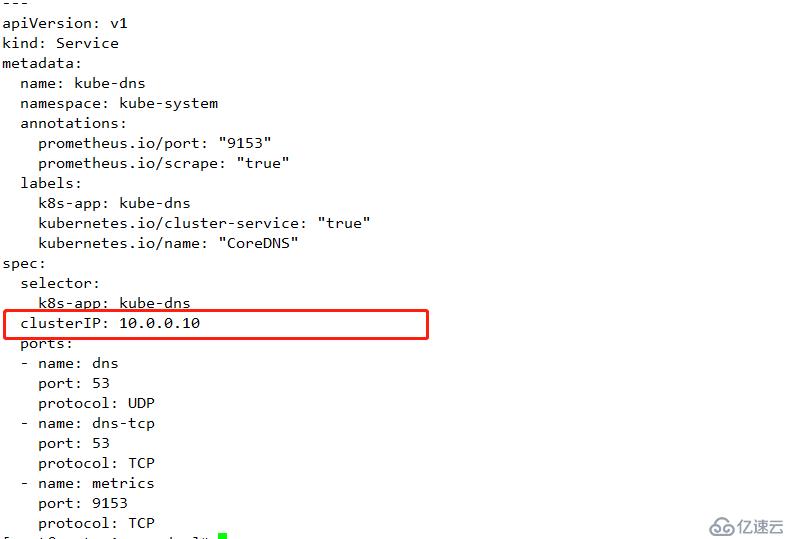

负责为整个集群提供内部DNS解析

mkdir coredns

wget https://raw.githubusercontent.com/coredns/deployment/master/kubernetes/coredns.yaml.sed上面内容及service的IP地址网段:

下面修改成与 /opt/kubernetes/cfg/kubelet.config 中clusterDNS配置的值相同

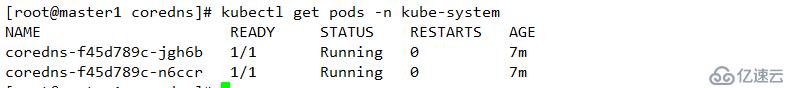

kubectl apply -f coredns.yaml.sed kubectl get pods -n kube-system

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
rules:
- apiGroups:
  - ""
  resources:
  - endpoints
  - services
  - pods
  - namespaces
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health
        kubernetes cluster.local 10.0.0.0/24 { # the Service network range
          pods insecure
          upstream
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        proxy . /etc/resolv.conf
        cache 30
        loop
        reload
        loadbalance
    }
---
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: "CoreDNS"
spec:
  replicas: 2
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      serviceAccountName: coredns
      tolerations:
      - key: "CriticalAddonsOnly"
        operator: "Exists"
      nodeSelector:
        beta.kubernetes.io/os: linux
      containers:
      - name: coredns
        image: coredns/coredns:1.3.0
        imagePullPolicy: IfNotPresent
        resources:
          limits:
            memory: 170Mi
          requests:
            cpu: 100m
            memory: 70Mi
        args: [ "-conf", "/etc/coredns/Corefile" ]
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
          readOnly: true
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        - containerPort: 9153
          name: metrics
          protocol: TCP
        securityContext:
          allowPrivilegeEscalation: false
          capabilities:
            add:
            - NET_BIND_SERVICE
            drop:
            - all
          readOnlyRootFilesystem: true
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
      dnsPolicy: Default
      volumes:
      - name: config-volume
        configMap:
          name: coredns
          items:
          - key: Corefile
            path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: kube-system
  annotations:
    prometheus.io/port: "9153"
    prometheus.io/scrape: "true"
  labels:
    k8s-app: kube-dns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.0.0.10 # the DNS address; it must lie within the Service network
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
    protocol: TCP
  - name: metrics
    port: 9153
    protocol: TCP

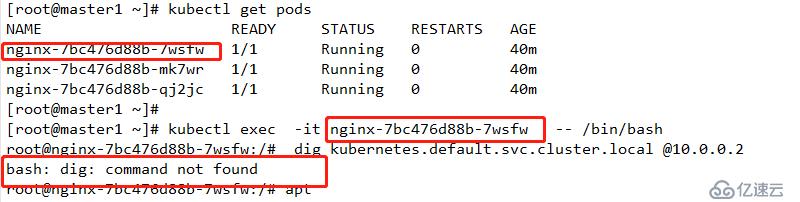

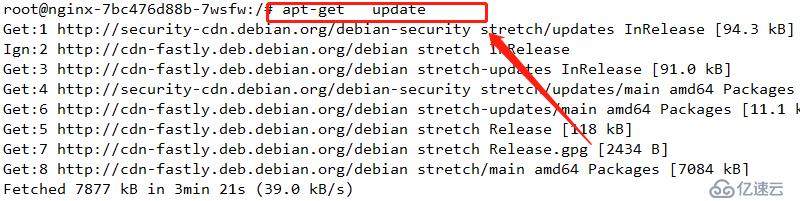

apt-get update

apt-get install dnsutils

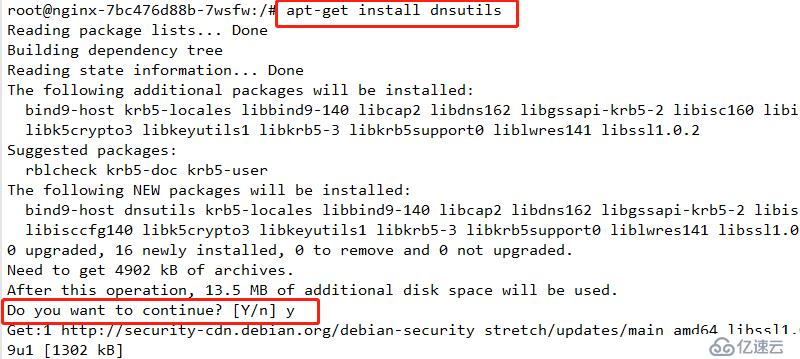

dig kubernetes.default.svc.cluster.local @10.0.0.10

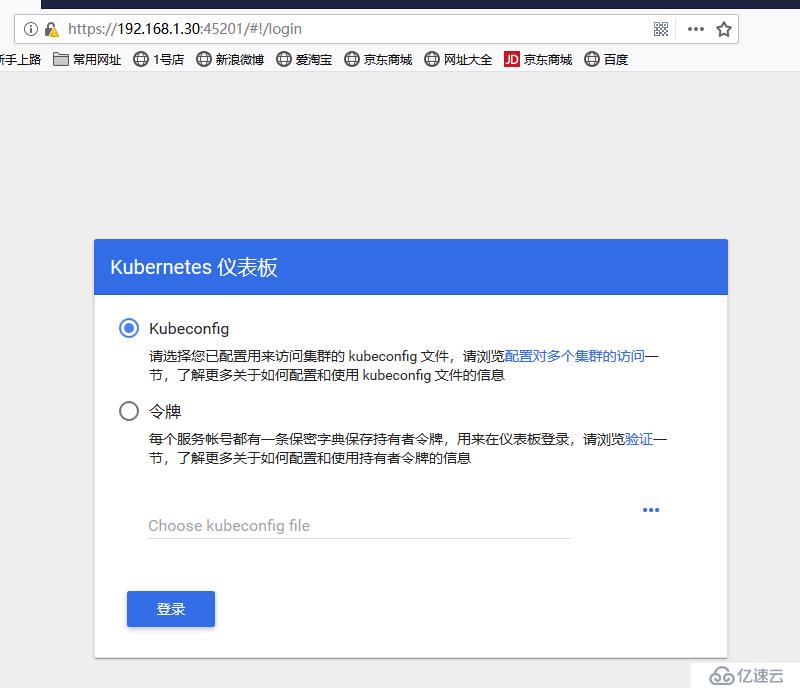

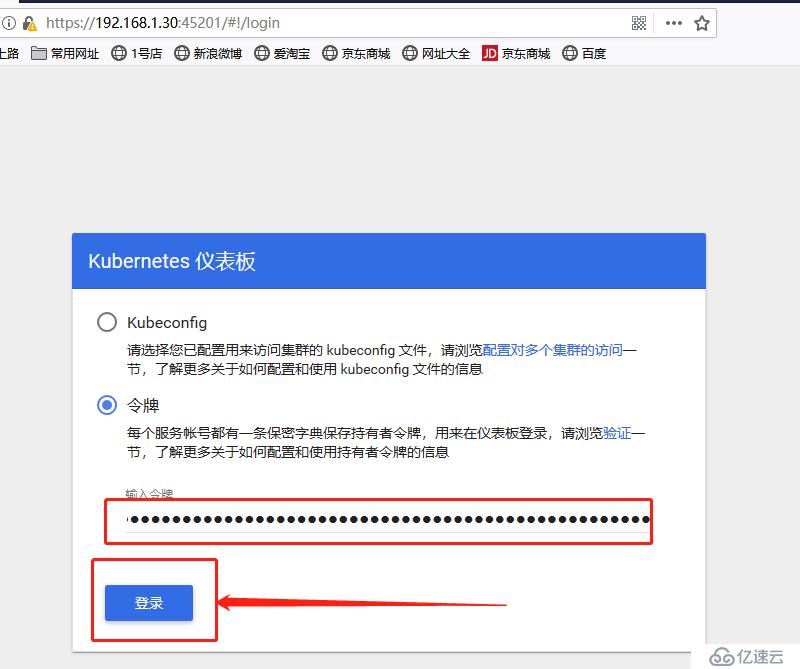

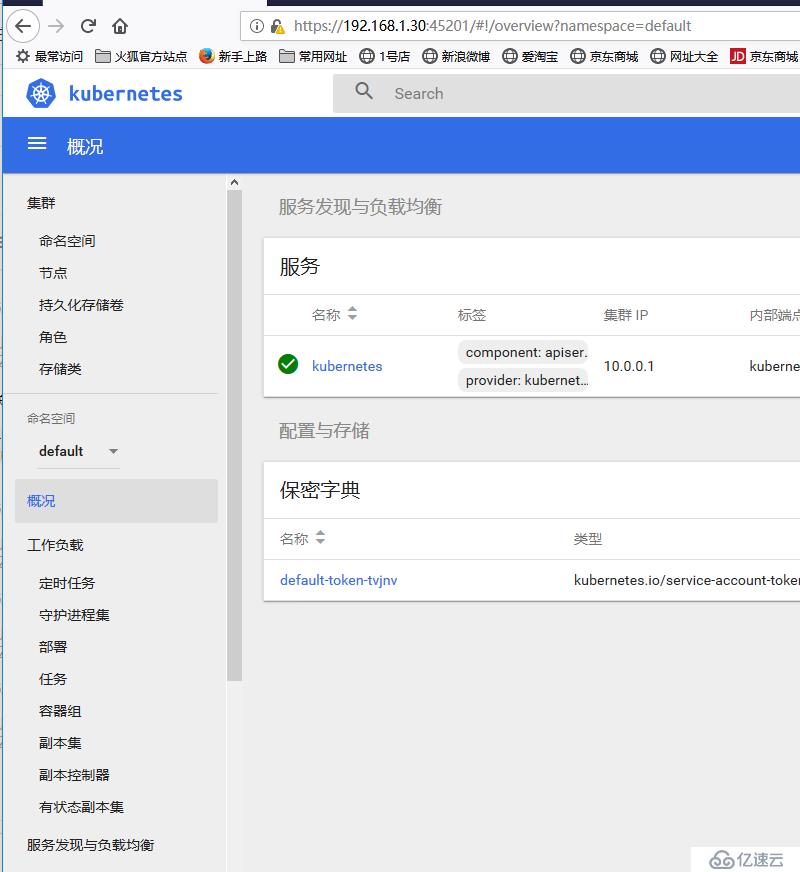

The dashboard provides a graphical interface for the cluster.

mkdir dashboard

cd dashboard/

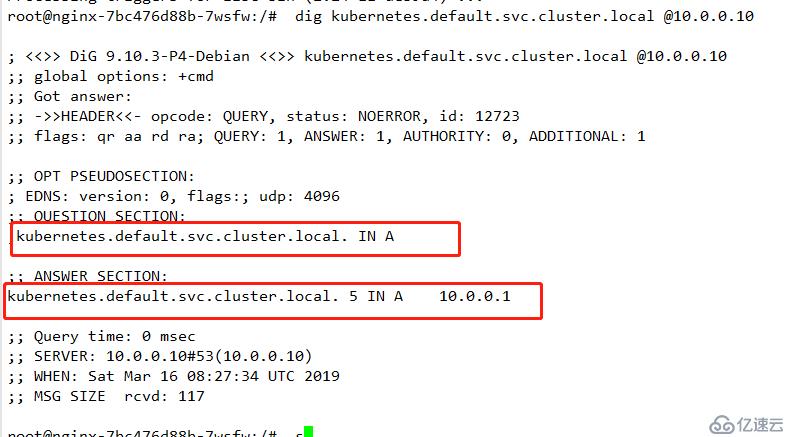

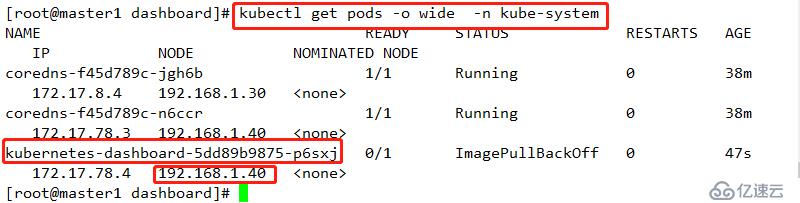

wget https://raw.githubusercontent.com/kubernetes/dashboard/v1.10.1/src/deploy/recommended/kubernetes-dashboard.yaml

kubectl apply -f kubernetes-dashboard.yaml

kubectl get pods -o wide -n kube-system

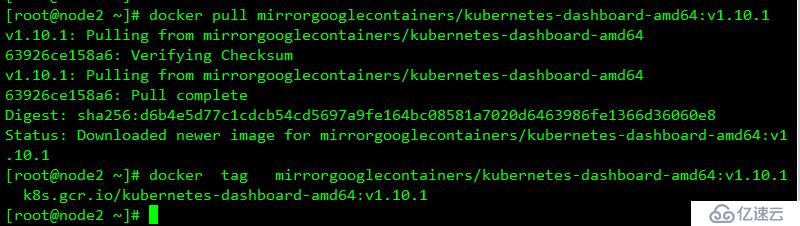

docker pull mirrorgooglecontainers/kubernetes-dashboard-amd64:v1.10.1

docker tag mirrorgooglecontainers/kubernetes-dashboard-amd64:v1.10.1 k8s.gcr.io/kubernetes-dashboard-amd64:v1.10.1

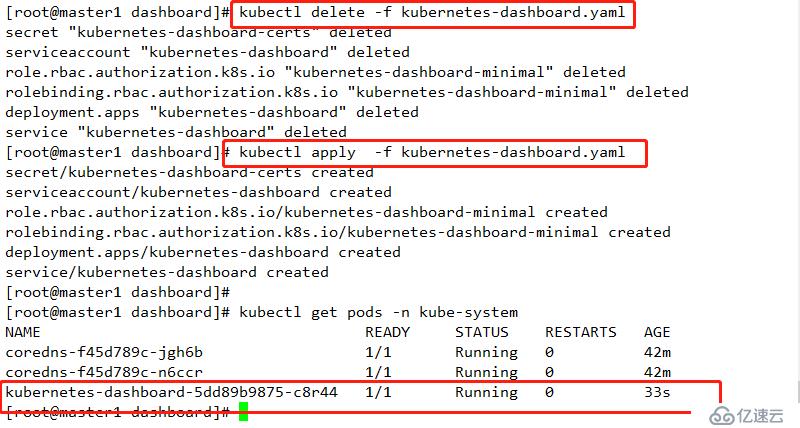

kubectl delete -f kubernetes-dashboard.yaml

kubectl apply -f kubernetes-dashboard.yaml

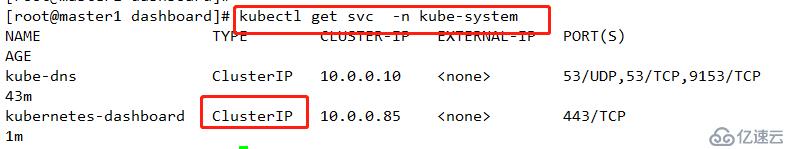

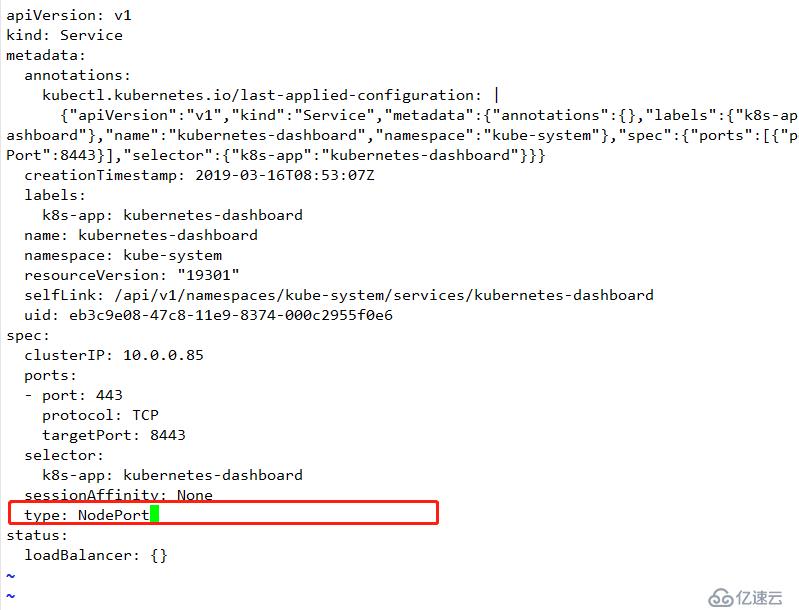

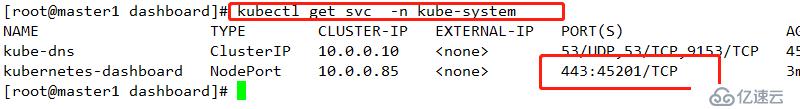

查看模式

kubectl edit svc -n kube-system kubernetes-dashboard

查看结果

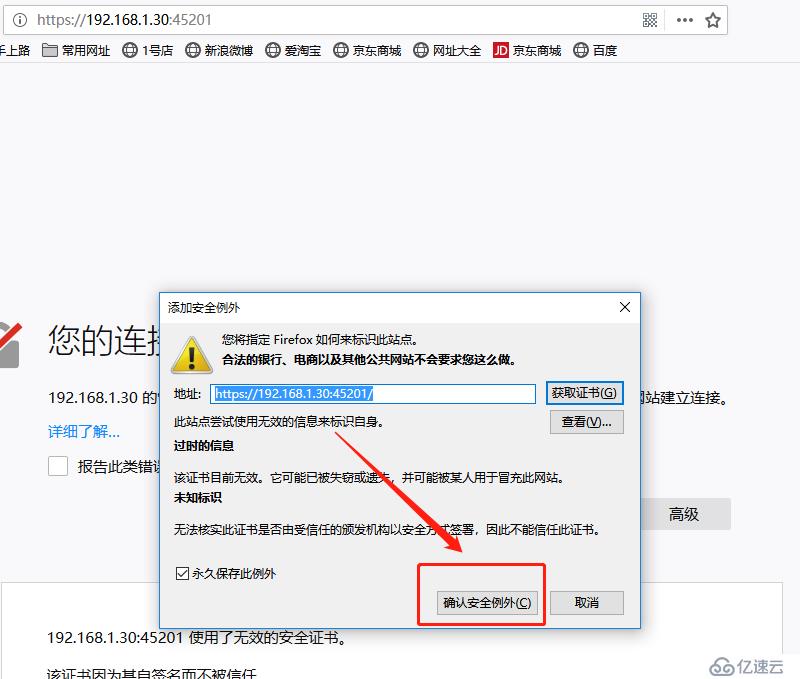

https://192.168.1.30:45201/ # 其必须是https,其次,其端口是上述的端口号添加例外

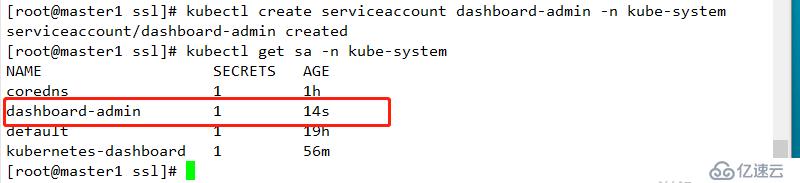

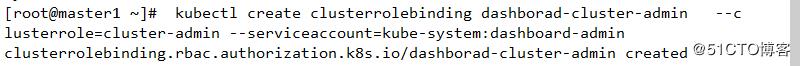

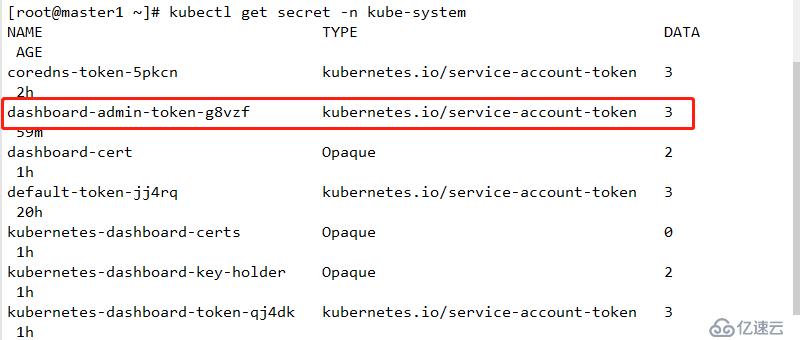

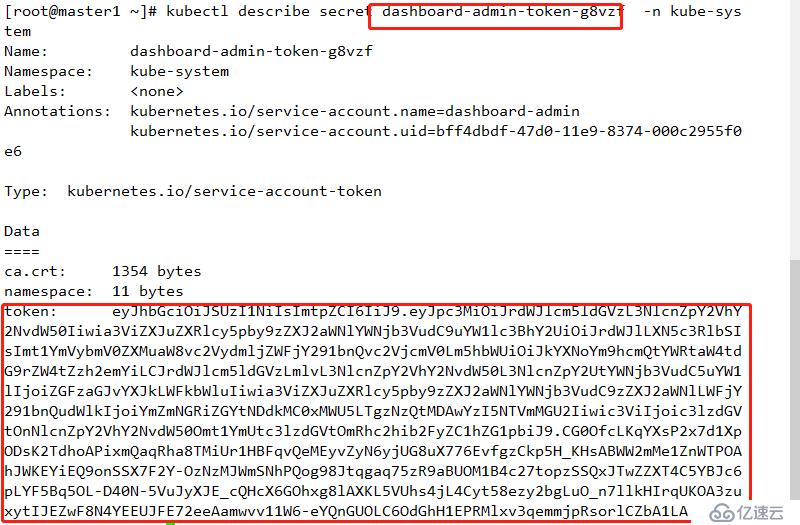

kubectl create serviceaccount dashboard-admin -n kube-system

kubectl create clusterrolebinding dashborad-cluster-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

mkdir ingress

cd ingress

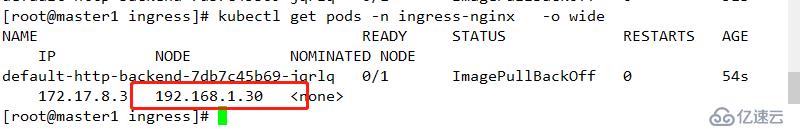

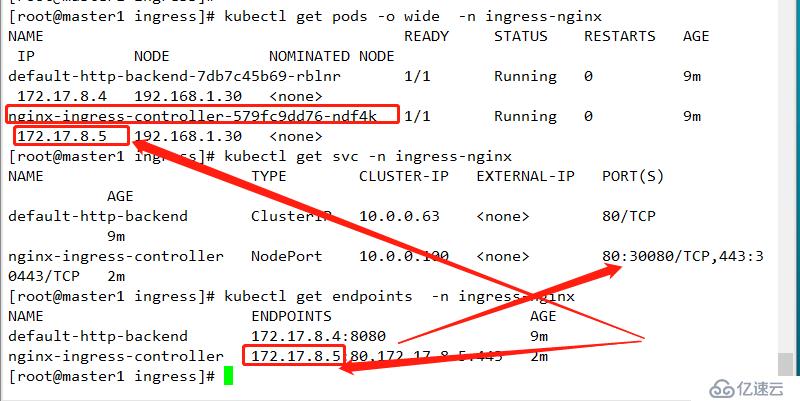

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/nginx-0.20.0/deploy/mandatory.yaml

kubectl apply -f mandatory.yaml

Check which node the pods run on:

kubectl get pods -n ingress-nginx -o wide

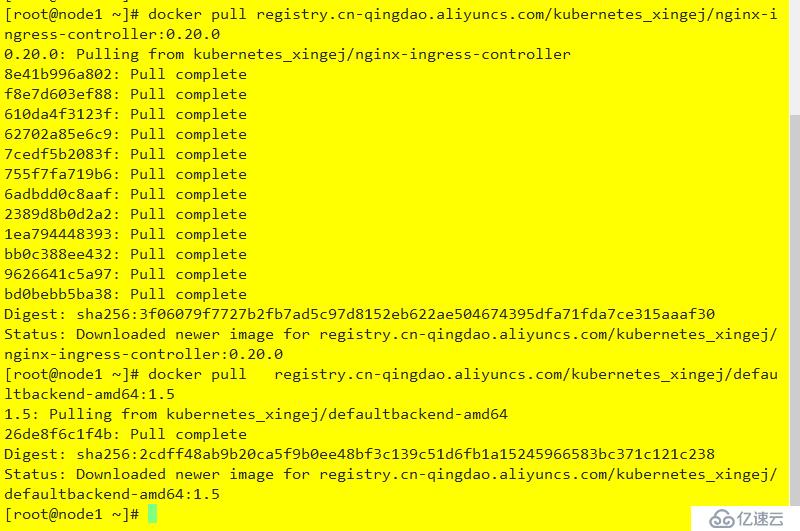

Pull the images on the node where the pods are scheduled:

docker pull registry.cn-qingdao.aliyuncs.com/kubernetes_xingej/nginx-ingress-controller:0.20.0

docker pull registry.cn-qingdao.aliyuncs.com/kubernetes_xingej/defaultbackend-amd64:1.5

docker tag registry.cn-qingdao.aliyuncs.com/kubernetes_xingej/nginx-ingress-controller:0.20.0 quay.io/kubernetes-ingress-controller/nginx-ingress-controller:0.20.0

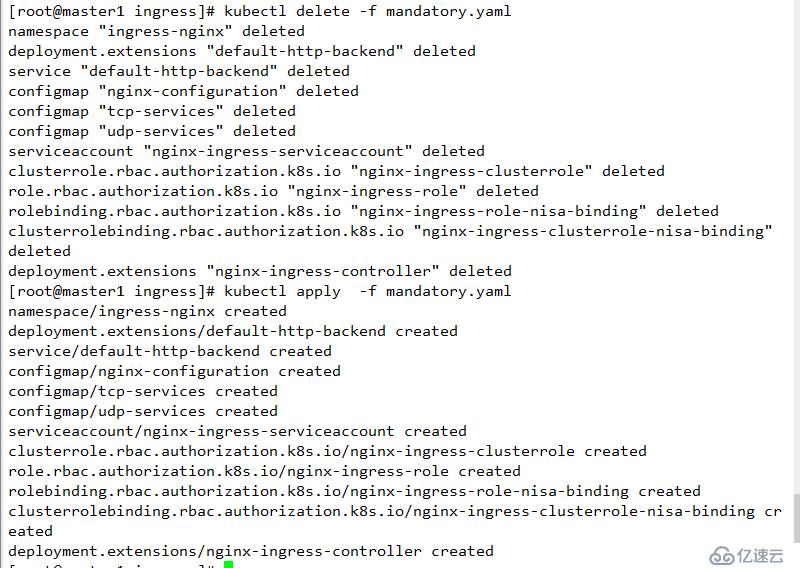

docker tag registry.cn-qingdao.aliyuncs.com/kubernetes_xingej/defaultbackend-amd64:1.5 k8s.gcr.io/defaultbackend-amd64:1.5

Delete and redeploy:

kubectl delete -f mandatory.yaml

kubectl apply -f mandatory.yaml

Check the result:

kubectl get pods -n ingress-nginx

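If the pods cannot be created at this point, the apiserver admission control may be rejecting them. Remove SecurityContextDeny and ServiceAccount from enable-admission-plugins in /opt/kubernetes/cfg/kube-apiserver, restart the apiserver, and deploy again.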

apiVersion: v1
kind: Service
metadata:
  name: nginx-ingress-controller
  namespace: ingress-nginx
spec:
  type: NodePort
  clusterIP: 10.0.0.100
  ports:
  - port: 80
    name: http
    nodePort: 30080
  - port: 443
    name: https
    nodePort: 30443
  selector:
    app.kubernetes.io/name: ingress-nginx

kubectl apply -f service.yaml

Check the result:

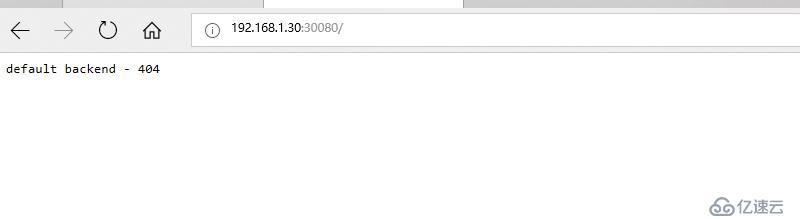

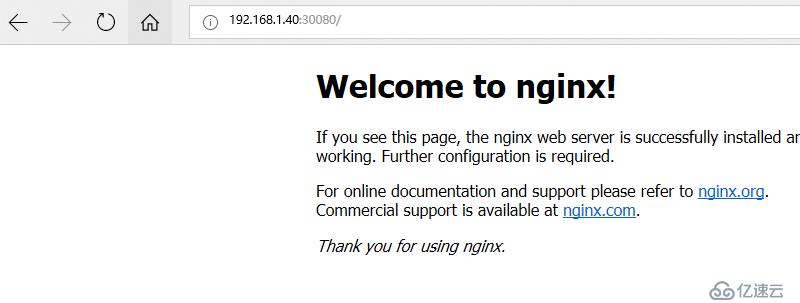

验证

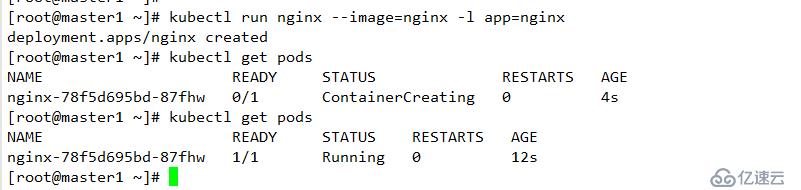

配置默认后端站点

#cat ingress/nginx.yaml

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: default-backend-nginx

namespace: default

spec:

backend:

serviceName: nginx

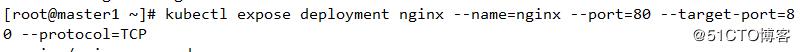

servicePort: 80部署

kubectl apply -f ingress/nginx.yaml 查看

mkdir metrics-server

cd metrics-server/

yum -y install git

git clone https://github.com/kubernetes-incubator/metrics-server.git

#metrics-server-deployment.yaml

---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: metrics-server
  namespace: kube-system
---
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: metrics-server
  namespace: kube-system
  labels:
    k8s-app: metrics-server
spec:
  selector:
    matchLabels:
      k8s-app: metrics-server
  template:
    metadata:
      name: metrics-server
      labels:
        k8s-app: metrics-server
    spec:
      serviceAccountName: metrics-server
      volumes:
      # mount in tmp so we can safely use from-scratch images and/or read-only containers
      - name: tmp-dir
        emptyDir: {}
      containers:
      - name: metrics-server
        image: registry.cn-beijing.aliyuncs.com/minminmsn/metrics-server:v0.3.1
        imagePullPolicy: Always
        volumeMounts:
        - name: tmp-dir
          mountPath: /tmp

#service.yaml
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: metrics-ingress
  namespace: kube-system
  annotations:
    nginx.ingress.kubernetes.io/ingress.class: nginx
    nginx.ingress.kubernetes.io/secure-backends: "true"
    nginx.ingress.kubernetes.io/ssl-passthrough: "true"
spec:
  tls:
  - hosts:
    - metrics.minminmsn.com
    secretName: ingress-secret
  rules:
  - host: metrics.minminmsn.com
    http:
      paths:
      - path: /
        backend:
          serviceName: metrics-server
          servicePort: 443

#/opt/kubernetes/cfg/kube-apiserver

# The seven flags from --requestheader-client-ca-file onward are the ones to add
KUBE_APISERVER_OPTS="--logtostderr=true \
--v=4 \
--etcd-servers=https://192.168.1.10:2379,https://192.168.1.20:2379,https://192.168.1.30:2379 \
--bind-address=192.168.1.10 \
--secure-port=6443 \
--advertise-address=192.168.1.10 \
--allow-privileged=true \
--service-cluster-ip-range=10.0.0.0/24 \
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ResourceQuota,NodeRestriction \
--authorization-mode=RBAC,Node \
--enable-bootstrap-token-auth \
--token-auth-file=/opt/kubernetes/cfg/token.csv \
--service-node-port-range=30000-50000 \
--tls-cert-file=/opt/kubernetes/ssl/server.pem \
--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \
--client-ca-file=/opt/kubernetes/ssl/ca.pem \
--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \
--etcd-cafile=/opt/etcd/ssl/ca.pem \
--etcd-certfile=/opt/etcd/ssl/server.pem \
--etcd-keyfile=/opt/etcd/ssl/server-key.pem \
--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \
--requestheader-allowed-names=aggregator \
--requestheader-extra-headers-prefix=X-Remote-Extra- \
--requestheader-group-headers=X-Remote-Group \
--requestheader-username-headers=X-Remote-User \
--proxy-client-cert-file=/opt/kubernetes/ssl/kube-proxy.pem \
--proxy-client-key-file=/opt/kubernetes/ssl/kube-proxy-key.pem"

Restart the apiserver:

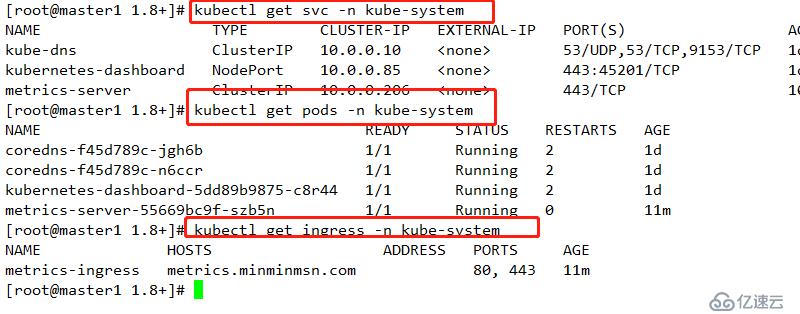
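Before restarting, it can help to confirm that all seven added flags actually made it into the options file. The sketch below only greps the file for each flag name; the helper name `missing_aggregator_flags` is hypothetical, and it does not validate the flag values or the certificates they point to.

```shell
# Hypothetical helper: print every required flag that is absent from FILE.
missing_aggregator_flags() {
  file="$1"
  for flag in \
    --requestheader-client-ca-file \
    --requestheader-allowed-names \
    --requestheader-extra-headers-prefix \
    --requestheader-group-headers \
    --requestheader-username-headers \
    --proxy-client-cert-file \
    --proxy-client-key-file
  do
    # -F: fixed string, -q: quiet, -e: the pattern starts with a dash
    if ! grep -Fq -e "$flag=" "$file"; then
      echo "$flag"
    fi
  done
}
```

Running `missing_aggregator_flags /opt/kubernetes/cfg/kube-apiserver` should print nothing when every flag is present.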

systemctl restart kube-apiserver.service

cd metrics-server/deploy/1.8+/

kubectl apply -f .

Check the configuration:

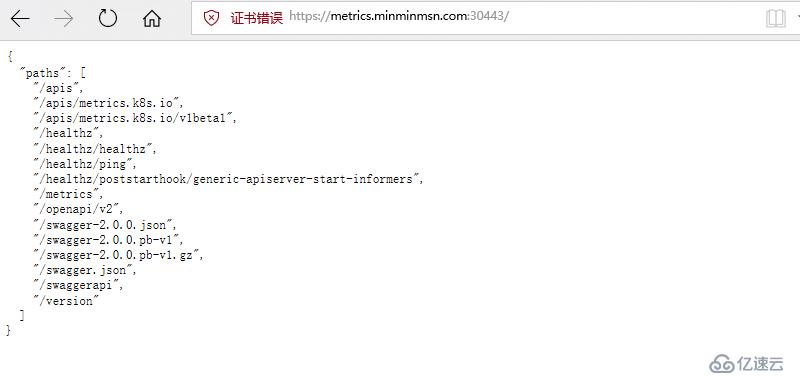

Port 30443 was mapped to port 443 earlier, so access here has to go through HTTPS.

git clone https://github.com/iKubernetes/k8s-prom.git

cd k8s-prom/

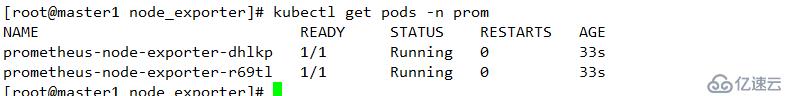

kubectl apply -f namespace.yaml

cd node_exporter/

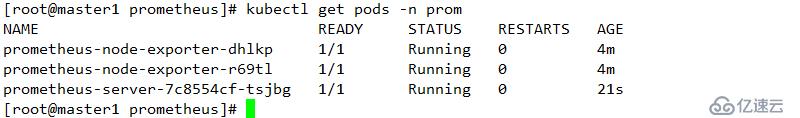

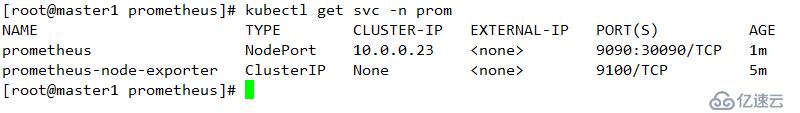

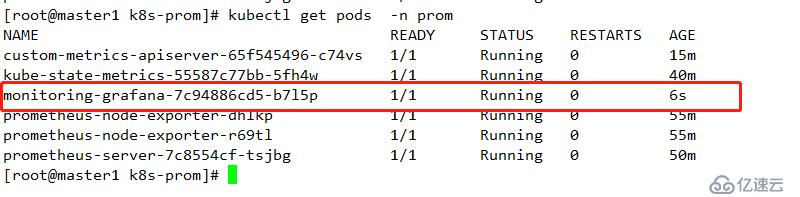

kubectl apply -f .

Check:

kubectl get pods -n prom

cd ../prometheus/

#prometheus-deploy.yaml
# Remove the resource limits from it; the result is as follows
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus-server
  namespace: prom
  labels:
    app: prometheus
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
      component: server
    #matchExpressions:
    #- {key: app, operator: In, values: [prometheus]}
    #- {key: component, operator: In, values: [server]}
  template:
    metadata:
      labels:
        app: prometheus
        component: server
      annotations:
        prometheus.io/scrape: 'false'
    spec:
      serviceAccountName: prometheus
      containers:
      - name: prometheus
        image: prom/prometheus:v2.2.1
        imagePullPolicy: Always
        command:
        - prometheus
        - --config.file=/etc/prometheus/prometheus.yml
        - --storage.tsdb.path=/prometheus
        - --storage.tsdb.retention=720h
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - mountPath: /etc/prometheus/prometheus.yml
          name: prometheus-config
          subPath: prometheus.yml
        - mountPath: /prometheus/
          name: prometheus-storage-volume
      volumes:
      - name: prometheus-config
        configMap:
          name: prometheus-config
          items:
          - key: prometheus.yml
            path: prometheus.yml
            mode: 0644
      - name: prometheus-storage-volume
        emptyDir: {}

# Deploy

kubectl apply -f .

Check:

kubectl get pods -n prom

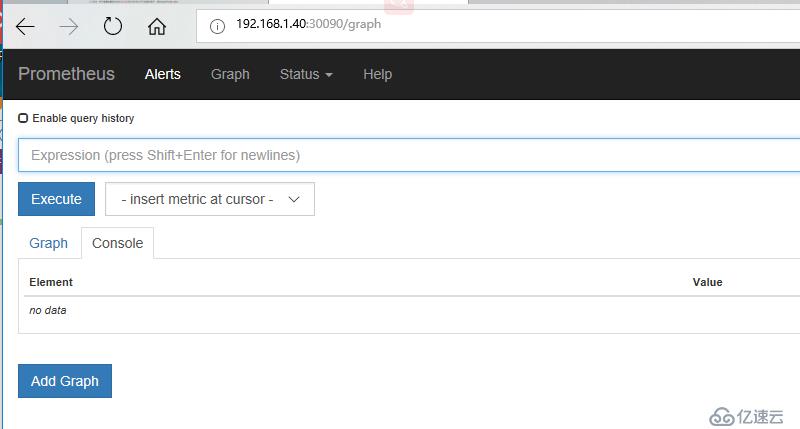

Verify:

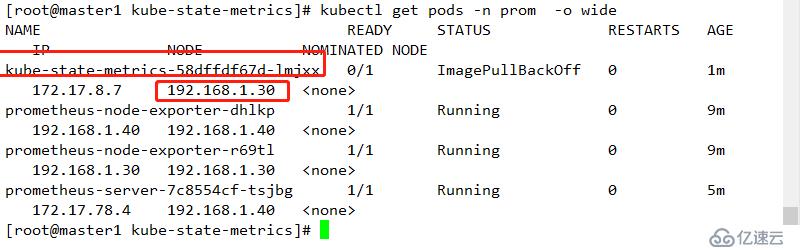

cd ../kube-state-metrics/

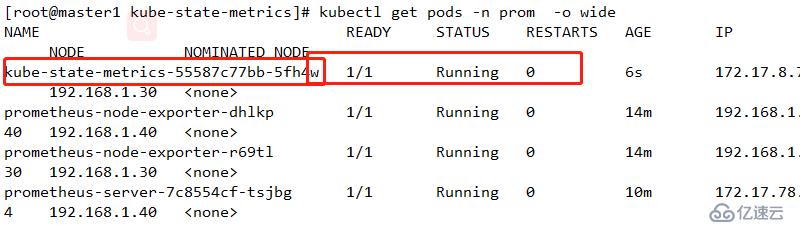

kubectl apply -f .

Check which node the pod was scheduled to, then pull the image on that node:

docker pull quay.io/coreos/kube-state-metrics:v1.3.1

docker tag quay.io/coreos/kube-state-metrics:v1.3.1 gcr.io/google_containers/kube-state-metrics-amd64:v1.3.1

# Redeploy

kubectl delete -f .

kubectl apply -f .

Check:

Prepare the certificates

cd /opt/kubernetes/ssl/

(umask 077;openssl genrsa -out serving.key 2048)

openssl req -new -key serving.key -out serving.csr -subj "/CN=serving"

openssl x509 -req -in serving.csr -CA ./kubelet.crt -CAkey ./kubelet.key -CAcreateserial -out serving.crt -days 3650

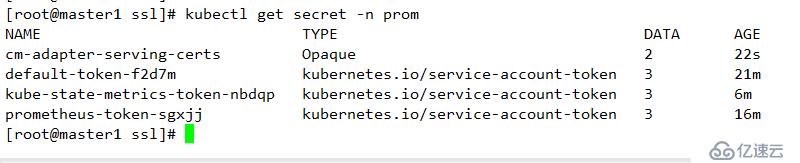
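The three openssl steps above (private key, CSR, CA-signed certificate) can be rehearsed safely against a throwaway CA before touching the real files. The sketch below is an illustration only: the function name, the temporary directory, the `demo-ca` subject, and the one-day validity are all assumptions, and it requires `openssl` on the PATH.

```shell
# Sketch: repeat the key / CSR / sign steps with a throwaway CA in a temp
# directory, then check that the signed certificate chains to that CA.
verify_demo_chain() {
  dir="$(mktemp -d)" || return 1
  (
    cd "$dir" || exit 1
    # Throwaway self-signed CA (stands in for the CA used in the real steps).
    openssl req -x509 -newkey rsa:2048 -nodes -keyout ca.key -out ca.crt \
      -subj "/CN=demo-ca" -days 1 >/dev/null 2>&1 || exit 1
    # Same sequence as above: key, CSR, signed certificate.
    openssl genrsa -out serving.key 2048 >/dev/null 2>&1 || exit 1
    openssl req -new -key serving.key -out serving.csr \
      -subj "/CN=serving" >/dev/null 2>&1 || exit 1
    openssl x509 -req -in serving.csr -CA ca.crt -CAkey ca.key \
      -CAcreateserial -out serving.crt -days 1 >/dev/null 2>&1 || exit 1
    # Reports whether serving.crt chains to ca.crt.
    openssl verify -CAfile ca.crt serving.crt
  )
  status=$?
  rm -rf "$dir"
  return "$status"
}
```

For the real files, the equivalent check would be `openssl verify -CAfile ./kubelet.crt serving.crt`, run from the directory where they were generated.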

kubectl create secret generic cm-adapter-serving-certs --from-file=serving.crt=./serving.crt --from-file=serving.key=./serving.key -n prom

Check:

kubectl get secret -n prom

Deploy the resources

cd k8s-prometheus-adapter/

mv custom-metrics-apiserver-deployment.yaml custom-metrics-apiserver-deployment.yaml.bak

wget https://raw.githubusercontent.com/DirectXMan12/k8s-prometheus-adapter/master/deploy/manifests/custom-metrics-apiserver-deployment.yaml

# Change the namespace to prom

#custom-metrics-apiserver-deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: custom-metrics-apiserver
  name: custom-metrics-apiserver
  namespace: prom
spec:
  replicas: 1
  selector:
    matchLabels:
      app: custom-metrics-apiserver
  template:
    metadata:
      labels:
        app: custom-metrics-apiserver
      name: custom-metrics-apiserver
    spec:
      serviceAccountName: custom-metrics-apiserver
      containers:
      - name: custom-metrics-apiserver
        image: directxman12/k8s-prometheus-adapter-amd64
        args:
        - --secure-port=6443
        - --tls-cert-file=/var/run/serving-cert/serving.crt
        - --tls-private-key-file=/var/run/serving-cert/serving.key
        - --logtostderr=true
        - --prometheus-url=http://prometheus.prom.svc:9090/
        - --metrics-relist-interval=1m
        - --v=10
        - --config=/etc/adapter/config.yaml
        ports:
        - containerPort: 6443
        volumeMounts:
        - mountPath: /var/run/serving-cert
          name: volume-serving-cert
          readOnly: true
        - mountPath: /etc/adapter/
          name: config
          readOnly: true
        - mountPath: /tmp
          name: tmp-vol
      volumes:
      - name: volume-serving-cert
        secret:
          secretName: cm-adapter-serving-certs
      - name: config
        configMap:
          name: adapter-config
      - name: tmp-vol
        emptyDir: {}

wget https://raw.githubusercontent.com/DirectXMan12/k8s-prometheus-adapter/master/deploy/manifests/custom-metrics-config-map.yaml

#修改名称空间

#custom-metrics-config-map.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: adapter-config

namespace: prom

data:

config.yaml: |

rules:

- seriesQuery: '{__name__=~"^container_.*",container_name!="POD",namespace!="",pod_name!=""}'

seriesFilters: []

resources:

overrides:

namespace:

resource: namespace

pod_name:

resource: pod

name:

matches: ^container_(.*)_seconds_total$

as: ""

metricsQuery: sum(rate(<<.Series>>{<<.LabelMatchers>>,container_name!="POD"}[1m])) by (<<.GroupBy>>)

- seriesQuery: '{__name__=~"^container_.*",container_name!="POD",namespace!="",pod_name!=""}'

seriesFilters:

- isNot: ^container_.*_seconds_total$

resources:

overrides:

namespace:

resource: namespace

pod_name:

resource: pod

name:

matches: ^container_(.*)_total$

as: ""

metricsQuery: sum(rate(<<.Series>>{<<.LabelMatchers>>,container_name!="POD"}[1m])) by (<<.GroupBy>>)

- seriesQuery: '{__name__=~"^container_.*",container_name!="POD",namespace!="",pod_name!=""}'

seriesFilters:

- isNot: ^container_.*_total$

resources:

overrides:

namespace:

resource: namespace

pod_name:

resource: pod

name:

matches: ^container_(.*)$

as: ""

metricsQuery: sum(<<.Series>>{<<.LabelMatchers>>,container_name!="POD"}) by (<<.GroupBy>>)

- seriesQuery: '{namespace!="",__name__!~"^container_.*"}'

seriesFilters:

- isNot: .*_total$

resources:

template: <<.Resource>>

name:

matches: ""

as: ""

metricsQuery: sum(<<.Series>>{<<.LabelMatchers>>}) by (<<.GroupBy>>)

- seriesQuery: '{namespace!="",__name__!~"^container_.*"}'

seriesFilters:

- isNot: .*_seconds_total

resources:

template: <<.Resource>>

name:

matches: ^(.*)_total$

as: ""

metricsQuery: sum(rate(<<.Series>>{<<.LabelMatchers>>}[1m])) by (<<.GroupBy>>)

- seriesQuery: '{namespace!="",__name__!~"^container_.*"}'

seriesFilters: []

resources:

template: <<.Resource>>

name:

matches: ^(.*)_seconds_total$

as: ""

metricsQuery: sum(rate(<<.Series>>{<<.LabelMatchers>>}[1m])) by (<<.GroupBy>>)

resourceRules:

cpu:

containerQuery: sum(rate(container_cpu_usage_seconds_total{<<.LabelMatchers>>}[1m])) by (<<.GroupBy>>)

nodeQuery: sum(rate(container_cpu_usage_seconds_total{<<.LabelMatchers>>, id='/'}[1m])) by (<<.GroupBy>>)

resources:

overrides:

instance:

resource: node

namespace:

resource: namespace

pod_name:

resource: pod

containerLabel: container_name

memory:

containerQuery: sum(container_memory_working_set_bytes{<<.LabelMatchers>>}) by (<<.GroupBy>>)

nodeQuery: sum(container_memory_working_set_bytes{<<.LabelMatchers>>,id='/'}) by (<<.GroupBy>>)

resources:

overrides:

instance:

resource: node

namespace:

resource: namespace

pod_name:

resource: pod

containerLabel: container_name

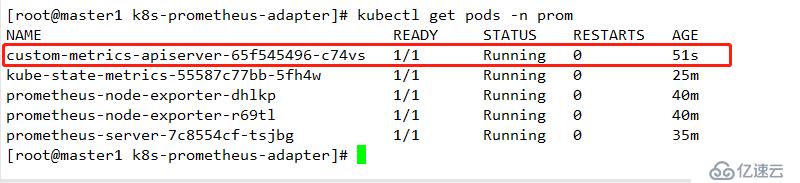

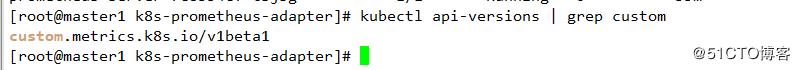

window: 1m部署

kubectl apply -f custom-metrics-config-map.yaml

kubectl apply -f .
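The `name.matches` patterns in the three container rules of the ConfigMap decide what a Prometheus series is called once the adapter exposes it. The sketch below approximates that renaming with `sed`, trying the rules in the same order. It assumes that an empty `as: ""` falls back to the first capture group; the helper name is hypothetical, and the adapter's own matching may differ in edge cases.

```shell
# Hypothetical helper: approximate the exposed name of a container_* series
# under the three container rules, tried in order.
exposed_container_metric() {
  case "$1" in
    container_*_seconds_total)
      echo "$1" | sed -E 's/^container_(.*)_seconds_total$/\1/' ;;
    container_*_total)
      echo "$1" | sed -E 's/^container_(.*)_total$/\1/' ;;
    container_*)
      echo "$1" | sed -E 's/^container_(.*)$/\1/' ;;
    *)
      echo "$1" ;;               # not a container_* series: left unchanged
  esac
}

exposed_container_metric container_cpu_usage_seconds_total   # prints: cpu_usage
exposed_container_metric container_fs_reads_total            # prints: fs_reads
exposed_container_metric container_memory_working_set_bytes  # prints: memory_working_set_bytes
```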

Check:

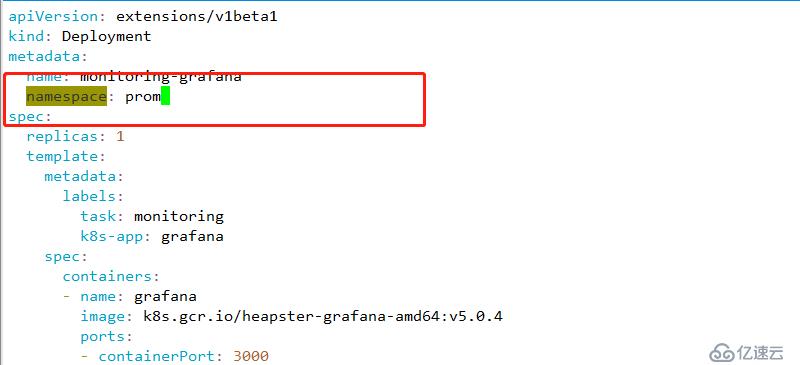
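Once the adapter pod is running, the custom metrics API can also be queried directly through the apiserver. The sketch below only builds the request path; the helper name, the `default` namespace, and the `cpu_usage` metric are placeholders for illustration, and the commented kubectl calls need a working kubectl context.

```shell
# Hypothetical helper: build the custom-metrics API path for one metric
# across all pods in a namespace.
custom_metric_path() {
  ns="$1"; metric="$2"
  printf '/apis/custom.metrics.k8s.io/v1beta1/namespaces/%s/pods/*/%s\n' "$ns" "$metric"
}

custom_metric_path default cpu_usage
# prints: /apis/custom.metrics.k8s.io/v1beta1/namespaces/default/pods/*/cpu_usage

# Example queries (run on a machine with cluster access):
#   kubectl get --raw "/apis/custom.metrics.k8s.io/v1beta1"
#   kubectl get --raw "$(custom_metric_path default cpu_usage)"
```

The first query lists the metrics the adapter has discovered; an empty resource list usually means the adapter cannot reach Prometheus at the configured --prometheus-url.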

wget https://raw.githubusercontent.com/kubernetes-retired/heapster/master/deploy/kube-config/influxdb/grafana.yaml1 修改名称空间

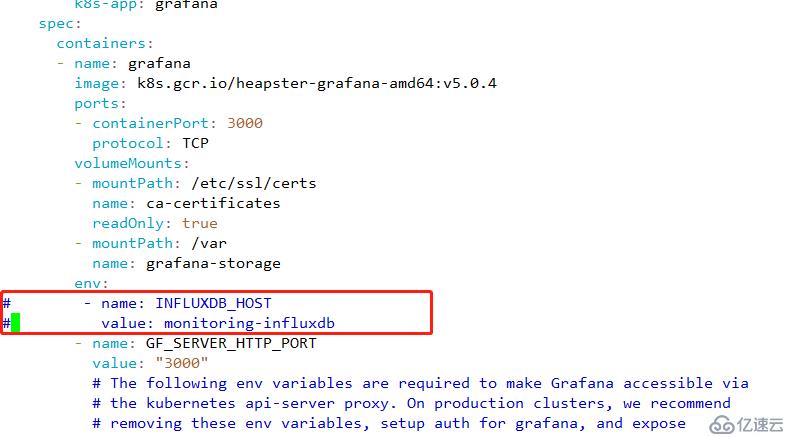

2 修改器默认使用的存储

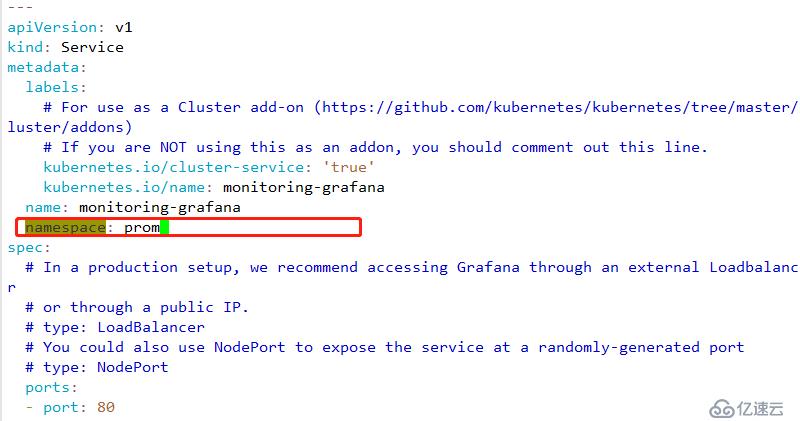

3 修改service名称空间

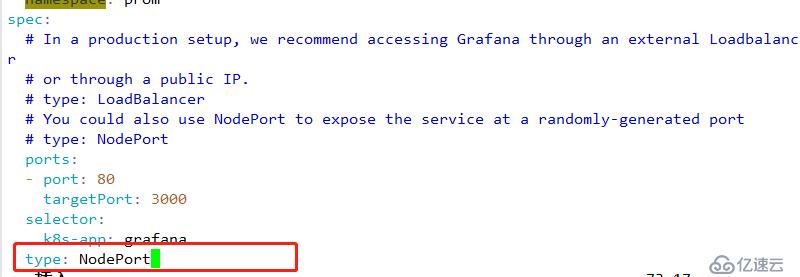

4 修改nodeport 以供外网访问

5 配置文件如下:

#grafana.yaml
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: monitoring-grafana
  namespace: prom
spec:
  replicas: 1
  template:
    metadata:
      labels:
        task: monitoring
        k8s-app: grafana
    spec:
      containers:
      - name: grafana
        image: registry.cn-hangzhou.aliyuncs.com/google_containers/heapster-grafana-amd64:v5.0.4
        ports:
        - containerPort: 3000
          protocol: TCP
        volumeMounts:
        - mountPath: /etc/ssl/certs
          name: ca-certificates
          readOnly: true
        - mountPath: /var
          name: grafana-storage
        env:
        # - name: INFLUXDB_HOST
        #   value: monitoring-influxdb
        - name: GF_SERVER_HTTP_PORT
          value: "3000"
          # The following env variables are required to make Grafana accessible via
          # the kubernetes api-server proxy. On production clusters, we recommend
          # removing these env variables, setup auth for grafana, and expose the grafana
          # service using a LoadBalancer or a public IP.
        - name: GF_AUTH_BASIC_ENABLED
          value: "false"
        - name: GF_AUTH_ANONYMOUS_ENABLED
          value: "true"
        - name: GF_AUTH_ANONYMOUS_ORG_ROLE
          value: Admin
        - name: GF_SERVER_ROOT_URL
          # If you're only using the API Server proxy, set this value instead:
          # value: /api/v1/namespaces/kube-system/services/monitoring-grafana/proxy
          value: /
      volumes:
      - name: ca-certificates
        hostPath:
          path: /etc/ssl/certs
      - name: grafana-storage
        emptyDir: {}
---
apiVersion: v1
kind: Service
metadata:
  labels:
    # For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)
    # If you are NOT using this as an addon, you should comment out this line.
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: monitoring-grafana
  name: monitoring-grafana
  namespace: prom
spec:
  # In a production setup, we recommend accessing Grafana through an external Loadbalancer
  # or through a public IP.
  # type: LoadBalancer
  # You could also use NodePort to expose the service at a randomly-generated port
  # type: NodePort
  ports:
  - port: 80
    targetPort: 3000
  selector:
    k8s-app: grafana
  type: NodePort

kubectl apply -f grafana.yaml

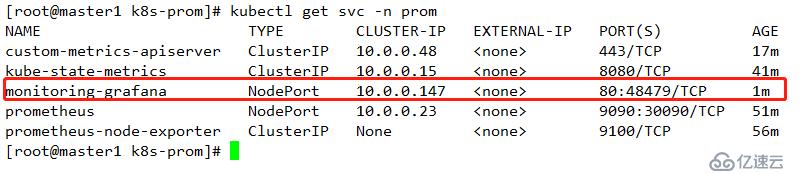

Check which node it is running on

Check the port it is mapped to

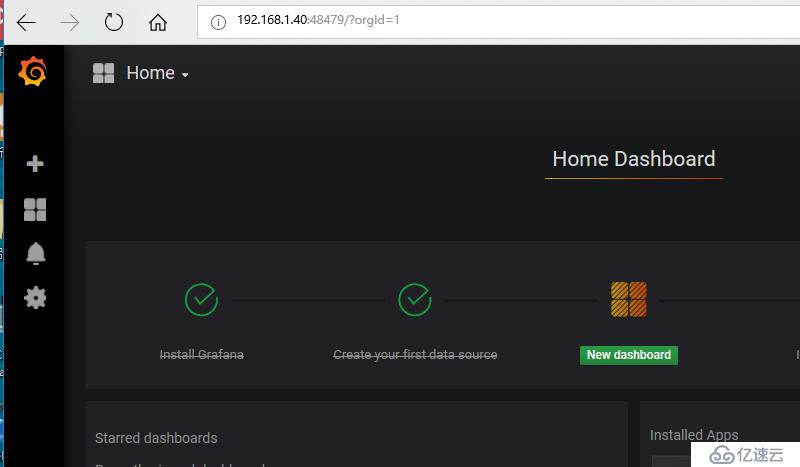

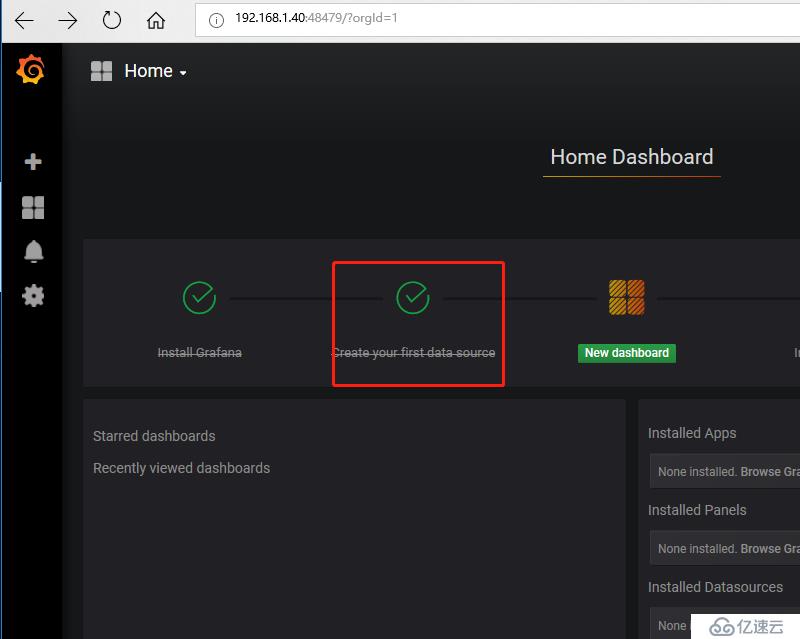

Check:

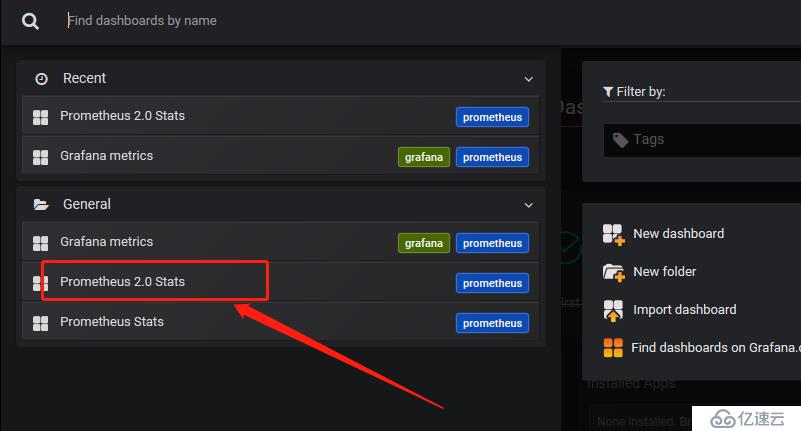

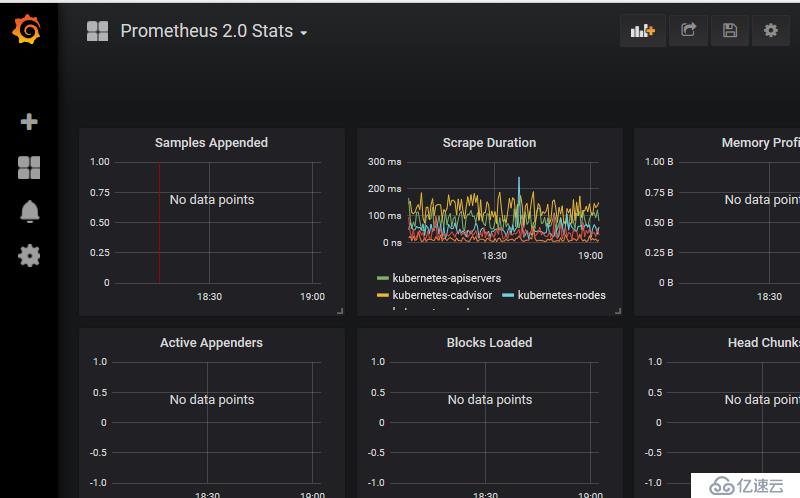

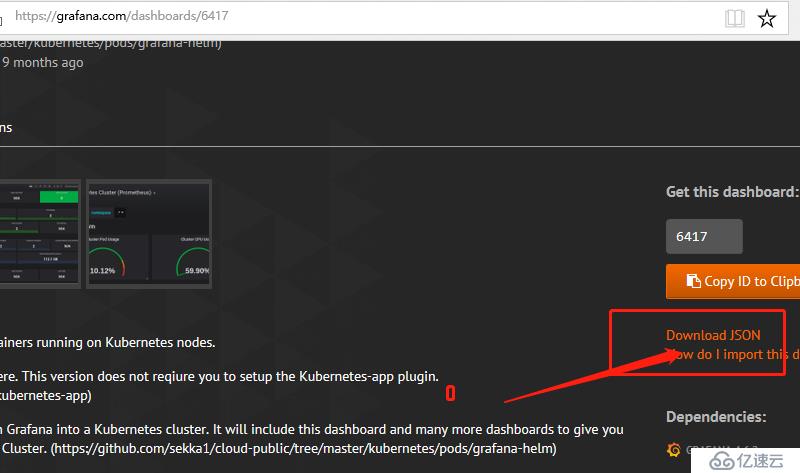

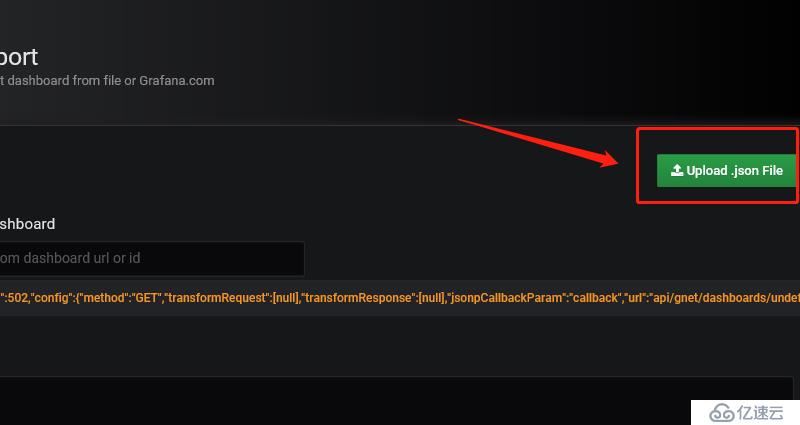

1 Location of the dashboard template; download it from here:

https://grafana.com/dashboards/6417

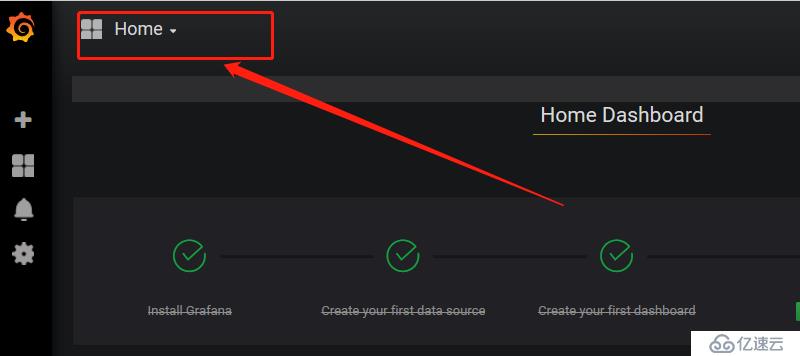

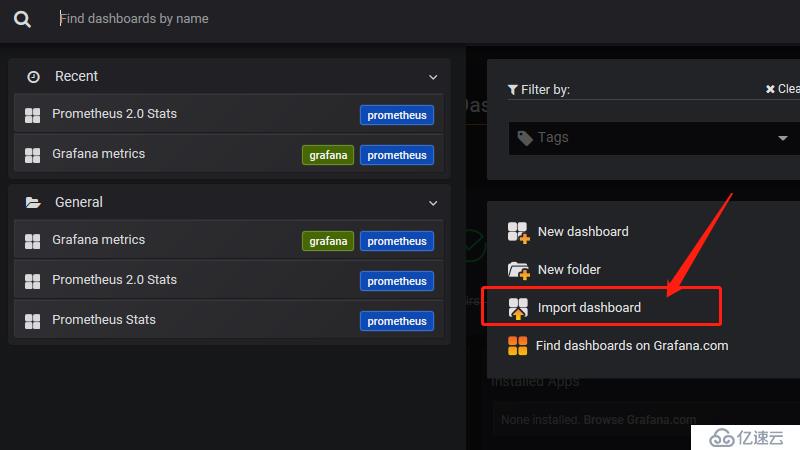

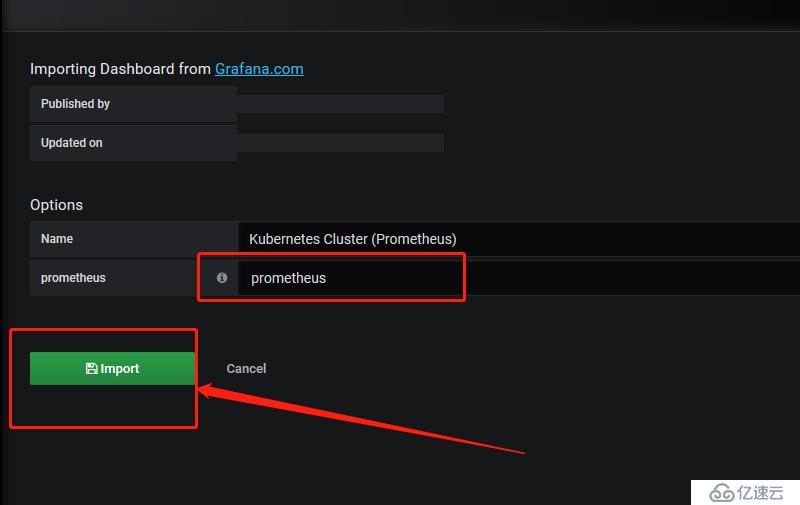

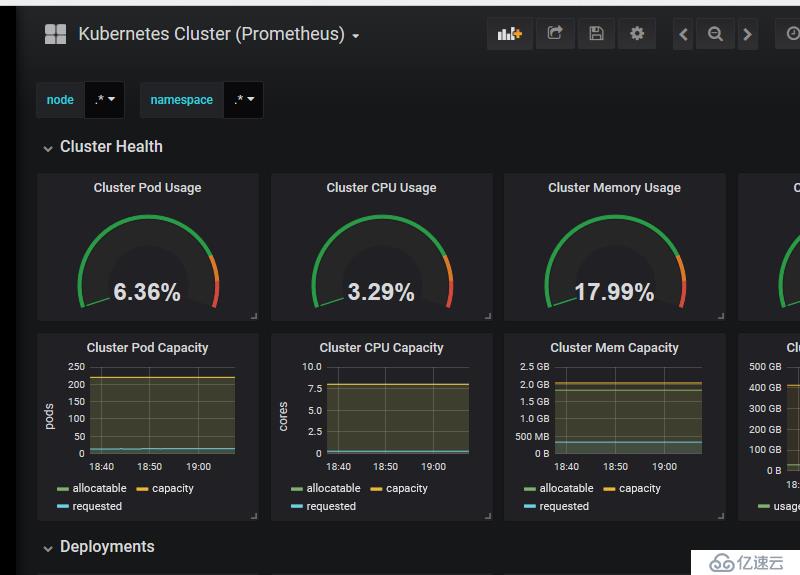

2 Import the downloaded template
