This article shows how Spark reads an HBase table through Phoenix, and covers a ZooKeeper connection error encountered along the way together with its fix.

I. Scenario:

Spark reads the HBase table through Phoenix. Put plainly, the client must first establish a connection to ZooKeeper.

II. Code:

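    // ZooKeeper quorum for Phoenix: comma-separated hosts followed by the client port
    val zkUrl = "192.168.100.39,192.168.100.40,192.168.100.41:2181"
    val formatStr = "org.apache.phoenix.spark"
    // `spark` is an existing SparkSession
    val oms_orderinfoDF = spark.read.format(formatStr)
      .options(Map("table" -> "oms_orderinfo", "zkUrl" -> zkUrl))
      .load()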

III. Spark job log:

    17/10/24 03:25:25 INFO zookeeper.ClientCnxn: Opening socket connection to server hadoop40/192.168.100.40:2181. Will not attempt to authenticate using SASL (unknown error)
    17/10/24 03:25:25 INFO zookeeper.ClientCnxn: Socket connection established, initiating session, client: /192.168.100.48:35952, server: hadoop40/192.168.100.40:2181
    17/10/24 03:25:25 WARN zookeeper.ClientCnxn: Session 0x0 for server hadoop40/192.168.100.40:2181, unexpected error, closing socket connection and attempting reconnect
    java.io.IOException: Connection reset by peer
        at sun.nio.ch.FileDispatcherImpl.read0(Native Method)
        at sun.nio.ch.SocketDispatcher.read(SocketDispatcher.java:39)
        at sun.nio.ch.IOUtil.readIntoNativeBuffer(IOUtil.java:223)
        at sun.nio.ch.IOUtil.read(IOUtil.java:192)
        at sun.nio.ch.SocketChannelImpl.read(SocketChannelImpl.java:380)
        at org.apache.phoenix.shaded.org.apache.zookeeper.ClientCnxnSocketNIO.doIO(ClientCnxnSocketNIO.java:68)
        at org.apache.phoenix.shaded.org.apache.zookeeper.ClientCnxnSocketNIO.doTransport(ClientCnxnSocketNIO.java:355)
        at org.apache.phoenix.shaded.org.apache.zookeeper.ClientCnxn$SendThread.run(ClientCnxn.java:1081)
    17/10/24 03:25:25 INFO yarn.ApplicationMaster: Deleting staging directory hdfs://nameservice1/user/hdfs/.sparkStaging/application_1507703377455_4854
    17/10/24 03:25:25 INFO util.ShutdownHookManager: Shutdown hook called

IV. ZooKeeper log:

    2017-10-24 03:25:22,498 WARN org.apache.zookeeper.server.NIOServerCnxnFactory: Too many connections from /192.168.100.40 - max is 500
    2017-10-24 03:25:25,588 WARN org.apache.zookeeper.server.NIOServerCnxnFactory: Too many connections from /192.168.100.40 - max is 500
    2017-10-24 03:25:25,819 WARN org.apache.zookeeper.server.NIOServerCnxnFactory: Too many connections from /192.168.100.40 - max is 500
    2017-10-24 03:25:26,092 WARN org.apache.zookeeper.server.NIOServerCnxn: caught end of stream exception
    EndOfStreamException: Unable to read additional data from client sessionid 0x15ed091ee09897d, likely client has closed socket
        at org.apache.zookeeper.server.NIOServerCnxn.doIO(NIOServerCnxn.java:231)
        at org.apache.zookeeper.server.NIOServerCnxnFactory.run(NIOServerCnxnFactory.java:208)
        at java.lang.Thread.run(Thread.java:745)
    2017-10-24 03:25:26,092 INFO org.apache.zookeeper.server.NIOServerCnxn: Closed socket connection for client /192.168.100.40:47084 which had sessionid 0x15ed091ee09897d
    2017-10-24 03:25:26,092 WARN org.apache.zookeeper.server.NIOServerCnxn: caught end of stream exception
    EndOfStreamException: Unable to read additional data from client sessionid 0x15ed091ee098981, likely client has closed socket
        at org.apache.zookeeper.server.NIOServerCnxn.doIO(NIOServerCnxn.java:231)
        at org.apache.zookeeper.server.NIOServerCnxnFactory.run(NIOServerCnxnFactory.java:208)
        at java.lang.Thread.run(Thread.java:745)
    2017-10-24 03:25:26,093 INFO org.apache.zookeeper.server.NIOServerCnxn: Closed socket connection for client /192.168.100.40:47304 which had sessionid 0x15ed091ee098981
    2017-10-24 03:25:26,093 WARN org.apache.zookeeper.server.NIOServerCnxn: caught end of stream exception
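When the ZooKeeper log is long, it can help to tally the "Too many connections" warnings per client address to see which host is hitting the limit. The following is only a rough sketch: the `ZkRejections` object and its `summarize` method are hypothetical helpers written for this article, not part of ZooKeeper or Phoenix, and the regular expression assumes the warning format shown in the log excerpt.

```scala
import scala.util.matching.Regex

// Hypothetical helper (not a ZooKeeper or Phoenix API): counts rejection
// warnings per client address in ZooKeeper server log lines.
object ZkRejections {
  // Assumes the warning format seen in the log excerpt; captures the client
  // address and the per-address limit reported by the server.
  private val Rejected: Regex =
    """Too many connections from /(\S+) - max is (\d+)""".r.unanchored

  /** Returns client address -> (number of rejections, reported limit). */
  def summarize(lines: Seq[String]): Map[String, (Int, Int)] =
    lines
      .collect { case Rejected(ip, max) => (ip, max.toInt) }
      .groupBy(_._1)
      .map { case (ip, hits) => (ip, (hits.size, hits.head._2)) }

  def main(args: Array[String]): Unit = {
    val prefix = "WARN org.apache.zookeeper.server.NIOServerCnxnFactory: "
    val warning = "Too many connections from /192.168.100.40 - max is 500"
    val sample = Seq(
      s"2017-10-24 03:25:22,498 $prefix$warning",
      s"2017-10-24 03:25:25,588 $prefix$warning",
      s"2017-10-24 03:25:25,819 $prefix$warning"
    )
    summarize(sample).foreach { case (ip, (count, max)) =>
      println(s"$ip: $count rejected, limit $max")
    }
    // prints "192.168.100.40: 3 rejected, limit 500"
  }
}
```

In practice the lines would be read from the server's log file; the sample here just reuses the three warnings from the excerpt.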

V. Solution:

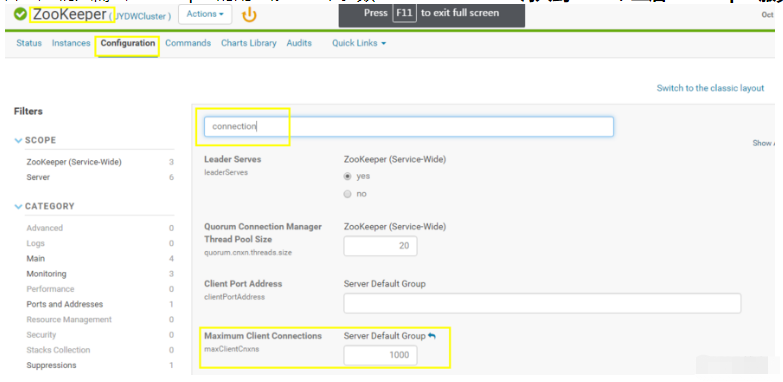

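The ZooKeeper log shows the server rejecting connections from 192.168.100.40 because the per-client limit of 500 had been reached. Raise maxClientCnxns to 1000 and restart the ZooKeeper service so that the new value takes effect.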
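On a plain ZooKeeper installation this property normally lives in `zoo.cfg`. A minimal fragment, assuming the server reads its configuration from that file (managed distributions usually expose the same property through their admin console instead, and the file location depends on the installation):

```properties
# zoo.cfg
# Maximum number of concurrent connections a single client IP may open
# to one ZooKeeper server.
maxClientCnxns=1000
```

The limit applies per client IP address, so several processes running on the same host share it.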
