Background

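While using happybase to read from and write to HBase through the Thrift interface, a Broken pipe error occurred. Troubleshooting started with the Thrift server log: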

Java HotSpot(TM) 64-Bit Server VM warning: Using incremental CMS is deprecated and will likely be removed in a future release

17/05/12 18:08:41 INFO util.VersionInfo: HBase 1.2.0-cdh6.10.1

17/05/12 18:08:41 INFO util.VersionInfo: Source code repository file:///data/jenkins/workspace/generic-package-centos64-7-0/topdir/BUILD/hbase-1.2.0-cdh6.10.1 revision=Unknown

17/05/12 18:08:41 INFO util.VersionInfo: Compiled by jenkins on Mon Mar 20 02:46:09 PDT 2017

17/05/12 18:08:41 INFO util.VersionInfo: From source with checksum c6d9864e1358df7e7f39d39a40338b4e

17/05/12 18:08:41 INFO thrift.ThriftServerRunner: Using default thrift server type

17/05/12 18:08:41 INFO thrift.ThriftServerRunner: Using thrift server type threadpool

17/05/12 18:08:42 WARN impl.MetricsConfig: Cannot locate configuration: tried hadoop-metrics2-hbase.properties,hadoop-metrics2.properties

17/05/12 18:08:42 INFO impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).

17/05/12 18:08:42 INFO impl.MetricsSystemImpl: HBase metrics system started

17/05/12 18:08:42 INFO mortbay.log: Logging to org.slf4j.impl.Log4jLoggerAdapter(org.mortbay.log) via org.mortbay.log.Slf4jLog

17/05/12 18:08:42 INFO http.HttpRequestLog: Http request log for http.requests.thrift is not defined

17/05/12 18:08:42 INFO http.HttpServer: Added global filter 'safety' (class=org.apache.hadoop.hbase.http.HttpServer$QuotingInputFilter)

17/05/12 18:08:42 INFO http.HttpServer: Added global filter 'clickjackingprevention' (class=org.apache.hadoop.hbase.http.ClickjackingPreventionFilter)

17/05/12 18:08:42 INFO http.HttpServer: Added filter static_user_filter (class=org.apache.hadoop.hbase.http.lib.StaticUserWebFilter$StaticUserFilter) to context thrift

17/05/12 18:08:42 INFO http.HttpServer: Added filter static_user_filter (class=org.apache.hadoop.hbase.http.lib.StaticUserWebFilter$StaticUserFilter) to context static

17/05/12 18:08:42 INFO http.HttpServer: Added filter static_user_filter (class=org.apache.hadoop.hbase.http.lib.StaticUserWebFilter$StaticUserFilter) to context logs

17/05/12 18:08:42 INFO http.HttpServer: Jetty bound to port 9095

17/05/12 18:08:42 INFO mortbay.log: jetty-6.1.26.cloudera.4

17/05/12 18:08:42 WARN mortbay.log: Can't reuse /tmp/Jetty_0_0_0_0_9095_thrift____.vqpz9l, using /tmp/Jetty_0_0_0_0_9095_thrift____.vqpz9l_5120175032480185058

17/05/12 18:08:43 INFO mortbay.log: Started SelectChannelConnector@0.0.0.0:9095

17/05/12 18:08:43 INFO thrift.ThriftServerRunner: starting TBoundedThreadPoolServer on /0.0.0.0:9090 with readTimeout 300000ms; min worker threads=128, max worker threads=1000, max queued requests=1000

.

.

.

/05/08 15:05:51 INFO zookeeper.RecoverableZooKeeper: Process identifier=hconnection-0x645132bf connecting to ZooKeeper ensemble=cdh-master-slave1:2181,cdh-slave2:2181,cdh-slave3:2181

17/05/08 15:05:51 INFO zookeeper.ZooKeeper: Initiating client connection, connectString=cdh-master-slave1:2181,cdh-slave2:2181,cdh-slave3:2181 sessionTimeout=60000 watcher=hconnection-0x64513-master-slave1:2181,cdh-slave2:2181,cdh-slave3:2181, baseZNode=/hbase

17/05/08 15:05:51 INFO zookeeper.ClientCnxn: Opening socket connection to server cdh-slave3/192.168.10.219:2181. Will not attempt to authenticate using SASL (unknown error)

17/05/08 15:05:51 INFO zookeeper.ClientCnxn: Socket connection established, initiating session, client: /192.168.10.23:43170, server: cdh-slave3/192.168.10.219:2181

17/05/08 15:05:51 INFO zookeeper.ClientCnxn: Session establishment complete on server cdh-slave3/192.168.10.219:2181, sessionid = 0x35bd74a77802148, negotiated timeout = 60000

[caitinggui@cdh-master-slave1 example]$ 17/05/08 15:32:50 INFO client.ConnectionManager$HConnectionImplementation: Closing zookeeper sessionid=0x35bd74a77802148

17/05/08 15:32:51 INFO zookeeper.ZooKeeper: Session: 0x35bd74a77802148 closed

17/05/08 15:32:51 INFO zookeeper.ClientCnxn: EventThread shut down

17/05/08 15:38:53 INFO zookeeper.RecoverableZooKeeper: Process identifier=hconnection-0xb876351 connecting to ZooKeeper ensemble=cdh-master-slave1:2181,cdh-slave2:2181,cdh-slave3:2181

17/05/08 15:38:53 INFO zookeeper.ZooKeeper: Initiating client connection, connectString=cdh-master-slave1:2181,cdh-slave2:2181,cdh-slave3:2181 sessionTimeout=60000 watcher=hconnection-0xb8763510x0, quorum=cdh-master-slave1:2181,cdh-slave2:2181,cdh-slave3:2181, baseZNode=/hbase

17/05/08 15:38:53 INFO zookeeper.ClientCnxn: Opening socket connection to server cdh-master-slave1/192.168.10.23:2181. Will not attempt to authenticate using SASL (unknown error)

17/05/08 15:38:53 INFO zookeeper.ClientCnxn: Socket connection established, initiating session, client: /192.168.10.23:35526, server: cdh-master-slave1/192.168.10.23:2181

17/05/08 15:38:53 INFO zookeeper.ClientCnxn: Session establishment complete on server cdh-master-slave1/192.168.10.23:2181, sessionid = 0x15ba3ddc6cc90d4, negotiated timeout = 60000

The first guess is that HBase has some timeout configured that causes the connection to be dropped.
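If a server-side timeout is what closes the socket, one client-side way to cope is to reopen the connection and retry when the write fails. The following is a minimal sketch under stated assumptions, not happybase's own API: `call_with_reconnect`, `connect`, and `operation` are hypothetical names, and the assumption that the failure surfaces as an `OSError` carrying `EPIPE` or `ECONNRESET` depends on the client library and its Thrift transport.

```python
import errno
import time


def call_with_reconnect(connect, operation, retries=3, delay=0.0):
    """Run operation(conn); if the socket is dead, reconnect and retry.

    `connect` is a hypothetical zero-argument factory that returns a fresh
    connection object. `operation` takes that connection and does the work.
    """
    conn = connect()
    for attempt in range(retries + 1):
        try:
            return operation(conn)
        except OSError as exc:
            # Assumption: a dropped connection shows up as EPIPE (Broken pipe)
            # or ECONNRESET. Anything else is re-raised unchanged.
            dropped = exc.errno in (errno.EPIPE, errno.ECONNRESET)
            if not dropped or attempt == retries:
                raise
            time.sleep(delay)
            conn = connect()  # the old socket is unusable; open a new one
```

With happybase, `connect` could be something like `lambda: happybase.Connection(host)`. Note that a retried scan starts over from the beginning unless the caller keeps track of the last row key it processed.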

Searching for similar reports turned up the following:

HBase / HBASE-14926

Hung ThriftServer; no timeout on read from client; if client crashes, worker thread gets stuck reading

Details

Type: Bug

Status: RESOLVED

Priority: Major

Resolution: Fixed

Affects Version/s: 2.0.0, 1.2.0, 1.1.2, 1.3.0, 1.0.3, 0.98.16

Fix Version/s: 2.0.0, 1.2.0, 1.3.0, 0.98.17

Component/s: Thrift

Labels: None

Hadoop Flags: Reviewed

Release Note: Adds a timeout to server read from clients. Adds new configs hbase.thrift.server.socket.read.timeout for setting read timeout on server socket in milliseconds. Default is 60000;

Description

Thrift server is hung. All worker threads are doing this:

"thrift-worker-0" daemon prio=10 tid=0x00007f0bb95c2800 nid=0xf6a7 runnable [0x00007f0b956e0000]

java.lang.Thread.State: RUNNABLE

at java.net.SocketInputStream.socketRead0(Native Method)

at java.net.SocketInputStream.read(SocketInputStream.java:152)

at java.net.SocketInputStream.read(SocketInputStream.java:122)

at java.io.BufferedInputStream.fill(BufferedInputStream.java:235)

at java.io.BufferedInputStream.read1(BufferedInputStream.java:275)

at java.io.BufferedInputStream.read(BufferedInputStream.java:334)

- locked <0x000000066d859490> (a java.io.BufferedInputStream)

at org.apache.thrift.transport.TIOStreamTransport.read(TIOStreamTransport.java:127)

at org.apache.thrift.transport.TTransport.readAll(TTransport.java:84)

at org.apache.thrift.transport.TFramedTransport.readFrame(TFramedTransport.java:129)

at org.apache.thrift.transport.TFramedTransport.read(TFramedTransport.java:101)

at org.apache.thrift.transport.TTransport.readAll(TTransport.java:84)

at org.apache.thrift.protocol.TCompactProtocol.readByte(TCompactProtocol.java:601)

at org.apache.thrift.protocol.TCompactProtocol.readMessageBegin(TCompactProtocol.java:470)

at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:27)

at org.apache.hadoop.hbase.thrift.TBoundedThreadPoolServer$ClientConnnection.run(TBoundedThreadPoolServer.java:289)

at org.apache.hadoop.hbase.thrift.CallQueue$Call.run(CallQueue.java:64)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)

at java.lang.Thread.run(Thread.java:745)

They never recover.

I don't have client side logs.

We've been here before: HBASE-4967 "connected client thrift sockets should have a server side read timeout" but this patch only got applied to fb branch (and thrift has changed since then)

PS: source: https://issues.apache.org/jira/browse/HBASE-14926

A related web page describes the same problem:

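Problem background

The test environment is a distributed Hadoop cluster running on three servers. Versions: hadoop-2.7.3, Hbase-1.2.4, zookeeper-3.4.9.

Data is written to HBase through the Thrift C++ interface. Every run starts out writing normally, then begins to report errors after a while.

The previously used hbase-0.94.27 never showed this problem with the same configuration.

[Image: Thrift interface error]

Solution

A packet capture shows that the HBase server responded with an RST packet, which terminated the connection.

The startup output of bin/hbase thrift start -threadpool shows that readTimeout is set to 60s.

[Image: thriftpool]

Testing confirmed that the problem is indeed tied to this setting. It had never been configured, and the code shows that 60s is the default, which applies whenever nothing is set.

So adding the setting to conf/hbase-site.xml is enough: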

<property>

<name>hbase.thrift.server.socket.read.timeout</name>

<value>6000000</value>

<description>Unit: milliseconds</description>

</property>

PS: source: http://blog.csdn.net/wwlhz/article/details/56012053

So I added the parameter and restarted the HBase Thrift server, and the problem went away.
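As a quick check of what a given hbase-site.xml will yield, the lookup-with-default behaviour can be sketched in a few lines. This is only an illustration of the idea, not HBase's own configuration loader: `get_int_property` is a hypothetical helper, and the XML text is an inline copy of the property shown above rather than a real file.

```python
import xml.etree.ElementTree as ET

KEY = "hbase.thrift.server.socket.read.timeout"
DEFAULT_MS = 60000  # default stated in the HBASE-14926 release note

# Inline stand-in for conf/hbase-site.xml (assumption: same layout as above).
SITE_XML = """
<configuration>
  <property>
    <name>hbase.thrift.server.socket.read.timeout</name>
    <value>6000000</value>
  </property>
</configuration>
"""


def get_int_property(site_xml, name, default):
    """Return the integer value of `name` from the XML text, else `default`."""
    root = ET.fromstring(site_xml)
    for prop in root.iter("property"):
        if (prop.findtext("name") or "").strip() == name:
            value = (prop.findtext("value") or "").strip()
            return int(value) if value else default
    return default


timeout_ms = get_int_property(SITE_XML, KEY, DEFAULT_MS)
print(timeout_ms, "ms =", timeout_ms / 60000, "minutes")
```

With the value from the quoted post, 6000000 ms works out to 100 minutes; a file without the property falls back to the 60000 ms default.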

#https://github.com/apache/hbase/blob/master/hbase-thrift/src/main/java/org/apache/hadoop/hbase/thrift/ThriftServerRunner.java

...

public static final String THRIFT_SERVER_SOCKET_READ_TIMEOUT_KEY =

"hbase.thrift.server.socket.read.timeout";

public static final int THRIFT_SERVER_SOCKET_READ_TIMEOUT_DEFAULT = 60000;

.

.

.

int readTimeout = conf.getInt(THRIFT_SERVER_SOCKET_READ_TIMEOUT_KEY,

THRIFT_SERVER_SOCKET_READ_TIMEOUT_DEFAULT);

TServerTransport serverTransport = new TServerSocket(

new TServerSocket.ServerSocketTransportArgs().

bindAddr(new InetSocketAddress(listenAddress, listenPort)).

backlog(backlog).

clientTimeout(readTimeout));

Problem solved.
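Since the effective value is printed when the Thrift server starts, one way to confirm that a restart picked up the new setting is to pull `readTimeout` out of the startup line. The sketch below uses the startup line from the log at the top of this post as sample input; `read_timeout_ms` is a hypothetical helper, and the regular expression assumes the log format stays as shown there.

```python
import re

# Startup line copied from the Thrift server log earlier in this post.
STARTUP_LINE = (
    "17/05/12 18:08:43 INFO thrift.ThriftServerRunner: starting "
    "TBoundedThreadPoolServer on /0.0.0.0:9090 with readTimeout 300000ms; "
    "min worker threads=128, max worker threads=1000, max queued requests=1000"
)


def read_timeout_ms(log_line):
    """Return the readTimeout in milliseconds from a startup line, or None."""
    match = re.search(r"readTimeout (\d+)ms", log_line)
    return int(match.group(1)) if match else None


print(read_timeout_ms(STARTUP_LINE))  # prints 300000
```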

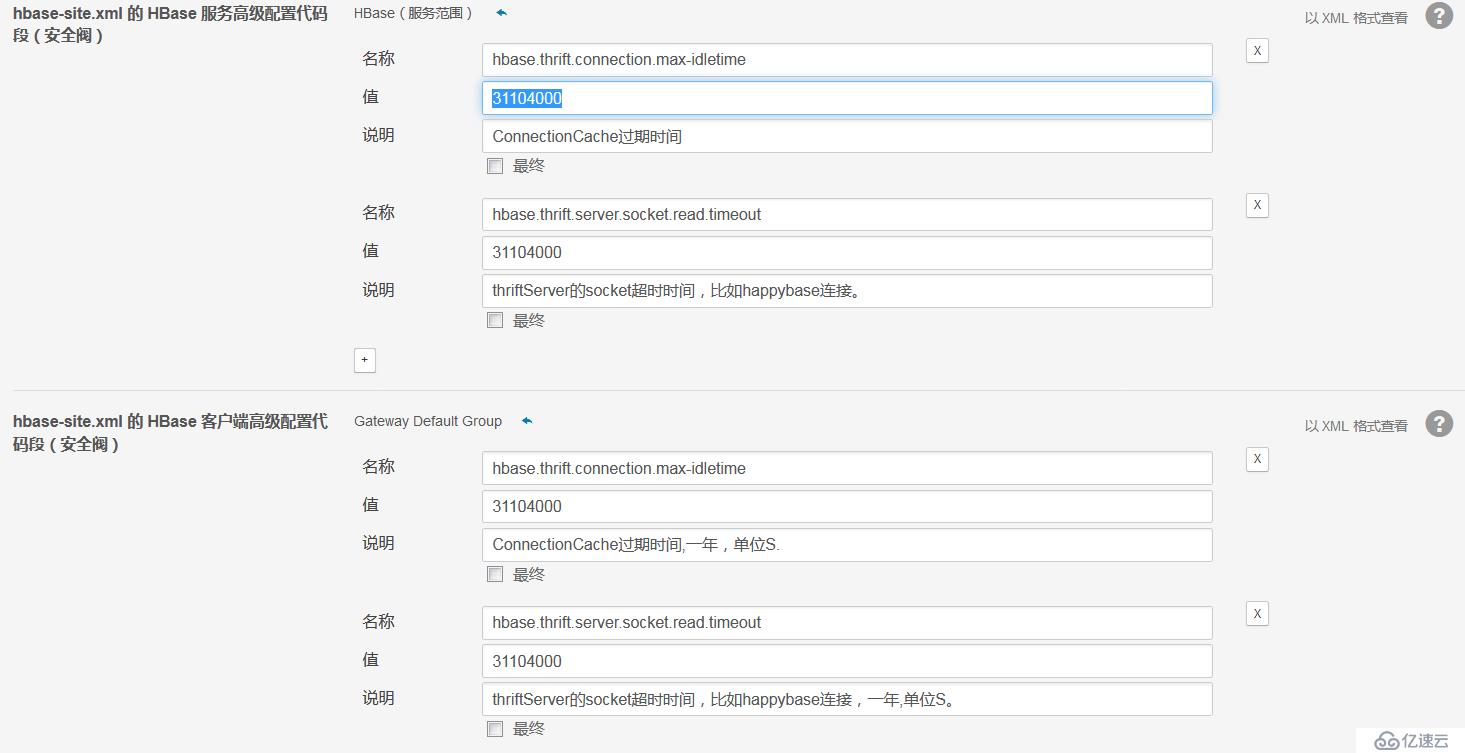

实际上还是有问题,一段时间发现连续scan大概20多分钟后,连接又被断开了,又是一次艰难的搜索,发现是hbase该版本自带的问题,它将所有连接(不管有没有在使用)都默认为idle的状态,然后有个hbase.thrift.connection.max-idletime的配置,所以我将此项配置为31104000(一年),如果是在CDH中,应该在管理页面配置,如图:
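The text does not say which unit hbase.thrift.connection.max-idletime uses, so it is worth working out what 31104000 means under each reading before relying on it. The sketch below is plain arithmetic; the choice of seconds versus milliseconds is an open assumption to verify against the HBase version and management UI in use.

```python
from datetime import timedelta

VALUE = 31104000  # the number used in the text above

# Interpretation 1 (assumption): the setting is in seconds.
as_seconds = timedelta(seconds=VALUE)

# Interpretation 2 (assumption): the setting is in milliseconds,
# like the read timeout discussed earlier.
as_millis = timedelta(milliseconds=VALUE)

print("if seconds:     ", as_seconds.days, "days")
print("if milliseconds:", as_millis.total_seconds() / 3600, "hours")
```

Read as seconds, the value is 360 days, which matches the "one year" intent. Read as milliseconds, it is under nine hours, which would still cut off a very long-running job.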

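General steps when you hit a problem:

If the goal is to grow technically:

1. Read the logs, find where the error is reported, and narrow down the problem

2. Read the official documentation

3. Google similar problems, or read the source code to pin down the cause

If the goal is a quick fix:

1. Read the logs, find where the error is reported, and narrow down the problem

2. Google similar problems

3. Read the official documentation, or read the source code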
