This article walks through how to integrate Kafka 2.2.0 with Spring Boot 2.x, step by step.

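Kafka has been evolving quickly in recent years, and it is used more and more often in enterprise systems. Integrating Kafka into Spring Boot is fairly simple, but you should pay attention to the versions in use and to Kafka's basic configuration. This part takes some care if you want to avoid the usual pitfalls.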

Spring Boot version: 2.1.4

Kafka version: 2.2.0

JDK: 1.8
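As an illustration of the "basic configuration" mentioned above: the `spring.kafka.*` properties used in this article correspond to the plain Kafka client settings. The following is a minimal sketch of that correspondence using only the JDK. The class `KafkaClientKeys` and its methods are hypothetical names introduced for illustration; the key names are the standard Kafka client property names, and the broker address and group id are the ones from this article's `application.properties`.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper (not part of Spring or Kafka): lists the plain Kafka
// client keys that correspond to the spring.kafka.* properties in this article.
final class KafkaClientKeys {

    // Consumer settings, keyed by the standard Kafka client property names.
    static Map<String, Object> consumerProps(String bootstrapServers, String groupId) {
        Map<String, Object> props = new LinkedHashMap<>();
        props.put("bootstrap.servers", bootstrapServers); // spring.kafka.bootstrap-servers
        props.put("group.id", groupId);                   // spring.kafka.consumer.group-id
        props.put("key.deserializer",                     // spring.kafka.consumer.key-deserializer
                "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer",                   // spring.kafka.consumer.value-deserializer
                "org.apache.kafka.common.serialization.StringDeserializer");
        return props;
    }

    // Producer settings, keyed by the standard Kafka client property names.
    static Map<String, Object> producerProps(String bootstrapServers) {
        Map<String, Object> props = new LinkedHashMap<>();
        props.put("bootstrap.servers", bootstrapServers); // spring.kafka.bootstrap-servers
        props.put("key.serializer",                       // spring.kafka.producer.key-serializer
                "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer",                     // spring.kafka.producer.value-serializer
                "org.apache.kafka.common.serialization.StringSerializer");
        return props;
    }

    public static void main(String[] args) {
        System.out.println(consumerProps("2.1.1.1:9092", "test-consumer-group"));
        System.out.println(producerProps("2.1.1.1:9092"));
    }
}
```

With Spring Boot's auto-configuration you normally never build these maps yourself; the sketch is only meant to make it easier to compare the Spring properties with Kafka's own documentation.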

pom.xml:

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>
        <parent>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-parent</artifactId>
            <version>2.1.4.RELEASE</version>
            <relativePath/> <!-- lookup parent from repository -->
        </parent>
        <groupId>com.example</groupId>
        <artifactId>demo</artifactId>
        <version>0.0.1-SNAPSHOT</version>
        <name>kafkademo</name>
        <description>Demo project for Spring Boot</description>

        <properties>
            <java.version>1.8</java.version>
        </properties>

        <dependencies>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-web</artifactId>
            </dependency>
            <dependency>
                <groupId>mysql</groupId>
                <artifactId>mysql-connector-java</artifactId>
                <scope>runtime</scope>
            </dependency>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-test</artifactId>
                <scope>test</scope>
            </dependency>
            <dependency>
                <groupId>org.springframework.kafka</groupId>
                <artifactId>spring-kafka</artifactId>
                <version>2.2.0.RELEASE</version>
            </dependency>
            <dependency>
                <groupId>com.google.code.gson</groupId>
                <artifactId>gson</artifactId>
                <version>2.7</version>
            </dependency>
        </dependencies>

        <build>
            <plugins>
                <plugin>
                    <groupId>org.springframework.boot</groupId>
                    <artifactId>spring-boot-maven-plugin</artifactId>
                </plugin>
            </plugins>
        </build>
    </project>

application.properties:

    spring.kafka.bootstrap-servers=2.1.1.1:9092

    spring.kafka.consumer.group-id=test-consumer-group
    spring.kafka.consumer.key-deserializer=org.apache.kafka.common.serialization.StringDeserializer
    spring.kafka.consumer.value-deserializer=org.apache.kafka.common.serialization.StringDeserializer
    spring.kafka.producer.key-serializer=org.apache.kafka.common.serialization.StringSerializer
    spring.kafka.producer.value-serializer=org.apache.kafka.common.serialization.StringSerializer
    #logging.level.root=debug

Messages.java:

    package com.example.demo.model;

    import java.util.Date;

    public class Messages {

        private Long id;
        private String msg;
        private Date sendTime;

        public Long getId() {
            return id;
        }

        public void setId(Long id) {
            this.id = id;
        }

        public String getMsg() {
            return msg;
        }

        public void setMsg(String msg) {
            this.msg = msg;
        }

        public Date getSendTime() {
            return sendTime;
        }

        public void setSendTime(Date sendTime) {
            this.sendTime = sendTime;
        }
    }

KafkaSender.java:

    package com.example.demo.service;

    import com.example.demo.model.Messages;
    import com.google.gson.Gson;
    import com.google.gson.GsonBuilder;
    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.kafka.core.KafkaTemplate;
    import org.springframework.kafka.support.SendResult;
    import org.springframework.stereotype.Service;
    import org.springframework.util.concurrent.ListenableFuture;

    import java.util.Date;

    @Service
    public class KafkaSender {

        @Autowired
        private KafkaTemplate<String, String> kafkaTemplate;

        private final Gson gson = new GsonBuilder().create();

        // Builds a sample message, serializes it to JSON and sends it to the "newtopic" topic.
        public void send() {
            Messages message = new Messages();
            message.setId(System.currentTimeMillis());
            message.setMsg("123");
            message.setSendTime(new Date());
            // send() is asynchronous; the returned future is not inspected in this demo.
            ListenableFuture<SendResult<String, String>> future = kafkaTemplate.send("newtopic", gson.toJson(message));
        }
    }

KafkaReceiver.java:

    package com.example.demo.service;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.springframework.kafka.annotation.KafkaListener;
    import org.springframework.stereotype.Service;

    import java.util.Optional;

    @Service
    public class KafkaReceiver {

        // Invoked for every record received from the "newtopic" topic.
        @KafkaListener(topics = {"newtopic"})
        public void listen(ConsumerRecord<?, ?> record) {
            // The record value may be null, so wrap it in an Optional before use.
            Optional<?> kafkaMessage = Optional.ofNullable(record.value());
            if (kafkaMessage.isPresent()) {
                Object message = kafkaMessage.get();
                System.out.println("record =" + record);
                System.out.println("message =" + message);
            }
        }
    }

In the startup method, simulate a message producer that sends messages to Kafka:

    package com.example.demo;

    import com.example.demo.service.KafkaSender;
    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;
    import org.springframework.context.ConfigurableApplicationContext;

    @SpringBootApplication
    public class KafkademoApplication {

        public static void main(String[] args) {
            ConfigurableApplicationContext context = SpringApplication.run(KafkademoApplication.class, args);
            KafkaSender sender = context.getBean(KafkaSender.class);
            // Send 1000 messages, pausing 300 ms between sends.
            for (int i = 0; i < 1000; i++) {
                sender.send();
                try {
                    Thread.sleep(300);
                } catch (InterruptedException e) {
                    e.printStackTrace();
                }
            }
        }
    }

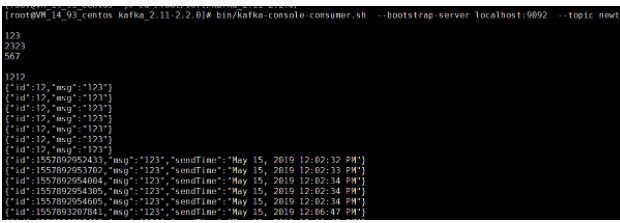
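The send loop in the startup method keeps running even if the thread is interrupted during `Thread.sleep`. As a possible variant (a sketch, not the article's code), the loop below stops on interruption and restores the interrupt flag. `PacedSender` and `sendRepeatedly` are hypothetical names, and a plain `Runnable` stands in for `sender::send` so the example runs with the JDK alone.

```java
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical helper: a paced send loop that stops cleanly when interrupted.
final class PacedSender {

    // Calls send.run() up to `count` times, sleeping `pauseMillis` after each call.
    // Returns how many calls were made; stops early if the thread is interrupted.
    static int sendRepeatedly(Runnable send, int count, long pauseMillis) {
        int sent = 0;
        for (int i = 0; i < count; i++) {
            send.run();
            sent++;
            try {
                Thread.sleep(pauseMillis);
            } catch (InterruptedException e) {
                // Restore the interrupt flag so callers can see it, then stop sending.
                Thread.currentThread().interrupt();
                break;
            }
        }
        return sent;
    }

    public static void main(String[] args) {
        AtomicInteger counter = new AtomicInteger();
        // counter::incrementAndGet is a stand-in for sender::send.
        int sent = sendRepeatedly(counter::incrementAndGet, 3, 10);
        System.out.println("sent " + sent + " messages");
    }
}
```

In the article's application the call would look like `sendRepeatedly(sender::send, 1000, 300)`, assuming the helper is on the classpath.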

Consuming the messages directly from the command line:

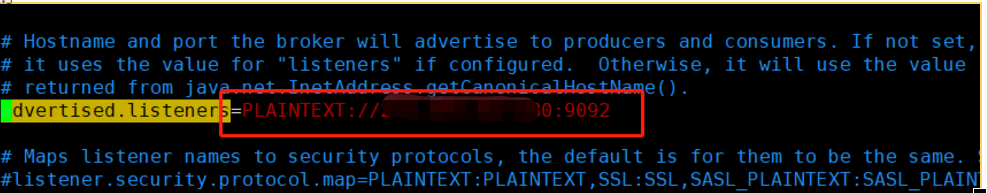

The producer times out when connecting to Kafka:

at org.apache.kafka.common.network.NetworkReceive.readFrom(NetworkReceive.java:119)

Solution:

In Kafka's server.properties, replace the original default configuration with the explicit ip:port form, then restart Kafka.
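Before changing the broker configuration, it can help to rule out a plain network problem. The sketch below only checks whether a TCP connection to the broker's host and port can be opened. `BrokerPortCheck` and `canConnect` are hypothetical names, and the default address is the one from this article's `application.properties`.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical diagnostic helper: checks basic TCP reachability of a broker address.
final class BrokerPortCheck {

    // Returns true if a TCP connection to host:port can be opened within timeoutMillis.
    static boolean canConnect(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Covers refused connections, timeouts and unresolvable host names.
            return false;
        }
    }

    public static void main(String[] args) {
        String host = args.length > 0 ? args[0] : "2.1.1.1";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 9092;
        System.out.println(host + ":" + port + " reachable: " + canConnect(host, port, 3000));
    }
}
```

A successful check only shows that the port is open. A Kafka client can still time out if the address the broker reports back to clients is not reachable from the client machine, which is why the broker-side change described above may still be needed.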
