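This article walks through installing and configuring Hadoop 2.7.1 in fully distributed mode: the configuration files, formatting and starting the cluster, verifying the daemons, and a startup error you may run into.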

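Environment: VirtualBox 5 (three virtual machines), CentOS 7, Hadoop 2.7.1.

Prerequisite: complete "Hadoop 2.7.1 Distributed Installation: Preparation" first.

Note: starting with Hadoop 2.5.1 you no longer need to compile a 64-bit libhadoop.so.1.0.0 yourself. Earlier Hadoop releases shipped a 32-bit library, so a 64-bit one had to be built from source; see "Hadoop 2.4.1 Distributed Installation" for details.

Download the Hadoop archive from the Apache Hadoop website and extract it to the local directory /home/wukong/local/hadoop-2.7.1/

On the machine that will act as the NameNode, download the Hadoop archive with wget or any other method and extract it to the chosen local directory. For the download and extraction commands, see "Common Linux Commands".

Seven configuration files need to be edited. They are all located in /home/wukong/local/hadoop-2.7.1/etc/hadoop and are described one by one below: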
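The download-and-extract step could be sketched roughly as follows. The helper names `hadoop_url` and `fetch_hadoop` are hypothetical, and the archive.apache.org URL layout and the /tmp download location are assumptions for illustration; any mirror listed on the Apache Hadoop site works the same way.

```shell
# Sketch only: helper names, the archive URL layout, and /tmp as the
# download location are assumptions, not part of the original article.

hadoop_url() {
    # $1 = Hadoop version, e.g. 2.7.1
    printf 'https://archive.apache.org/dist/hadoop/common/hadoop-%s/hadoop-%s.tar.gz\n' "$1" "$1"
}

fetch_hadoop() {
    # $1 = version, $2 = target directory (created if missing)
    version=$1
    target=$2
    mkdir -p "$target" || return 1
    wget -O "/tmp/hadoop-$version.tar.gz" "$(hadoop_url "$version")" || return 1
    # The tarball unpacks into a top-level hadoop-<version>/ directory.
    tar -xzf "/tmp/hadoop-$version.tar.gz" -C "$target"
}
```

Calling `fetch_hadoop 2.7.1 "$HOME/local"` as user wukong should leave the release under /home/wukong/local/hadoop-2.7.1/, the directory used throughout this article.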

hadoop-env.sh

    # Required
    # The java implementation to use.
    export JAVA_HOME=/opt/jdk1.7.0_79
    # Optional. These are virtual machines, so the heap sizes are kept small.
    # The maximum amount of heap to use, in MB. Default is 1000.
    export HADOOP_HEAPSIZE=500
    export HADOOP_NAMENODE_INIT_HEAPSIZE="100"

yarn-env.sh

    # some Java parameters
    export JAVA_HOME=/opt/jdk1.7.0_79
    if [ "$JAVA_HOME" != "" ]; then
      #echo "run java in $JAVA_HOME"
      JAVA_HOME=$JAVA_HOME
    fi
    if [ "$JAVA_HOME" = "" ]; then
      echo "Error: JAVA_HOME is not set."
      exit 1
    fi
    JAVA=$JAVA_HOME/bin/java
    # The default JAVA_HEAP_MAX is -Xmx1000m; these VMs do not have that much memory, so it is reduced.
    JAVA_HEAP_MAX=-Xmx600m

slaves

    # List the slave nodes here by hostname, one per line.
    bd02
    bd03

core-site.xml

    <configuration>
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://bd01:9000</value>
      </property>
      <property>
        <name>io.file.buffer.size</name>
        <value>131072</value>
      </property>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>file:/home/wukong/local/hdp-data/tmp</value>
        <description>Abase for other temporary directories.</description>
      </property>
      <property>
        <name>hadoop.proxyuser.hduser.hosts</name>
        <value>*</value>
      </property>
      <property>
        <name>hadoop.proxyuser.hduser.groups</name>
        <value>*</value>
      </property>
    </configuration>

The hdp-data directory does not exist by default and must be created manually.
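Creating the directory tree can be sketched as below. The function name `make_hdp_dirs` is hypothetical; the subdirectory names `tmp` and `name` are taken from the `hadoop.tmp.dir` and `dfs.namenode.name.dir` values in this article, so adjust them if your paths differ.

```shell
# Sketch only: make_hdp_dirs is a hypothetical helper, not a Hadoop command.

make_hdp_dirs() {
    # $1 = base data directory; remaining arguments = subdirectories to create
    hdp_base=$1
    shift
    for hdp_sub in "$@"; do
        # -p also creates the base directory itself if it is missing
        mkdir -p "$hdp_base/$hdp_sub" || return 1
    done
}
```

For the paths used here, the call would be `make_hdp_dirs /home/wukong/local/hdp-data tmp name`, run as the user that starts Hadoop so that the daemons can write to the directories.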

hdfs-site.xml

    <configuration>
      <property>
        <name>dfs.namenode.secondary.http-address</name>
        <value>bd01:9001</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:/home/wukong/local/hdp-data/name</value>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>file:/home/wukong/a_usr/hdp-data/data</value>
      </property>
      <property>
        <name>dfs.replication</name>
        <value>3</value>
      </property>
      <property>
        <name>dfs.webhdfs.enabled</name>
        <value>true</value>
      </property>
    </configuration>

mapred-site.xml

    <configuration>
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>
      <property>
        <name>mapreduce.jobhistory.address</name>
        <value>bd01:10020</value>
      </property>
      <property>
        <name>mapreduce.jobhistory.webapp.address</name>
        <value>bd01:19888</value>
      </property>
    </configuration>

yarn-site.xml

    <configuration>
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
        <value>org.apache.hadoop.mapred.ShuffleHandler</value>
      </property>
      <property>
        <name>yarn.resourcemanager.address</name>
        <value>bd01:8032</value>
      </property>
      <property>
        <name>yarn.resourcemanager.scheduler.address</name>
        <value>bd01:8030</value>
      </property>
      <property>
        <name>yarn.resourcemanager.resource-tracker.address</name>
        <value>bd01:8031</value>
      </property>
      <property>
        <name>yarn.resourcemanager.admin.address</name>
        <value>bd01:8033</value>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.address</name>
        <value>bd01:8088</value>
      </property>
    </configuration>
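Hand-edited site files are easy to break with a mistyped closing tag. The following is a crude sanity check, not a real XML parser: it only compares how often a few opening and closing tags occur. The function names `count_tag` and `check_site_xml` are hypothetical.

```shell
# Sketch only: a rough tag-count check with hypothetical helper names.
# It does not validate XML; it just catches mismatched tag counts.

count_tag() {
    # $1 = literal tag text, $2 = file; prints the number of occurrences
    grep -o -F -- "$1" "$2" | wc -l | tr -d ' '
}

check_site_xml() {
    # $1 = path to a *-site.xml file
    file=$1
    for tag in configuration property name value; do
        open=$(count_tag "<$tag>" "$file")
        close=$(count_tag "</$tag>" "$file")
        if [ "$open" -ne "$close" ]; then
            echo "$file: <$tag> opened $open time(s), closed $close time(s)"
            return 1
        fi
    done
    echo "$file: tags balanced"
}
```

A loop such as `for f in /home/wukong/local/hadoop-2.7.1/etc/hadoop/*-site.xml; do check_site_xml "$f"; done` would run the check over all four site files before they are copied to the other nodes.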

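Once the files are configured, copy the Hadoop directory to the other nodes. For how to copy files to a remote machine, see "Common Linux Commands".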
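One way to do the remote copy is to generate one `scp` command per host listed in the slaves file. The sketch below only prints the commands so they can be reviewed first; the function name `print_copy_cmds` is hypothetical, and it assumes the same directory layout and passwordless SSH on every node.

```shell
# Sketch only: print_copy_cmds is a hypothetical helper that prints,
# but does not run, one scp command per slave host.

print_copy_cmds() {
    # $1 = slaves file, $2 = local Hadoop directory to copy
    slaves=$1
    src=$2
    dest=$(dirname "$src")
    while IFS= read -r host || [ -n "$host" ]; do
        case $host in
            ''|'#'*) continue ;;   # skip blank lines and comment lines
        esac
        echo "scp -r $src $host:$dest/"
    done < "$slaves"
}
```

With the slaves file from this article, `print_copy_cmds etc/hadoop/slaves /home/wukong/local/hadoop-2.7.1` prints a command for bd02 and one for bd03; piping the output to `sh` would execute them.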

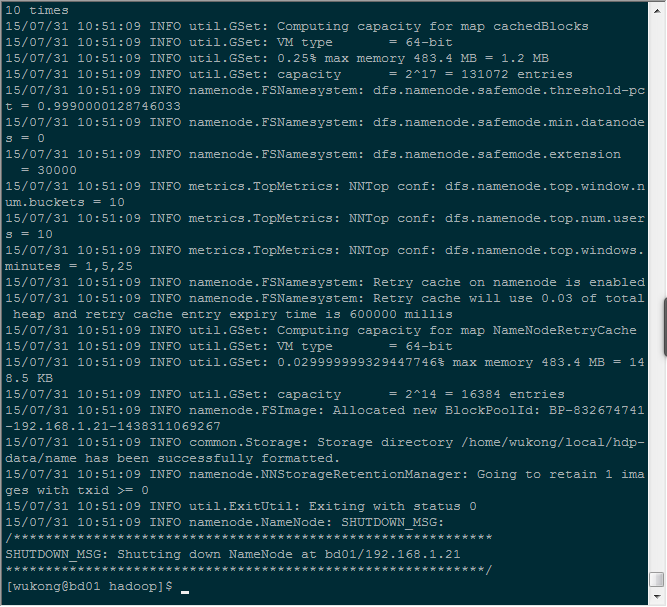

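Format HDFS on the NameNode machine (bd01):

    [wukong@bd01 hadoop-2.7.1]$ hdfs namenode -format

If the command finishes without throwing an exception and the following line appears in the output, the format succeeded: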

15/07/31 10:51:09 INFO common.Storage: Storage directory /home/wukong/local/hdp-data/name has been successfully formatted.

    [wukong@bd01 ~]$ start-dfs.sh
    Starting namenodes on [bd01]
    bd01: starting namenode, logging to /home/wukong/local/hadoop-2.7.1/logs/hadoop-wukong-namenode-bd01.out
    bd02: starting datanode, logging to /home/wukong/local/hadoop-2.7.1/logs/hadoop-wukong-datanode-bd02.out
    bd03: starting datanode, logging to /home/wukong/local/hadoop-2.7.1/logs/hadoop-wukong-datanode-bd03.out
    Starting secondary namenodes [bd01]
    bd01: starting secondarynamenode, logging to /home/wukong/local/hadoop-2.7.1/logs/hadoop-wukong-secondarynamenode-bd01.out
    [wukong@bd01 ~]$

Use jps and the logs to check whether startup succeeded. jps lists the Java processes running on a machine. In a healthy cluster the master should show NameNode and SecondaryNameNode, and each slave should show DataNode.

    [wukong@bd01 hadoop]$ jps
    5224 Jps
    5074 SecondaryNameNode
    4923 NameNode

    [wukong@bd02 ~]$ jps
    2307 Jps
    2206 DataNode

    [wukong@bd03 ~]$ jps
    2298 Jps
    2198 DataNode
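The jps check can also be scripted. The sketch below takes captured jps output and a list of expected daemon names and reports any that are missing; `missing_daemons` is a hypothetical helper, not part of Hadoop or the JDK.

```shell
# Sketch only: missing_daemons is a hypothetical helper that inspects
# text in the "<pid> <MainClass>" format printed by jps.

missing_daemons() {
    # $1 = captured jps output; remaining arguments = daemons expected to run
    jps_out=$1
    shift
    missing=""
    for daemon in "$@"; do
        # Compare the whole second field, so NameNode does not match SecondaryNameNode.
        if ! printf '%s\n' "$jps_out" | awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            missing="$missing $daemon"
        fi
    done
    # Print the missing names (if any) without the leading space.
    echo "${missing# }"
    [ -z "$missing" ]
}
```

On the master this would be called as `missing_daemons "$(jps)" NameNode SecondaryNameNode`, and on a slave as `missing_daemons "$(jps)" DataNode`. The function returns a non-zero status when something is missing, so it can gate later steps.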

    [wukong@bd01 ~]$ start-yarn.sh
    starting yarn daemons
    starting resourcemanager, logging to /home/wukong/local/hadoop-2.7.1/logs/yarn-wukong-resourcemanager-bd01.out
    bd03: starting nodemanager, logging to /home/wukong/local/hadoop-2.7.1/logs/yarn-wukong-nodemanager-bd03.out
    bd02: starting nodemanager, logging to /home/wukong/local/hadoop-2.7.1/logs/yarn-wukong-nodemanager-bd02.out
    [wukong@bd01 ~]$

Again, use jps and the logs to verify that startup succeeded.

    [wukong@bd01 ~]$ jps
    5830 ResourceManager
    6106 Jps
    5074 SecondaryNameNode
    4923 NameNode
    [wukong@bd01 ~]$

    [wukong@bd02 ~]$ jps
    4615 Jps
    2206 DataNode
    4502 NodeManager
    [wukong@bd02 ~]$

    [wukong@bd03 ~]$ jps
    4608 Jps
    4495 NodeManager
    2198 DataNode
    [wukong@bd03 ~]$

If JAVA_HOME is not set in hadoop-env.sh, start-dfs.sh fails on every node as shown below, even though java itself is installed and on the PATH:

    [wukong@bd01 ~]$ start-dfs.sh
    Starting namenodes on [bd01]
    The authenticity of host 'bd01 (192.168.1.21)' can't be established.
    ECDSA key fingerprint is af:96:74:e1:41:ec:af:ec:d8:8e:df:cd:99:61:33:0d.
    Are you sure you want to continue connecting (yes/no)? yes
    bd01: Warning: Permanently added 'bd01,192.168.1.21' (ECDSA) to the list of known hosts.
    bd01: Error: JAVA_HOME is not set and could not be found.
    bd03: Error: JAVA_HOME is not set and could not be found.
    bd02: Error: JAVA_HOME is not set and could not be found.
    Starting secondary namenodes [bd01]
    bd01: Error: JAVA_HOME is not set and could not be found.
    [wukong@bd01 some_log]$ java -version
    java version "1.7.0_79"
    Java(TM) SE Runtime Environment (build 1.7.0_79-b15)
    Java HotSpot(TM) 64-Bit Server VM (build 24.79-b02, mixed mode)
    [wukong@bd01 ~]$
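A likely cause of this error is that hadoop-env.sh has no literal JAVA_HOME path, for example because it still contains `export JAVA_HOME=${JAVA_HOME}`, which expands to nothing in the non-interactive SSH sessions the start scripts use. The sketch below is a rough grep-based check; the function name `has_java_home` is hypothetical and the pattern is deliberately simple.

```shell
# Sketch only: has_java_home is a hypothetical helper. It treats any
# uncommented "export JAVA_HOME=" whose value does not start with "$"
# as a literal path, which is a simplification.

has_java_home() {
    # $1 = path to hadoop-env.sh
    grep -Eq '^[[:space:]]*export[[:space:]]+JAVA_HOME=[^$[:space:]]' "$1"
}
```

Running `has_java_home /home/wukong/local/hadoop-2.7.1/etc/hadoop/hadoop-env.sh || echo "set JAVA_HOME in hadoop-env.sh"` on each node is a quick way to spot a machine where the required setting from the hadoop-env.sh step is missing.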

    # .bash_profile
    # Get the aliases and functions
    if [ -f ~/.bashrc ]; then
      . ~/.bashrc
    fi
    # Add the Hadoop executables and scripts to the PATH
    PATH=$PATH:$HOME/local/hadoop-2.7.1/bin:$HOME/local/hadoop-2.7.1/sbin
    export PATH
