这篇文章将为大家详细讲解有关hadoop-002中Eclipse如何运行WordCount,小编觉得挺实用的,因此分享给大家做个参考,希望大家阅读完这篇文章后可以有所收获。

1、如过提示 eclipse 无法编译 文件 ,提示对某文件无权限。

chmod -R 777 workspace

2、在eclipse中跑Hadoop测试用例时,出现这样的错误

Exception in thread "main" org.apache.hadoop.mapred.InvalidInputException: Input path does not exist:

原因是系统没有找到hadoop的配置文件,

对于2.5.2就是core-site.xml

其中指定了

fs.defaultFS的配置

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

解决方法:

JobConf conf = new JobConf(WordCount.class);

conf.setJobName("wordcount");

//conf.set("fs.defaultFS", "hdfs://localhost:9000");

//conf.addResource(new Path("/opt/hadoop/etc/hadoop/core-site.xml"));

任选注释掉代码其中的一行执行即可。

完整代码如下:

package com.zwh;

import java.io.IOException;

import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.GenericOptionsParser;

public class WordCount {

public static class TokenizerMapper

extends Mapper<Object, Text, Text, IntWritable>{

private final static IntWritable one = new IntWritable(1);

private Text word = new Text();

public void map(Object key, Text value, Context context

) throws IOException, InterruptedException {

StringTokenizer itr = new StringTokenizer(value.toString());

while (itr.hasMoreTokens()) {

word.set(itr.nextToken());

context.write(word, one);

}

}

}

public static class IntSumReducer

extends Reducer<Text,IntWritable,Text,IntWritable> {

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterable<IntWritable> values,

Context context

) throws IOException, InterruptedException {

int sum = 0;

for (IntWritable val : values) {

sum += val.get();

}

result.set(sum);

context.write(key, result);

}

}

public static void main(String[] args) throws Exception {

Configuration conf = new Configuration();

conf.set("fs.defaultFS", "hdfs://localhost:9000");

Job job = new Job(conf, "word count");

job.setJarByClass(WordCount.class);

job.setMapperClass(TokenizerMapper.class);

job.setCombinerClass(IntSumReducer.class);

job.setReducerClass(IntSumReducer.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

FileInputFormat.addInputPath(job, new Path("/user/root/input/"));

FileOutputFormat.setOutputPath(job,new Path("/user/root/output/wc"));

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

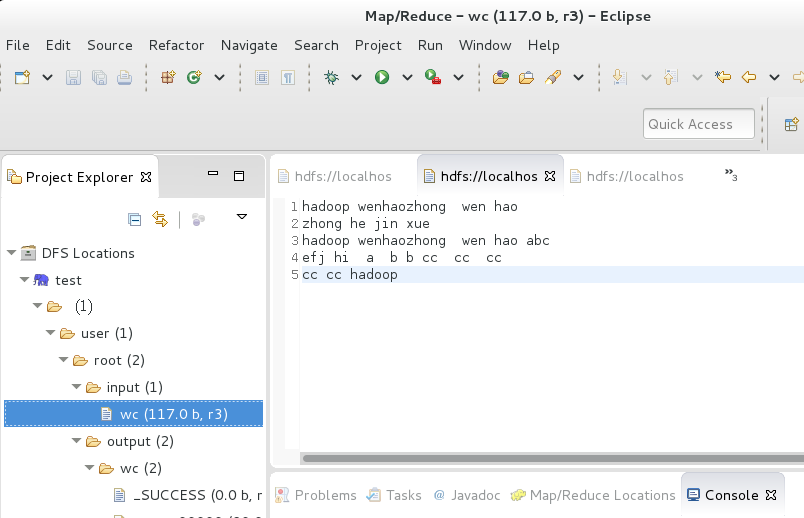

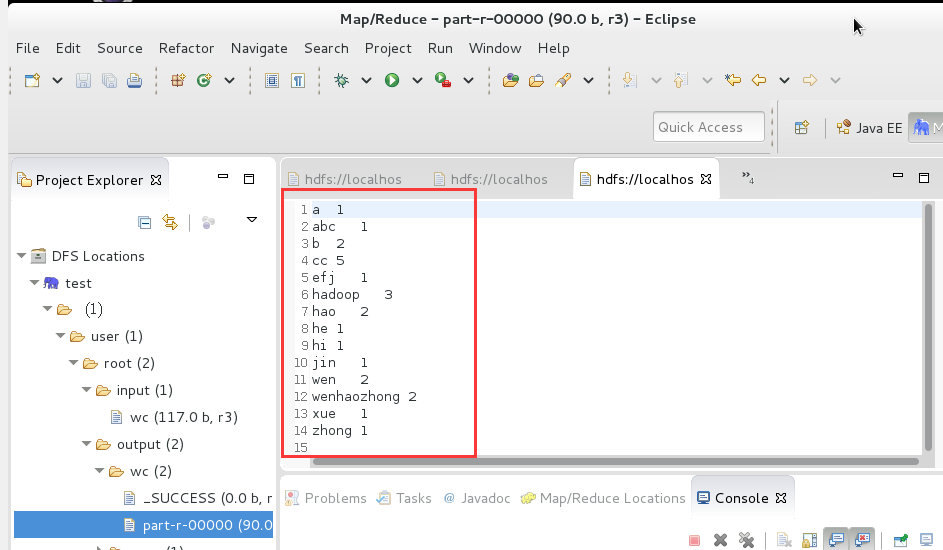

}示意图

关于“hadoop-002中Eclipse如何运行WordCount”这篇文章就分享到这里了,希望以上内容可以对大家有一定的帮助,使各位可以学到更多知识,如果觉得文章不错,请把它分享出去让更多的人看到。

免责声明:本站发布的内容(图片、视频和文字)以原创、转载和分享为主,文章观点不代表本网站立场,如果涉及侵权请联系站长邮箱:is@yisu.com进行举报,并提供相关证据,一经查实,将立刻删除涉嫌侵权内容。