1 node(s) had taints that the pod didn't tolerate.

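One node in the cluster was tainted. Checking the node information showed that one of the nodes had not come up. Because the StatefulSet in zk.yaml requires pod anti-affinity on kubernetes.io/hostname, each replica needs its own schedulable node, so only 2 of the 3 pods could start.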

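2 Pitfalls with PV and PVC

A single zk.yaml creates three ZooKeeper instances. Because of how a ZooKeeper ensemble works, the three instances need three separate data directories (the same applies if you add log directories); otherwise the cluster will not come up properly.

So three different PVs have to be created.

Within one zk.yaml, the PVCs must bind to three different backend PVs. With the traditional approach of creating a PVC and referencing it by name in zk.yaml, only one name can be given, so binding to three different PVs is not possible. This is what volumeClaimTemplates (a PVC template) is for. The template's accessModes must be ReadWriteOnce here, because the PVs are declared with ReadWriteOnce; with ReadWriteMany the binding fails.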

    volumeClaimTemplates:
    - metadata:
        name: datadir
      spec:
        accessModes: [ "ReadWriteOnce" ]
        resources:
          requests:
            storage: 1Gi

With dynamic PVC provisioning, a PVC template is needed in the same way.
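As a rough sketch of the dynamic case, the template below differs from the static one only in naming a StorageClass. The name nfs-client is a hypothetical placeholder, not something taken from the original setup, and the sketch assumes a provisioner backing that class is already installed in the cluster.

```yaml
# Fragment of a StatefulSet spec (a sketch, not a complete manifest).
volumeClaimTemplates:
- metadata:
    name: datadir
  spec:
    accessModes: [ "ReadWriteOnce" ]
    # Assumption: "nfs-client" is a hypothetical StorageClass name;
    # replace it with a class that actually exists in your cluster.
    storageClassName: nfs-client
    resources:
      requests:
        storage: 1Gi
```

For a StatefulSet named zok, the controller creates one PVC per replica from this template (datadir-zok-0, datadir-zok-1, datadir-zok-2), and the provisioner is then expected to create a matching PV for each.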

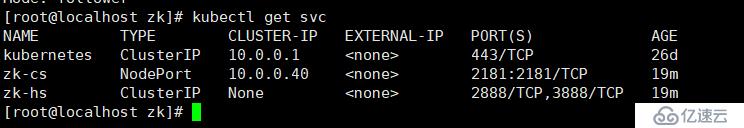

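3 Exposing ZooKeeper for external access

Because ZooKeeper is deployed with a StatefulSet, it uses a headless Service, which is not reachable from outside the cluster. How can it be exposed externally? The solution is to add a separate Service dedicated to external access.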
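A minimal sketch of such a Service is shown here, assuming the NodePort type. The name zk-external and the node port 32181 are illustrative assumptions; 32181 is chosen only because it lies inside the default NodePort range of 30000-32767.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: zk-external        # hypothetical name, for illustration only
  labels:
    app: zk
spec:
  type: NodePort
  selector:
    app: zk                # same label the ZooKeeper pods carry
  ports:
  - name: client
    port: 2181             # Service port inside the cluster
    targetPort: 2181       # ZooKeeper client port in the container
    nodePort: 32181        # assumption: any free port in the default 30000-32767 range
```

A client outside the cluster would then connect to the IP of any node on port 32181. A node port below 30000, such as 2181, is only accepted if the API server's --service-node-port-range flag has been widened to include it.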

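1 Install and configure NFS on 192.168.0.11, then start the NFS service

2 Install NFS on every node (the nodes need the NFS client in order to mount the volumes)

3 Create the PVs used by zk on the master node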

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: zk-data1
    spec:
      capacity:
        storage: 1Gi
      accessModes:
        - ReadWriteOnce
      nfs:
        server: 192.168.0.11
        path: /data/zk/data1
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: zk-data2
    spec:
      capacity:
        storage: 1Gi
      accessModes:
        - ReadWriteOnce
      nfs:
        server: 192.168.0.11
        path: /data/zk/data2
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: zk-data3
    spec:
      capacity:
        storage: 1Gi
      accessModes:
        - ReadWriteOnce
      nfs:
        server: 192.168.0.11
        path: /data/zk/data3

4 On the master node, create the YAML file that creates the zookeeper pods

See the official documentation: https://kubernetes.io/docs/tutorials/stateful-application/zookeeper/

The image in the official documentation is hosted overseas, so I found a usable mirror online; it is the same as the official image.

    [root@localhost zk]# cat zk.yaml
    apiVersion: v1
    kind: Service
    metadata:
      name: zk-hs
      labels:
        app: zk
    spec:
      ports:
      - port: 2888
        name: server
      - port: 3888
        name: leader-election
      clusterIP: None
      selector:
        app: zk
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: zk-cs
      labels:
        app: zk
    spec:
      type: NodePort
      ports:
      - port: 2181
        targetPort: 2181
        name: client
        nodePort: 2181
      selector:
        app: zk
    ---
    apiVersion: policy/v1beta1
    kind: PodDisruptionBudget
    metadata:
      name: zk-pdb
    spec:
      selector:
        matchLabels:
          app: zk
      maxUnavailable: 1
    ---
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: zok
    spec:
      serviceName: zk-hs
      replicas: 3
      selector:
        matchLabels:
          app: zk
      template:
        metadata:
          labels:
            app: zk
        spec:
          affinity:
            podAntiAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                - labelSelector:
                    matchExpressions:
                      - key: "app"
                        operator: In
                        values:
                        - zk
                  topologyKey: "kubernetes.io/hostname"
          containers:
          - name: kubernetes-zookeeper
            imagePullPolicy: Always
            image: leolee32/kubernetes-library:kubernetes-zookeeper1.0-3.4.10
            resources:
              requests:
                memory: "1Gi"
                cpu: "0.5"
            ports:
            - containerPort: 2181
              name: client
            - containerPort: 2888
              name: server
            - containerPort: 3888
              name: leader-election
            command:
            - sh
            - -c
            - "start-zookeeper \
              --servers=3 \
              --data_dir=/var/lib/zookeeper/data \
              --data_log_dir=/var/lib/zookeeper/data/log \
              --conf_dir=/opt/zookeeper/conf \
              --client_port=2181 \
              --election_port=3888 \
              --server_port=2888 \
              --tick_time=2000 \
              --init_limit=10 \
              --sync_limit=5 \
              --heap=512M \
              --max_client_cnxns=60 \
              --snap_retain_count=3 \
              --purge_interval=12 \
              --max_session_timeout=40000 \
              --min_session_timeout=4000 \
              --log_level=INFO"
            readinessProbe:
              exec:
                command:
                - sh
                - -c
                - "zookeeper-ready 2181"
              initialDelaySeconds: 10
              timeoutSeconds: 5
            livenessProbe:
              exec:
                command:
                - sh
                - -c
                - "zookeeper-ready 2181"
              initialDelaySeconds: 10
              timeoutSeconds: 5
            volumeMounts:
            - name: datadir
              mountPath: /var/lib/zookeeper
      volumeClaimTemplates:
      - metadata:
          name: datadir
        spec:
          accessModes: [ "ReadWriteOnce" ]
          resources:
            requests:
              storage: 1Gi
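One caveat for newer clusters: the PodDisruptionBudget in zk.yaml is declared under policy/v1beta1, an API version that was removed in Kubernetes 1.25. On such clusters the same object would need to be declared under policy/v1, roughly as sketched here. The fields are copied from the original manifest; only apiVersion differs.

```yaml
# Sketch for Kubernetes 1.21 and later, where policy/v1 is available.
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: zk-pdb
spec:
  selector:
    matchLabels:
      app: zk
  # Allow at most one ZooKeeper pod to be down during voluntary disruptions,
  # so a 3-node ensemble keeps its quorum of 2.
  maxUnavailable: 1
```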

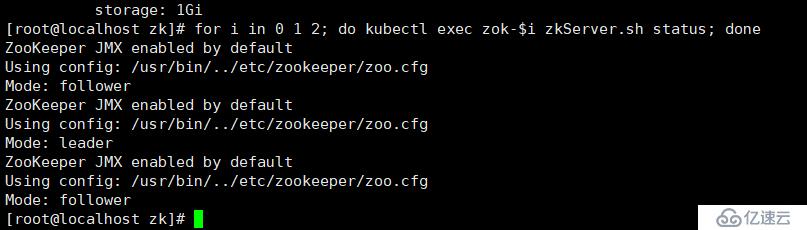

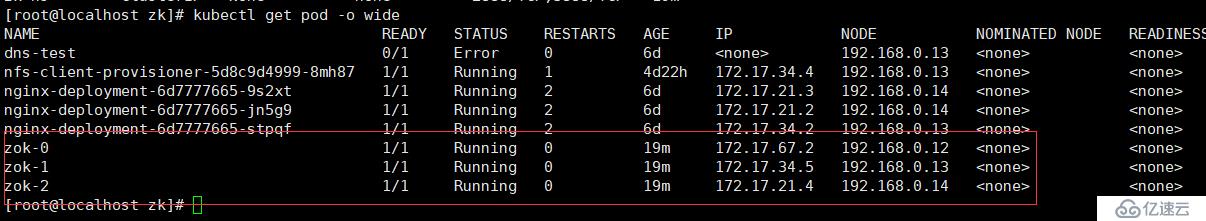

5 Check the cluster status

    for i in 0 1 2; do kubectl exec zok-$i -- zkServer.sh status; done

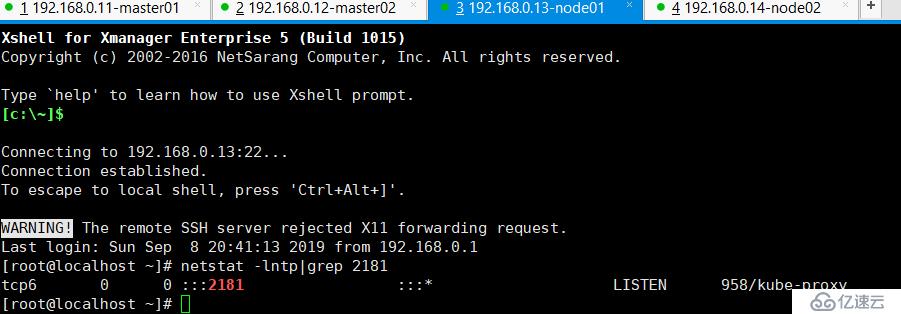

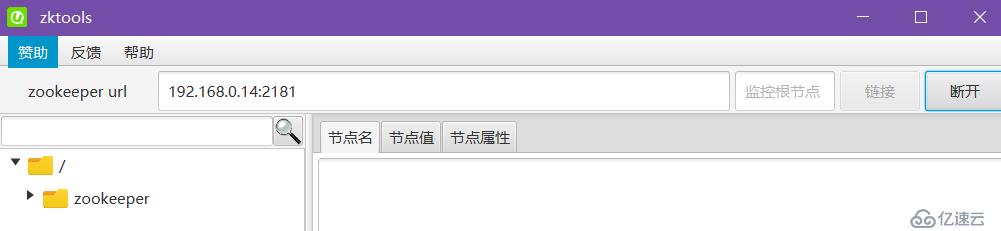

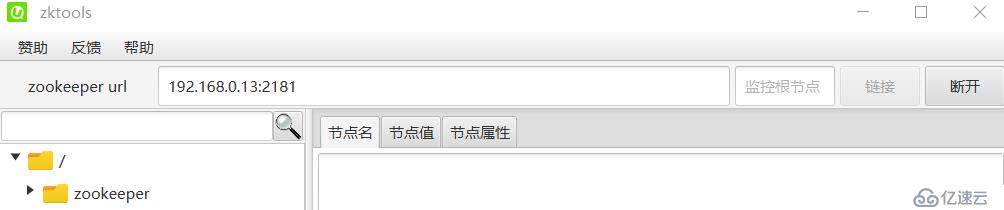

6 Access the ZooKeeper cluster from outside (from a local computer)
