本篇内容介绍了“怎么联合使用Spark Streaming、Broadcast、Accumulaor”的有关知识,在实际案例的操作过程中,不少人都会遇到这样的困境,接下来就让小编带领大家学习一下如何处理这些情况吧!希望大家仔细阅读,能够学有所成!

广播可以自定义,通过Broadcast、Accumulator联合可以完成复杂的业务逻辑。

以下代码实现在本机9999端口监听,并向连接上的客户端发送单词,其中包含黑名单的单词Hadoop,Mahout和Hive。

package org.scala.opt

case class ServerThread(socket : Socket) extends Thread("ServerThread") { |

以下代码实现接收本机9999端口发送的单词,统计黑名单出现的次数的功能。

package com.dt.spark.streaming_scala println("BlackList word %s appeared".formatted(wordPair._1)) |

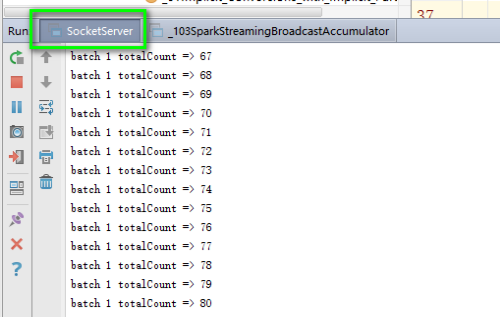

Server发送端日志如下,不断打印输出的次数。

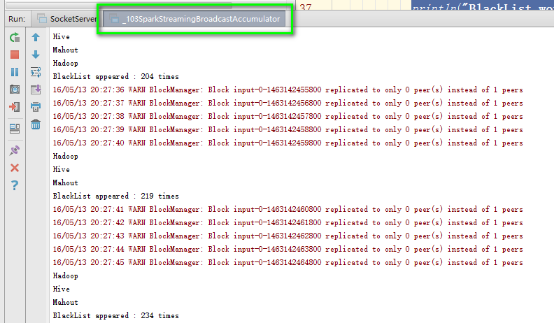

Spark Streaming端打印黑名单的单词及出现的次数。

“怎么联合使用Spark Streaming、Broadcast、Accumulaor”的内容就介绍到这里了,感谢大家的阅读。如果想了解更多行业相关的知识可以关注亿速云网站,小编将为大家输出更多高质量的实用文章!

亿速云「云服务器」,即开即用、新一代英特尔至强铂金CPU、三副本存储NVMe SSD云盘,价格低至29元/月。点击查看>>

免责声明:本站发布的内容(图片、视频和文字)以原创、转载和分享为主,文章观点不代表本网站立场,如果涉及侵权请联系站长邮箱:is@yisu.com进行举报,并提供相关证据,一经查实,将立刻删除涉嫌侵权内容。

原文链接:https://my.oschina.net/u/928448/blog/674919